Anaconda, which has provided infrastructure to the Python community for more than a decade, has launched Anaconda Desktop in public beta: a single application designed for AI development.

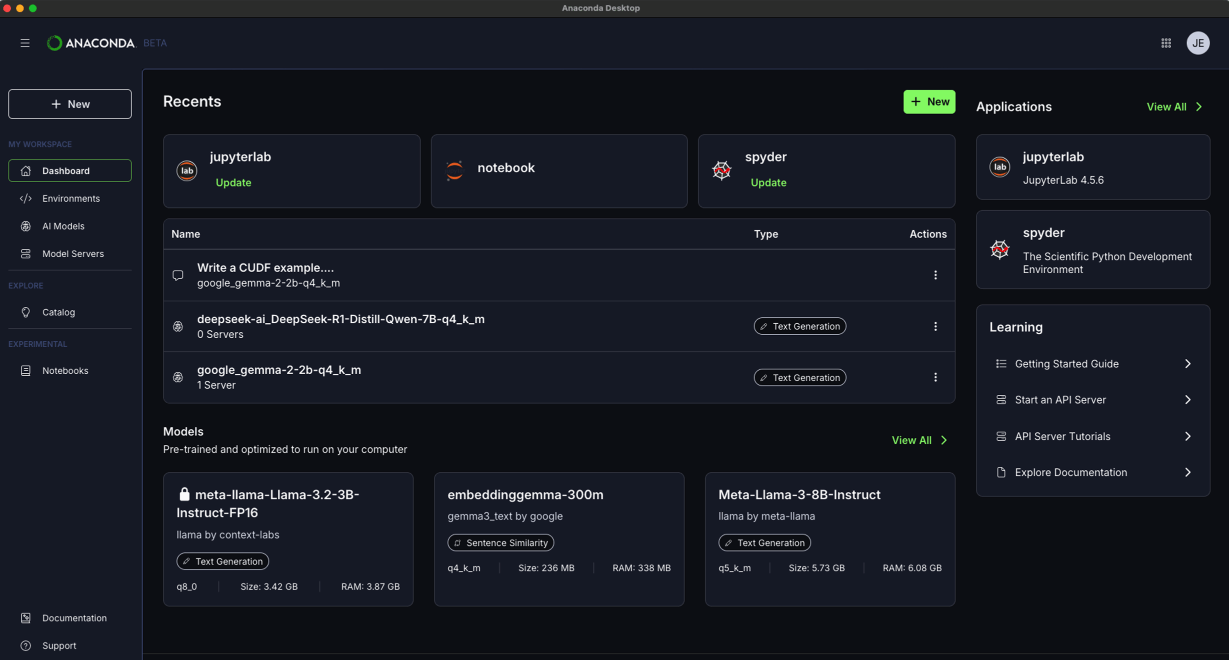

The application is built to unify the previously fragmented workflow of managing large language models (LLMs) by bringing model discovery, local inference, and conda environment management together in one place. It serves as a central workspace, replacing the cobbled-together solutions often used for local AI stacks, which typically involved a separate model hub, an inference tool, and an API layer.

The tool is aimed at data science students, researchers, and engineers who previously relied on Anaconda Navigator to get their work off the ground.

The release addresses the growing complexity of modern data science and development, where LLMs have moved to the center of projects and developers must manage servers and API layers alongside their package managers. Anaconda Desktop tackles this by extending the functionality of the older Navigator to the full AI development workflow, with the goal of increasing developer velocity in a practical and secure way. Everything built into Anaconda Navigator remains: creating and managing conda environments, installing packages, launching Jupyter Notebooks, and more. Now, however, local AI capabilities sit right alongside them.

The company also said that new features are planned for later this summer, including the ability to deploy and manage multiple inference endpoints. It is also working to expand Anaconda MCP to give AI agents direct, governed access to the conda ecosystem. Anaconda added that Navigator will remain supported for existing users through the end of 2026, but it is urging users to move to Desktop.

The application is available for download on Windows, Mac, and Linux.