Xiaomi is the latest company to release an open-weight AI model: MiMo-V2.5, which it calls a “major step forward in agentic capability and multimodal understanding.”

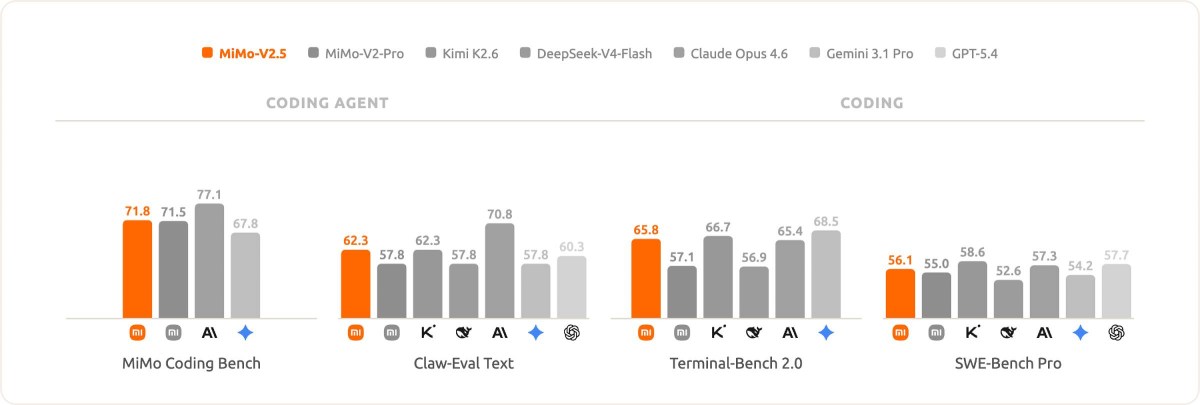

Xiaomi has shared a number of benchmark results comparing MiMo-V2.5 against the likes of the recently released DeepSeek-V4, Kimi K2.6, Claude Opus 4.6 and Gemini 3.1 Pro, as well as Xiaomi’s older MiMo-V2-Pro.

The company claims that MiMo-V2.5 achieved best-in-class performance on its in-house agentic tasks benchmark. On the internal MiMo Coding Bench, the smaller V2.5 model matched the larger V2.5-Pro at half the cost. In other benchmarks that test the model’s image and video understanding, V2.5 is on par with closed-source models, says Xiaomi.

MiMo-V2.5 evaluated on coding and agentic tasks.

The model was trained on 48 trillion tokens and is natively multimodal, with support for text, image and video data. Xiaomi has published two versions: MiMo-V2.5, with 310B total parameters (15B active), and MiMo-V2.5-Pro, with 1.02T total parameters (42B active). Both support a context window of 1 million tokens.

MiMo-V2.5 evaluated on image and video understanding.

You can download the model from Hugging Face and run it yourself, but you will need something like a kitted-out Mac Studio to do so, because consumer GPUs don’t have enough VRAM (no, not even the Nvidia RTX 5090).
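Some back-of-the-envelope arithmetic shows why. The sketch below estimates how much memory the weights alone would occupy at a few common precisions, using the parameter counts Xiaomi published. It is a rough, weights-only estimate under stated assumptions: the precision formats are illustrative (not necessarily how the checkpoints are shipped), and KV cache, activations and runtime overhead are ignored, so real requirements are higher. The 15B/42B “active” figures suggest a mixture-of-experts design, where only a subset of parameters does work per token, but all of them still typically have to be held in memory, which is why the total count is what matters here.

```python
# Rough, weights-only memory estimate for the two MiMo-V2.5 models.
# Assumptions: every parameter is stored at the listed bit width (illustrative
# formats only); KV cache, activations and runtime overhead are ignored.

PARAMS = {
    "MiMo-V2.5": 310e9,        # 310B total parameters
    "MiMo-V2.5-Pro": 1.02e12,  # 1.02T total parameters
}

BITS = {"BF16": 16, "FP8": 8, "4-bit": 4}

RTX_5090_VRAM_GB = 32  # the RTX 5090 ships with 32 GB of VRAM


def weight_memory_gb(num_params: float, bits: int) -> float:
    """Return the storage needed for the weights, in GB (1 GB = 1e9 bytes)."""
    return num_params * bits / 8 / 1e9


for name, n in PARAMS.items():
    for fmt, b in BITS.items():
        gb = weight_memory_gb(n, b)
        ratio = gb / RTX_5090_VRAM_GB
        print(f"{name:14s} {fmt:6s} {gb:7.0f} GB  ({ratio:.1f}x an RTX 5090)")
```

Even aggressively quantized to 4 bits, the smaller model’s weights come to roughly 155 GB, close to five times the 32 GB on an RTX 5090, which is why a machine with a large pool of unified memory is the more realistic home option.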

You can try out Xiaomi MiMo-V2.5 in the AI Studio (which wasn’t loading at the time of writing) or use it via the official API. Or, as mentioned above, download it and run it locally, if you have the means to do so.