The post Local Guardrails for Secrets Security in the Age of AI Coding Assistants appeared first on GitGuardian Blog – Take Control of Your Secrets Security.

Software supply chain security used to feel like a problem that lived elsewhere.

The repository and build system were top of mind. Package registries, continuous integration and continuous delivery pipelines, release automation, cloud platforms, and artifact stores also became the focus of concern. These still matter and need protection, but the attack surface has shifted closer to where developers work every day.

The developer laptop is no longer just the place where code gets written. It’s part of the supply chain.

The security implications are easy to underestimate. A modern workstation touches source code, package managers, cloud accounts, registry tokens, secure shell keys, service accounts, build scripts, artificial intelligence coding assistants, terminals, local caches, and environment files. It’s where credentials are created, copied, tested, logged, and too often forgotten.

Attackers understand this. They are not only looking for a weak production service or a poisoned build step. They’re looking for the access material that lets one system trust another.

We have to update our defense models. Security can’t wait until code reaches a remote repository or a pipeline. By then, a credential may already be in Git history, a model prompt, a local log, a build artifact, or within reach of a package install script.

The control point has to move earlier in the software creation process.

The Common Thread Is Credential Theft

GitGuardian’s recent breach research points to a clear pattern across software supply chain attacks: attackers increasingly target the credentials embedded in developer workflows.

In April 2026, we analyzed three supply chain campaigns that affected npm, PyPI, and Docker Hub over a 48-hour period. The ecosystems and techniques varied, but the goal was consistent. Each campaign focused on stealing useful credentials from developer environments or continuous integration and delivery pipelines.

One compromised npm package used a postinstall hook to steal npm publish tokens, then used that access to publish infected versions of packages the victim could reach. A PyPI campaign harvested secure shell keys, cloud credentials, environment variables, and crypto wallets. Across these campaigns, the attacker’s goal was clearly to collect valid access and use it to reach the next system.

That is what makes the problem so damaging.

Developers Are Attractive Targets

A developer may have access to source control, cloud accounts, package registries, artifact stores, staging environments, incident tools, and internal application programming interfaces. A build runner may hold deployment credentials, package publishing tokens, and access to production-adjacent infrastructure. One exposed token can become a bridge across multiple layers of the delivery process.

That’s why credential exposure is different from many other bugs. An attacker doesn’t always need to exploit a software flaw, maintain persistence, or modify production code. Sometimes, authenticated access is enough. Sometimes, a short-lived foothold on a developer machine can uncover a credential with broader reach.

The GitGuardian 2026 State of Secrets Sprawl report makes the scale of the situation clear. We found over 28.6 million new secrets detected in public GitHub commits in 2025, a 34% year-over-year increase. Internal repositories are roughly six times more likely than public repositories to contain hardcoded credentials. About 28% of incidents originate entirely outside repositories, in collaboration systems such as Slack, Jira, and Confluence.

The repository is no longer the boundary. If a secret can be found on the machine in plaintext, it’s likely to spread elsewhere in plaintext and be usable by anyone who finds it.

The Workstation Now Holds Too Much Context To Ignore

Developer laptops are attractive because they contain context.

They hold source trees, dotfiles, shell history, local environment files, integrated development environment settings, package manager configuration, build artifacts, terminal output, AI agent logs, and temporary debugging notes. Many of these files are invisible during normal review. Many never leave the machine. Some sit in directories that developers rarely inspect.

That makes local exposure difficult to manage with repository-only controls.

A credential can appear in a .env file, get printed into terminal history, land inside a test config, show up in build output, or be copied into an AI prompt during troubleshooting. None of that requires a malicious commit. None of it necessarily triggers a centralized scanner. Yet each moment can create real access risk.

We need, as an industry, to scan the places where credentials collect outside Git. Project workspaces, dotfiles, build output, and agent folders can all store copied tokens, configuration blocks, troubleshooting output, and cached context. Attackers harvest this local data because it can lead directly to valid access.
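To make the idea of scanning outside Git concrete, here is a minimal Python sketch that walks a directory and flags lines that look like credentials. It is a toy illustration, not how ggshield works: the three patterns, the function names `find_candidates` and `scan_tree`, and the size limit are all assumptions chosen for the example, and a real detector covers far more credential types.

```python
import re
from pathlib import Path

# Illustrative patterns only (assumptions for this sketch). A production
# detector recognizes hundreds of credential types and validates findings.
PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_header": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    "generic_assignment": re.compile(
        r"(?i)(?:api[_-]?key|secret|token|password)\w*\s*[=:]\s*['\"]?[^\s'\"]{12,}"
    ),
}

def find_candidates(text):
    """Return (line_number, pattern_name) for each line that looks like a credential."""
    hits = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for name, pattern in PATTERNS.items():
            if pattern.search(line):
                hits.append((lineno, name))
    return hits

def scan_tree(root, max_bytes=1_000_000):
    """Walk a directory and yield (path, line_number, pattern_name) findings."""
    for path in Path(root).rglob("*"):
        try:
            # Skip directories and very large files to keep the walk cheap.
            if not path.is_file() or path.stat().st_size > max_bytes:
                continue
            text = path.read_text(encoding="utf-8", errors="ignore")
        except OSError:
            continue  # unreadable files are skipped, not fatal
        for lineno, name in find_candidates(text):
            yield path, lineno, name
```

Pointing `scan_tree` at a project workspace or a dotfile directory would list candidate lines for review. The sketch reports locations only and never prints the matched value, so its own output does not become another copy of the secret.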

The Shai-Hulud data gives that concern weight. Across 6,943 compromised machines, the analysis found 33,185 unique credentials, with at least 3,760 still valid when first checked. That’s not a theoretical workstation problem. It’s a practical attacker workflow.

Compromise the machine. Search the context. Extract the access. Move on.

The workstation has become a security boundary because so many tools assume the local environment is trusted. Package managers run install scripts. Extensions read project files. Terminals expose environment variables. Local automation touches real systems. AI agents can read files, run commands, and summarize outputs.

Each of those actions may be useful, but each can also become a path for accidental exposure or malicious instruction.

AI-assisted development adds a newer layer to the same problem. AI coding tools now work closer to the developer’s files, terminal, editor, and environment variables. A prompt can contain a credential. A tool call can read a sensitive file. A generated command can print access material into logs or model context. An agent can combine harmless-looking steps into a risky action.

The exposure surface is no longer just human typing plus code review. It now includes the interaction between humans, local tools, automated agents, and external services. And as we found in our report, more people are using coding assistants, and many of those who let the agent co-author their commits are leaking twice as many secrets per commit.

Security controls have to meet the workflow where it actually happens.

Earlier Checkpoints Reduce Damage

Traditional supply chain controls still matter. Teams still need source control protections, dependency scanning, secure continuous integration and delivery, artifact integrity, release controls, and production deployment guardrails.

But these controls often fire after the risky moment has already happened.

A developer may have created a local file with a credential. An AI assistant may have received sensitive context. A package install script may have read environment variables. A token may have entered local Git history before it ever reaches a remote repository.

Rotating a credential after it reaches a shared repository can become a full incident response exercise. Someone has to identify ownership, revoke the credential, issue a replacement, check usage, test dependent applications, review access, clean history where possible, and document the event. That work is necessary, but it is expensive.

Catching the same issue while the developer is still editing a file is simpler. Remove it. Replace it with a safe reference. Keep moving.
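One common reading of "replace it with a safe reference" is that the source file names the secret and the value is resolved at run time. The Python sketch below shows that shape under stated assumptions: `get_secret`, `build_headers`, and the `PAYMENTS_API_KEY` variable name are hypothetical, and the environment is used only as the simplest possible stand-in for a secrets manager.

```python
import os

def get_secret(name, environ=None):
    """Look up a credential by name at run time instead of embedding its value."""
    environ = os.environ if environ is None else environ
    value = environ.get(name)
    if not value:
        raise RuntimeError(f"{name} is not set; load it from your secrets manager or shell")
    return value

# Before: a literal key assigned in the source file, which then lands in Git history.
# After: the file only names the secret; the value lives outside the working tree.
# PAYMENTS_API_KEY is a hypothetical variable name used for illustration.
def build_headers(environ=None):
    """Build request headers using a credential resolved by reference."""
    return {"Authorization": f"Bearer {get_secret('PAYMENTS_API_KEY', environ)}"}
```

Failing loudly when the variable is missing is a deliberate choice in this sketch: a clear error at startup is easier to act on than a silent fallback to a hardcoded default.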

The strongest model treats credential detection as a continuous developer-side control, not an occasional cleanup task. The tool has to sit where developers already work: in the editor, in Git hooks, in the terminal, and inside AI coding workflows.

Protecting Your Developers’ Secrets

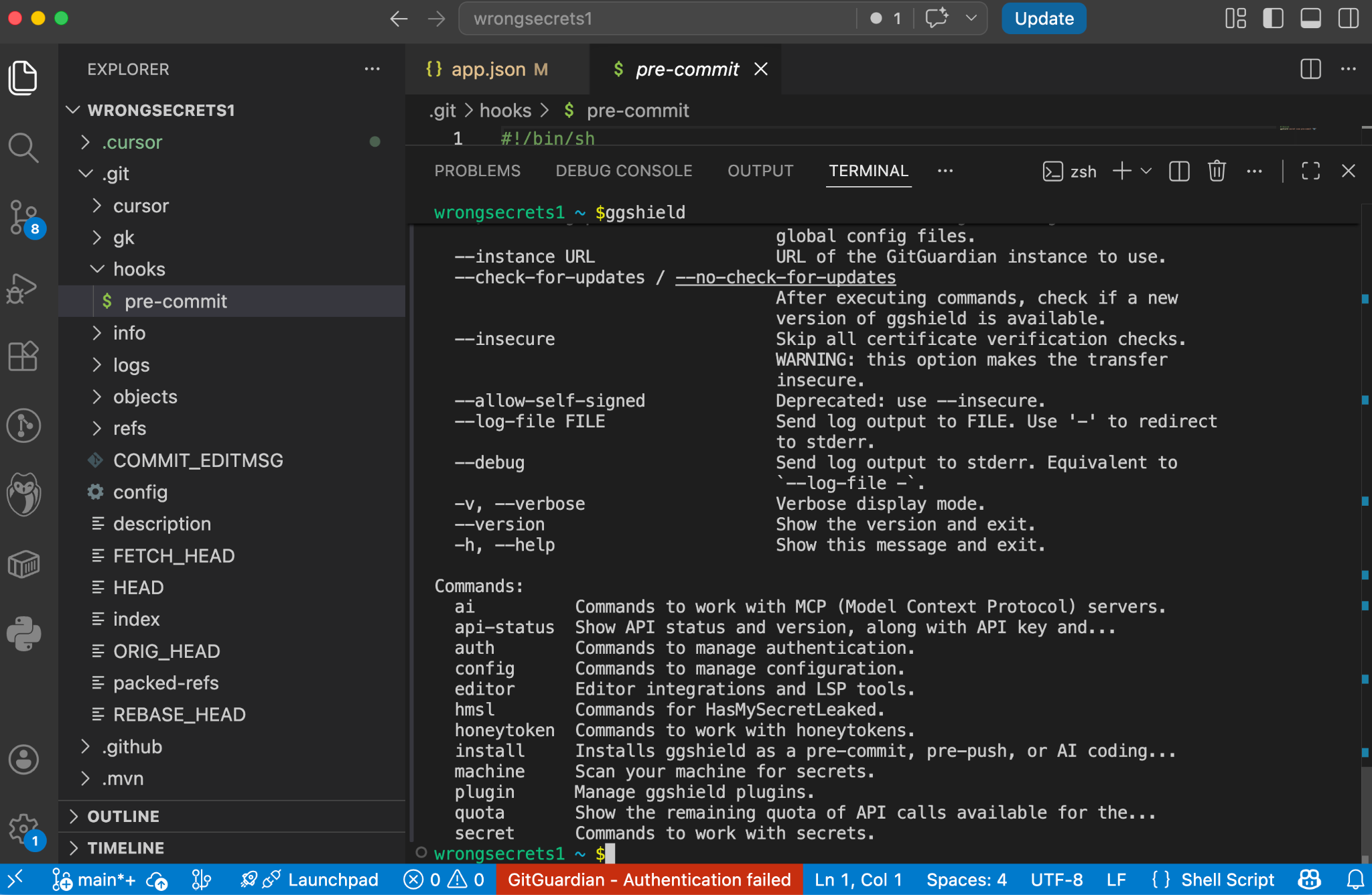

ggshield is the GitGuardian command-line interface for scanning developer workflows. You can run ggshield locally or in continuous integration environments, where it provides guardrails across the software development lifecycle and detects hundreds of types of hardcoded credentials.

A local scan catches problems before code moves into shared infrastructure. A continuous integration scan catches problems after code leaves the laptop. A pre-receive hook can prevent a secret from being pushed to a shared repo or system. Using the same tooling across these points gives teams consistency without forcing developers into a separate security process.

Git Hooks Add Another Layer Of Protection

Using ggshield pre-commit hooks means a scan runs before Git creates a commit. Teams can configure it through the pre-commit framework, install it locally for specific repositories, or install it globally across current and future repositories on a developer workstation.

The global option is important. Not every leak happens in the main codebase. Temporary repositories, test folders, side projects, cloned examples, and one-off experiments all create exposure. A repository-by-repository rollout leaves gaps. A global hook gives the developer machine a broader default.

A pre-push hook catches a later moment. It runs before code leaves the machine for a remote repository. GitGuardian documents local, framework-based, and global installation modes for this control as well. Together, pre-commit and pre-push hooks create two useful gates: one before local history becomes durable, and one before code reaches shared infrastructure.
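To show what a pre-commit gate does mechanically, here is a rough Python sketch of a hook that inspects staged changes and refuses the commit when an added line looks like a credential. This is not ggshield’s implementation: the single regular expression and the helper names `added_lines`, `check_diff`, and `main` are assumptions for illustration. The only external behavior relied on is that `git diff --cached` prints staged changes and that Git aborts a commit when the pre-commit hook exits non-zero.

```python
import re
import subprocess
import sys

# One illustrative pattern (an assumption for this sketch, not a real detection engine).
SECRET_RE = re.compile(
    r"(?i)(?:api[_-]?key|secret|token|password)\w*\s*[=:]\s*['\"]?[^\s'\"]{12,}"
)

def added_lines(diff_text):
    """Yield (file, text) for each line that a unified diff adds."""
    current = None
    for line in diff_text.splitlines():
        if line.startswith("+++ "):
            # File header for the new side of the diff, e.g. "+++ b/app.py".
            current = line[4:].removeprefix("b/")
        elif line.startswith("+"):
            yield current, line[1:]

def check_diff(diff_text):
    """Return the (file, line) pairs that should block the commit."""
    return [(f, text) for f, text in added_lines(diff_text) if SECRET_RE.search(text)]

def main():
    """Scan staged changes; a non-zero return value is meant to abort the commit."""
    diff = subprocess.run(
        ["git", "diff", "--cached", "--unified=0"],
        capture_output=True, text=True, check=True,
    ).stdout
    findings = check_diff(diff)
    for file, _ in findings:
        # Report the location only, so the hook output does not repeat the secret.
        print(f"possible secret staged in {file}", file=sys.stderr)
    return 1 if findings else 0

# A hook script saved as .git/hooks/pre-commit would end with: sys.exit(main())
```

The same `check_diff` logic could sit behind a pre-push hook by feeding it the diff of the commits about to be pushed instead of the staged diff, which is the "two gates" arrangement described above.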

Finding Secrets On Save

GitGuardian’s VS Code extension uses the bundled ggshield command-line interface to scan code as developers write or modify it. A scan runs automatically when a file is saved. Findings are shown immediately inside the editor through code annotations, status bar warnings, and the Problems panel. This extension also works with Cursor, Antigravity, and Windsurf.

Security controls fail when they are too late, too noisy, or too far away from the mistake. A good local control gives feedback in context. It explains what happened. It helps the developer fix the issue before it becomes a ticket, a broken build, or an incident.

AI coding tools deserve special attention because they change where leakage can occur.

An AI workflow may expose sensitive material before code exists as a file. A developer might paste a credential into a prompt while debugging. An agent might read a local configuration file. A tool call might execute a command that prints environment variables. Output from that command might then move into the model context or local logs.

That is a different path than traditional source code leakage.

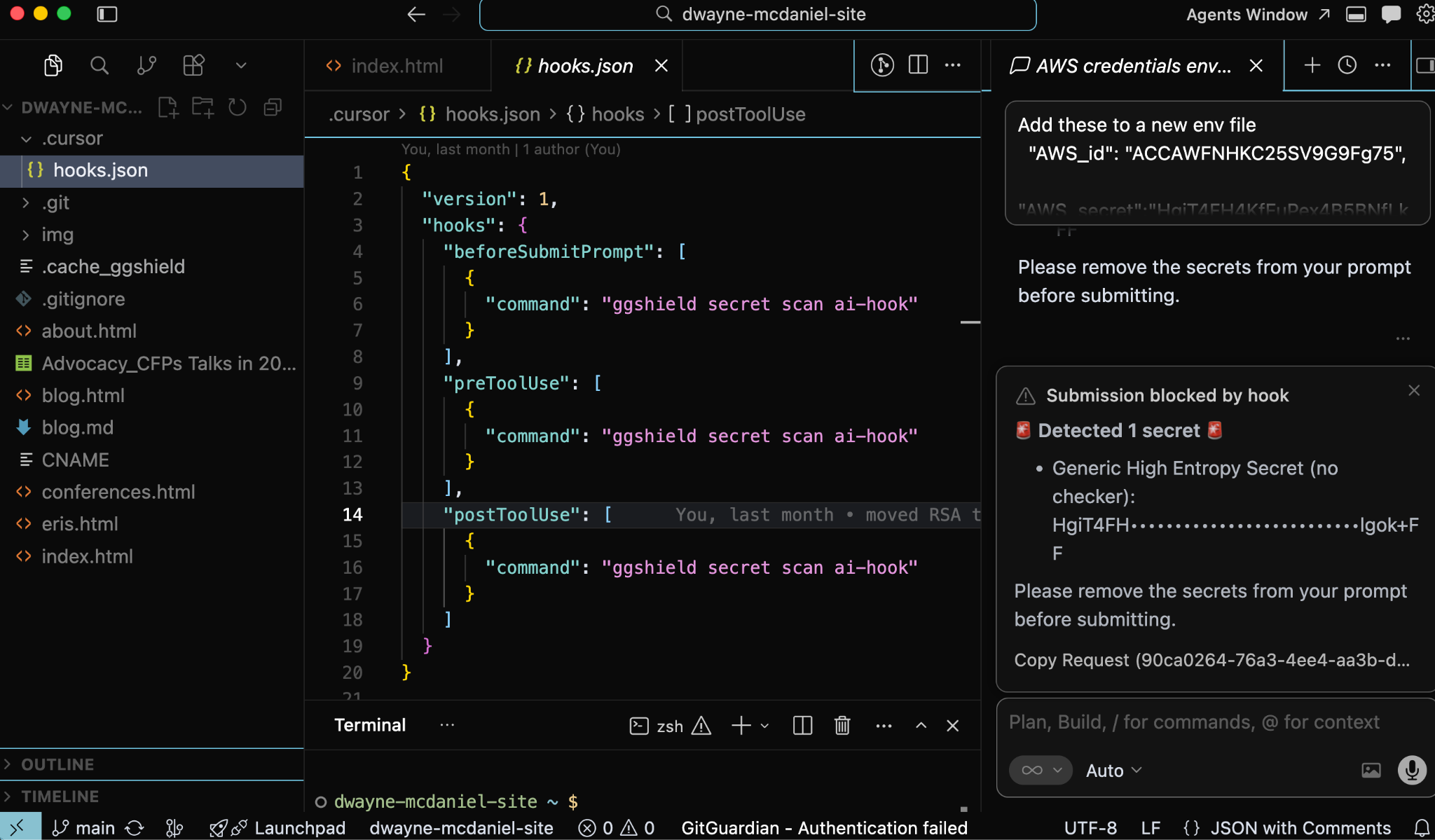

GitGuardian’s AI coding tools integration addresses this by placing controls inside the hook systems of tools such as Cursor, Claude Code, and VS Code with GitHub Copilot. The integration scans three phases: prompt submission, pre-tool use, and post-tool use.

Prompt submission scanning checks content before it reaches the model and blocks the prompt when credentials are found. Pre-tool use scanning checks commands, file reads, and Model Context Protocol calls before execution, blocking risky actions before they run. Post-tool use scanning checks outputs after execution and sends a desktop notification when credentials appear.
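The three phases differ mainly in what the control can still do when it finds something: the first two can block, the third can only alert. The Python sketch below models that policy under stated assumptions. The stage names, the `Decision` type, the `handle_event` function, and the single pattern are all hypothetical and do not reflect the actual hook payloads of Cursor, Claude Code, or Copilot.

```python
import re
from dataclasses import dataclass

# One illustrative pattern; stage names and the Decision type are hypothetical.
SECRET_RE = re.compile(r"\bAKIA[0-9A-Z]{16}\b|-----BEGIN [A-Z ]*PRIVATE KEY-----")

@dataclass
class Decision:
    allow: bool
    notify: bool = False
    reason: str = ""

def handle_event(stage, content):
    """Apply the policy for one stage of an agent interaction."""
    found = bool(SECRET_RE.search(content))
    if stage == "prompt_submit":
        # Block before the text reaches the model.
        return Decision(allow=not found, reason="secret in prompt" if found else "")
    if stage == "pre_tool_use":
        # Block before the command, file read, or MCP call executes.
        return Decision(allow=not found, reason="secret in tool input" if found else "")
    if stage == "post_tool_use":
        # The action already ran, so this stage can only raise an alert.
        return Decision(allow=True, notify=found, reason="secret in tool output" if found else "")
    raise ValueError(f"unknown stage: {stage}")
```

For example, `handle_event("post_tool_use", output)` never returns `allow=False` in this sketch, which mirrors the description above: once a tool has run, the remaining useful action is to tell the developer so the credential can be rotated.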

That structure matches how agentic tools operate.

The risky moment may be a prompt. It may be a file read. It may be a shell command. It may be the output of a tool the developer didn’t manually inspect. A repository-only control sees too little of this flow. A hook inside the AI workflow can stop exposure at the handoff point.

The editor catches the issue while the developer writes. AI hooks catch sensitive material before prompts, tool calls, or outputs move it somewhere risky. Git hooks catch credentials before they enter commit history or leave the laptop. Continuous integration and server-side controls provide backup once code reaches shared systems.

Layered Prevention Without Forcing A Separate Workflow

Developer environments are now connected, automated, and increasingly assisted by tools that can act on local context. Security has to account for that reality. Waiting for a remote scan is too late for credential exposure.

The better model is simple: find credentials earlier, block them closer to where they appear, and reduce the chance that a developer’s laptop becomes the easiest path into the software supply chain.

GitGuardian’s ggshield, IDE extensions, AI hooks, and Git hooks all point toward that model. They bring detection into the places developers already use, rather than asking developers to leave their workflow for security. They reduce the time between mistakes and feedback. They give teams a consistent detection engine across local development, AI-assisted coding, Git workflows, and automation.

The supply chain now includes the workstation.

Treat it that way.

*** This is a Security Bloggers Network syndicated blog from GitGuardian Blog – Take Control of Your Secrets Security authored by Dwayne McDaniel. Read the original post at: https://blog.gitguardian.com/local-guardrails-for-secrets-security/