When it comes to software developers, there are a number of distinct types. For example, there is the extroverted, chatty type, who’s always out there sharing the latest and greatest libraries and projects with everyone, and who is very much into bouncing ideas off others, regardless of whether they know what you’re talking about. Then there is the introverted loner, who prefers to tackle programming challenges by bouncing things around inside their own mind and going on long walks to mull things over before committing to anything significant.

This leads to interesting scenarios when it comes to management-enforced ‘optimization’ strategies, like Pair Programming. This approach involves two developers sharing the same computer and keyboard, theoretically doubling the effective output by some kind of metric, but realistically often leaving at least one side feeling rather miserable and disconnected, unless you put two of the chatty types together.

As a certified introverted loner developer, I find that the idea of using an LLM chatbot as a coding assistant naturally triggers unpleasant flashbacks to hours of forced, awkward pair ‘programming’. Then again, maybe using an LLM chatbot could be more pleasant, because you get to skip the whole awkward socializing bit. To give it a shake, I put together a little experiment to see whether LLM-based coding assistants are something that I could come to appreciate, unlike pair programming.

Setting Expectations

Any good experimental setup features clear goals and parameters that define what will be tested and what the expectations are. Obviously I come into this whole experiment from a somewhat negative angle, so to keep it simple I’ll be picking two fairly easy scenarios for the LLM to assist with:

- C++ embedded coding for STM32 and CMSIS.

- Ada network development.

These are topics that I’m fairly familiar and comfortable with, so I know what questions I have here and roughly what I’m expecting as output. For the most part I’ll be treating the chatbot the way I would use StackOverflow or nag people on IRC, my main concern being that it’ll expect pleasantries from me instead of brutal and cold professionalism. Ideally it’ll be a step above me hurling profanities at a search engine for clearly and willfully misunderstanding what I’m looking for.

My expectation is that it’ll have some answers to my questions about how to handle certain aspects of the tasks, and that it may even produce half-way usable code that I can fairly easily understand and double-check against my usual documentation references.

That just leaves one big question: which LLM chatbot to pick, and how on earth any of it is supposed to work, since I have avoided these things like the proverbial plague.

Meeting the Team

Although I’m aware that everyone who’s into LLM-assisted programming seems to like promoting LLMs like Claude, I’d ideally not be signing up for yet another service. That pretty much just leaves GitHub Copilot, which I already have access to. I have written about this particular LLM chatbot quite a bit since it was launched, with my generally negative feelings towards these tools increasingly backed up by research.

Biased I may be, but to be a true scientist you have to be able to set aside your biases for an experiment and accept reality in the face of new evidence. Thus, with all biases and doubts firmly pushed aside in favor of the aforementioned cold professionalism, let’s get down to brass tacks.

Micro Code

My pet project for STM32-related programming has for a while been my Nodate project, which uses the CMSIS standard headers and the macros defined therein to write everything from start-up code to running the Dhrystone benchmark and deciphering the various flavors of real-time clocks.

Much of this work involves digging through datasheets, reference manuals and piles of reference code, as well as throwing queries at search engines to see what potentially useful results percolate out of that particular resource. Coming across the trials and tribulations of fellow STM32 developers in forum threads and the like can be both heartening and disheartening, but it all tends to condense into something that you can use to make progress on the project.

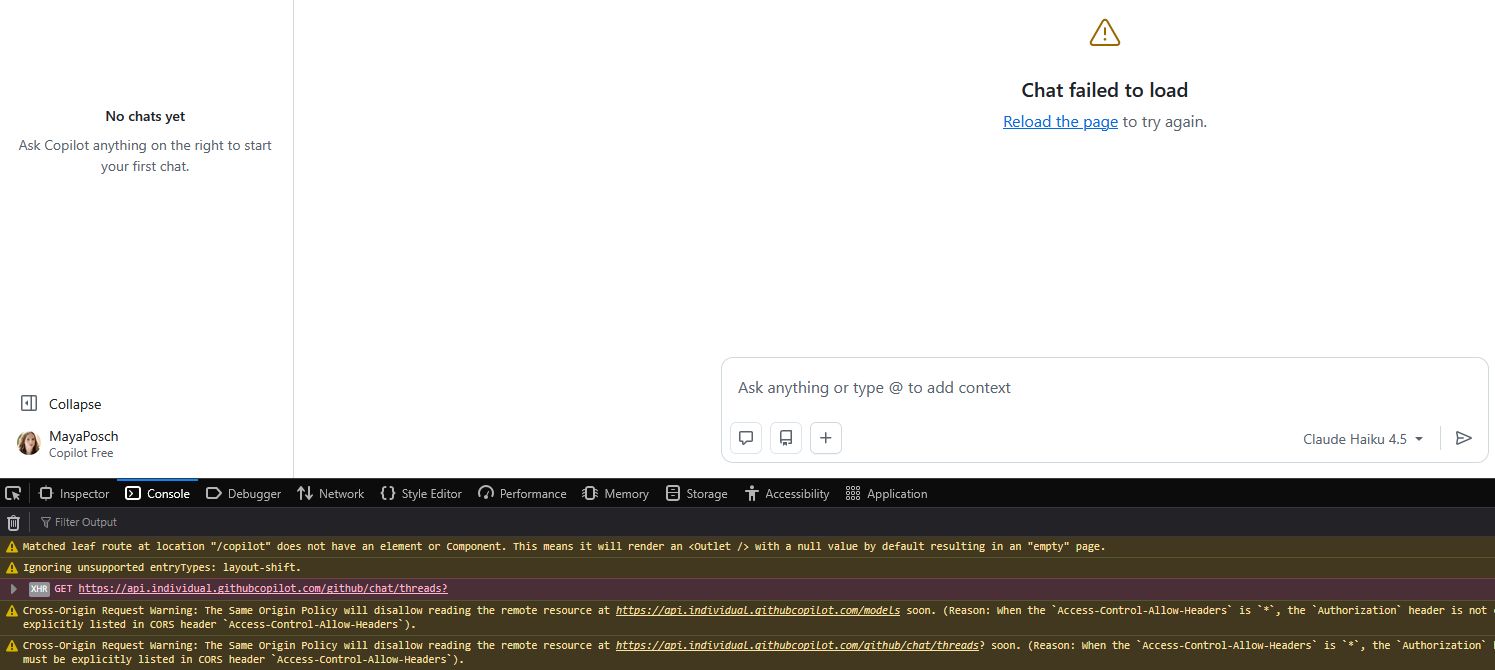

Perhaps ironically, the moment I tried to use the chatbot in the browser I got an error, with the GitHub status page indicating that some of their systems were down, including those for Copilot.

This raises another interesting point: regardless of whether an LLM chatbot makes for a good programming partner, a human partner does not generally keel over at random or become unresponsive in the middle of doing some work together. If they do, however, that’s absolutely a medical emergency and you should call 911, 112, or your local equivalent emergency number stat.

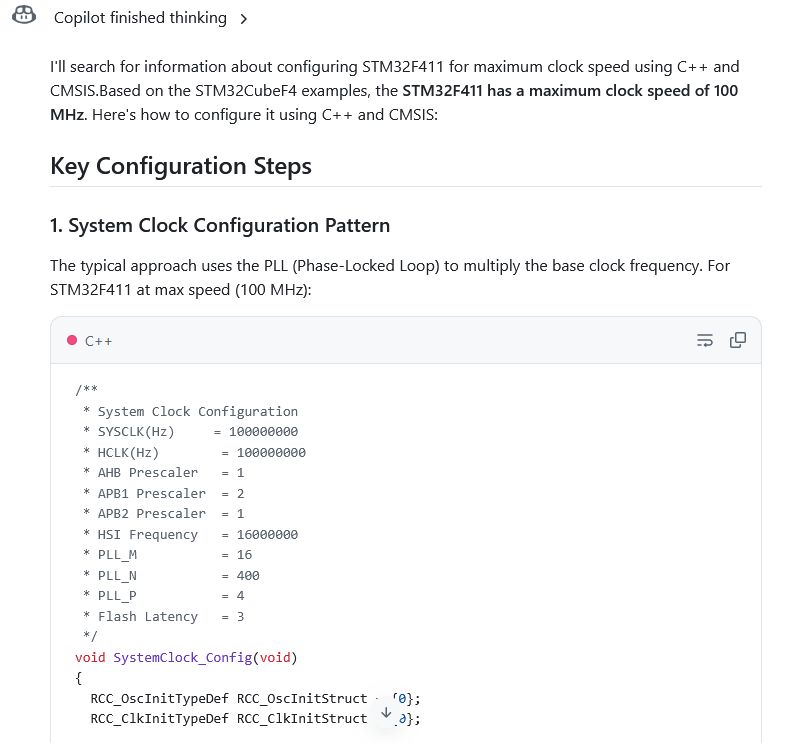

Anyway, after waiting for services to be restored, I was eventually able to ask the chatbot how to properly set the clock speed on an STM32F411 MCU, having previously been tripped up by the need to set the regulator voltage scaling (VOS) in the power control register (PWR_CR). This is a power-saving feature that has to be adjusted in order to hit certain, clearly power-wasting, clock speeds.

Shockingly, the chatbot happily spits out ST HAL code and ignores the ‘CMSIS’ bit, although you could perhaps argue that the ST HAL uses CMSIS internally. But then so does Arduino code for many MCUs.

To its credit, it does mention in a ‘Key CMSIS Requirements’ list that you need to set PWR_REGULATOR_VOLTAGE_SCALE1, yet without any further detail on where to set it. There is also the tiny detail that this isn’t even the CMSIS macro, which would be PWR_CR_VOS to set both bits for the full range.
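For contrast, below is a minimal host-side sketch of what the plain CMSIS-style register write could look like. It is not the code from Nodate, and the helper name `pwr_set_vos` is hypothetical. It assumes the STM32F411 layout in which VOS[1:0] sits at bits 15:14 of PWR_CR and the PWR clock enable (PWREN) is bit 28 of RCC_APB1ENR; the two registers are modeled as plain variables so the sketch compiles on a PC. On real hardware you would include the CMSIS device header (e.g. `stm32f4xx.h`) and write to `RCC->APB1ENR` and `PWR->CR` instead, and the remaining clock setup (PLL, flash wait states) is omitted. The reference manual also restricts when a VOS change takes effect relative to the PLL, so check it before relying on any particular ordering.

```c
#include <assert.h>
#include <stdint.h>

/* Fallback definitions, used only when no CMSIS device header is included.
 * Bit positions are assumed from the STM32F411 reference manual. */
#ifndef PWR_CR_VOS
#define PWR_CR_VOS_Pos    14U
#define PWR_CR_VOS        (0x3UL << PWR_CR_VOS_Pos) /* both VOS bits: 0x0000C000 */
#define PWR_CR_VOS_0      (0x1UL << PWR_CR_VOS_Pos)
#define PWR_CR_VOS_1      (0x2UL << PWR_CR_VOS_Pos)
#endif
#ifndef RCC_APB1ENR_PWREN
#define RCC_APB1ENR_PWREN (0x1UL << 28U)
#endif

/* Host-side stand-ins for RCC->APB1ENR and PWR->CR. The starting value of
 * mock_pwr_cr is arbitrary (VOS = 0b01 plus unrelated bit 0), chosen only to
 * show that the read-modify-write below leaves other bits untouched. */
static volatile uint32_t mock_rcc_apb1enr = 0;
static volatile uint32_t mock_pwr_cr = 0x00004001u;

/* Hypothetical helper: program VOS[1:0] with the given pre-shifted bits.
 * Passing PWR_CR_VOS sets both bits, i.e. voltage Scale 1. */
static void pwr_set_vos(uint32_t vos_bits)
{
    /* Only values inside the VOS field are meaningful here. */
    assert((vos_bits & ~PWR_CR_VOS) == 0);

    /* The PWR peripheral clock has to be on before PWR_CR can be written. */
    mock_rcc_apb1enr |= RCC_APB1ENR_PWREN;

    /* Clear the VOS field, then set the requested bits; other bits are kept. */
    mock_pwr_cr = (mock_pwr_cr & ~PWR_CR_VOS) | vos_bits;
}
```

Calling `pwr_set_vos(PWR_CR_VOS)` selects Scale 1 with a single header macro, which is the point being made above: no HAL constant and no copied struct definitions are needed, just the register name and its bit mask.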

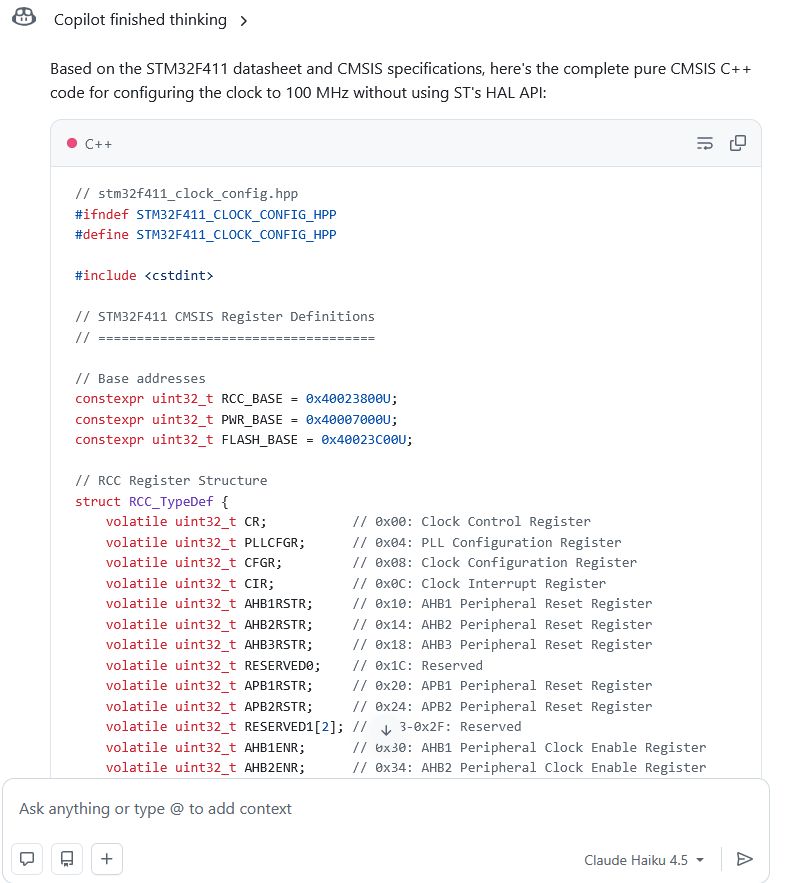

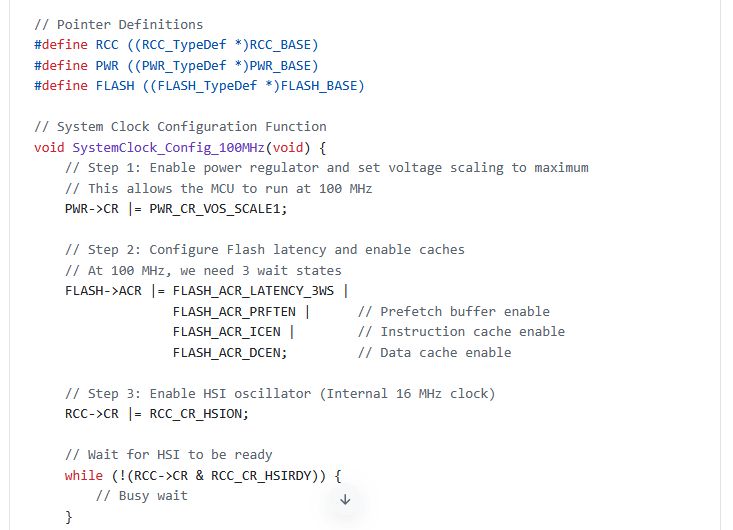

Fortunately we can do the digital equivalent of smacking the chatbot upside the head and telling it to do the thing we asked it to do, namely to provide the actual CMSIS version. Doing so results in another gobsmacking moment, as it happily spits out code that doesn’t bother to include the CMSIS headers, but simply copies every single used struct definition and more into the code as well, bloating it up massively:

This is of course very annoying when it should have used the #define macros, and it clearly can generate include statements based on its inclusion of

I’m not entirely sure where it got the PWR_CR_VOS_SCALE1 thing from, as asking a friendly search engine turns up just a handful of results, one of which is for an STM32F407, which runs at 168 MHz max. That is hilarious in light of the comments right above the code. It makes you wonder what example code it pilfered from.

At this point I could probably continue to pick at this generated code, but suffice it to say that my confidence in its generated code and overall output hovers somewhere between ‘low’ and ‘bottom of a black hole’. I’m more than happy to flip this particular table, rage quit, and hang on to what remains of my sanity.

Findings

Although I had intended to also have some fun with my buddy Copilot porting some of the C++ networking code in my NymphRPC remote procedure call library to Ada, I found my nerves sufficiently frayed, and the bouts of near-hysterical laughter out of sheer disbelief worrisome enough, to abort this attempt.

I also don’t feel that it would do much more than hammer home the point that GitHub Copilot, at the very least, doesn’t make for a good pair programming partner, nor a programming tool, a search engine, or much of anything. When the only thing it got me was having to check its output for very obvious errors and shaking my head in disbelief when I found them, it beggars belief that anyone would voluntarily use it.

When we also have reports that using such LLM chatbots is likely to degrade human cognition and critical thinking skills, not to mention the worrisome prospect of cognitive surrender, it’s probably best to avoid these chatbots altogether.

I also generally agree with Advait Sarkar et al. in their 2022 paper that you cannot really do pair programming as such with an LLM chatbot, but that it offers something different. Something that’s also very different from using a search engine and digesting various articles and forum posts, along with reference material, into something new.

Thus, after using an LLM chatbot for some coding ‘assistance’, I’ll be happily scurrying back to my boring references and yelling invectives at search engines.