Apple plans to rework Siri from a voice-command shortcut into a persistent AI layer that runs through search, the keyboard, third-party apps, and the Dynamic Island. According to Bloomberg reporting relayed by MacRumors this week, the iOS 27 Siri redesign will let the assistant answer open-ended questions drawing on web results, read what’s on your screen, pull context from messages and photos, and complete multi-step tasks across apps. By all accounts, it is the largest change to the assistant since it launched.

That is the claim. Here is what’s actually reported.

The redesigned Siri, internally codenamed Campos, is planned as the headline feature of iOS 27, iPadOS 27, and macOS 27, with Apple otherwise focusing on bug fixes and performance improvements, MacRumors reported four months ago. Apple plans to introduce the new Siri at WWDC on June 8, less than four weeks from now, after which iOS 27 developer testing begins. The overhaul follows Apple’s acknowledged failure to ship the AI-powered Siri enhancements it promised for iOS 18 in 2024, features that were pulled because they did not work reliably enough, as Ars Technica confirmed four months ago.

What makes this worth examining before WWDC is the gap it is trying to close: not just against ChatGPT, but against the version of Siri Apple already promised and could not ship.

What’s newly reported this week

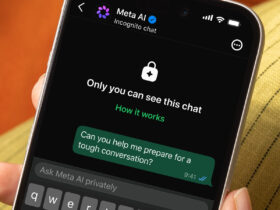

The May 12 Bloomberg report relayed by MacRumors adds several UI details that hadn’t surfaced before. A new “Search or Ask” interface, triggered by swiping down from the top center of any screen, is expected to replace Spotlight as the primary search entry point. Siri will also live in the Dynamic Island: a pill-shaped animation appears while a request processes, then expands into a translucent results card. That card can be swiped into a full conversation view, populated with contextual cards for weather, notes, and upcoming appointments.

The dedicated Siri app is also newly confirmed this week, a direct reversal of Bloomberg’s own earlier position that no such app was planned, per MacRumors seven weeks ago. Apple also plans to let users set third-party AI services as the default for Apple Intelligence features including Writing Tools and Image Playground, a loosening of platform control first reported this week.

The earlier reporting from January and March established the foundation: Campos as the internal codename, the custom Gemini-based model, cross-app actions, onscreen awareness, and integration into Apple’s first-party apps. This week’s report layers the visual design and specific UI patterns on top.

What the brand new Siri can do this the present one can’t

The most consequential changes are functional, not cosmetic. The new Siri is being rebuilt on a large language model foundation, specifically a custom model based on Google’s Gemini, confirmed by a multi-year partnership Apple announced four months ago.

Apple did not disclose the financial terms, but Bloomberg’s Mark Gurman reported Apple would pay Google about $1 billion a year for model access, according to Ars Technica. Queries will run on Apple’s Private Cloud Compute servers, keeping user data off Google’s infrastructure. The new Siri is expected to handle open-ended questions that draw on web results and return visually rich responses with bullet points and large images, MacRumors reported this week. It will also analyze uploaded documents and photos, summarize content from within Photos, Mail, Messages, and Music, and read what’s currently on the user’s screen to give context-aware answers, per MacRumors’ earlier reporting.

The bigger shift is that Siri is meant to act, not just answer. The assistant is expected to process multiple actions in a single request: asking it to turn off the bedroom lights and adjust the thermostat would be handled as one command rather than two, 9to5Mac noted six weeks ago. ChatGPT and Gemini can answer questions about smart home devices. Neither can control them from inside the OS without a separate app handoff.

No standalone chatbot has simultaneous access to what’s on your screen, your personal app data, and the ability to take action across the operating system. That combination, LLM capability plus system-level access, is the structural argument for why the iOS 27 Siri redesign is something different from a smarter voice assistant.

Where the iOS 27 Siri chat interface appears

Because the new Siri is a system layer rather than a single feature, it surfaces in multiple places at once. Understanding where matters for judging whether this represents a genuine behavioral shift for iPhone users.

System-wide presence

The “Search or Ask” interface replaces Spotlight as the primary search entry point and is expected to show more advanced results and pull deeper data from within apps, MacRumors reported this week. Siri Suggestions will also gain greater access to personal user data to surface more relevant prompts, per MacRumors’ March reporting. Apple is reportedly testing “Ask Siri” buttons in third-party app menus, letting users send app content directly to Siri alongside a request, and a “Write with Siri” option in the iOS keyboard, 9to5Mac reported six weeks ago.

The Dynamic Island is where the redesign becomes most visible. When Siri is activated by wake word or side button, a pill-shaped animation appears while it processes a request, then expands into a translucent results card. Longer requests complete in the background while the user continues using the phone normally, per 9to5Mac.

The dedicated Siri app on iPhone

For the first time, Siri will exist as a standalone Home Screen app. The main interface will display prior conversations as a grid of rounded rectangles with text previews, a search bar, and a “+” button for new conversations. Users will be able to pin favorite chats, save older conversations, and search across interactions, 9to5Mac reported. The conversation view resembles a Messages thread: chat bubbles, a text entry field, a voice toggle, and the ability to upload documents and photos for analysis, per MacRumors’ March reporting. Swiping on a Dynamic Island results card opens this same conversation mode, populated with those contextual cards for weather, notes, and appointments.

The two-track design, ambient system presence plus a persistent app, puts Siri in more entry points than before. Whether that changes how people actually use their phones depends on whether the underlying capability earns sustained use, which is a separate question from whether the interface is well designed.

The iOS 27 redesign also reflects an architecture shift that faces outward. Apple is no longer insisting Siri handle everything itself.

The existing ChatGPT integration will be extended through an expanded “Extensions” system to support Claude, Gemini, and other third-party models. Users will be able to switch between AI providers directly from the system search bar, with Siri as the default, Bloomberg reported seven weeks ago. Apple also plans to let users set third-party AI services as the default for Apple Intelligence features including Writing Tools, loosening platform control the company has historically kept tight, MacRumors reported this week.

The user-facing consequence is that the choice of AI model becomes a settings decision rather than something Apple decides for you. Siri remains the routing layer, the thing you invoke first, but it no longer has to be the thing that answers.

On privacy, Apple has drawn a clear distinction: running Gemini on Private Cloud Compute means queries do not reach Google’s systems directly. The company has also stated its longer-term goal is to replace third-party models with its own, suggesting the current Gemini arrangement is a means to an end rather than a settled strategy, as Ars Technica noted four months ago. Whether Apple holds its default position over time will depend on whether Siri’s own capabilities keep pace with its rivals.

What we still don’t know ahead of WWDC

Much of the reporting above describes features Apple is testing or planning. The June 8 keynote will be the first moment Apple has to demonstrate these capabilities working in practice, and several important questions remain open.

- Which specific features ship with iOS 27’s initial release versus later point updates has not been confirmed. Apple used staggered rollouts for Apple Intelligence in iOS 18 and iOS 26; current reporting does not address whether the same pattern applies here.

- Hardware compatibility for the Dynamic Island-centric interface has not been addressed in any reporting. Users on older iPhones without the Dynamic Island may get a meaningfully different experience, but no details have surfaced on what that looks like.

- Developer API access (how third-party apps integrate with the new Siri, what permissions are required, how user consent works) remains largely unaddressed.

- The claim that the new Siri will compete with ChatGPT and Claude is Apple’s framing, relayed by reporting. No independent benchmarking or live demo evidence exists yet.

The credibility test

Apple heads into WWDC carrying real credibility debt. The personal context, onscreen awareness, and cross-app action features it announced for iOS 18 in 2024 were pulled because they did not work reliably enough, as Ars Technica confirmed four months ago. Those same categories of capability are now central to the iOS 27 pitch, per 9to5Mac.

The keynote should be evaluated on specific proof points. Does Apple demonstrate onscreen awareness working live, not in a scripted video? Do cross-app actions execute without visible failures? Are the features shown confirmed for day-one release, or described as “coming later”? These distinctions matter more than the design of the app or the length of the feature list.

If the new Siri ships as described, it will offer something no standalone chatbot currently does: LLM-level capability combined with system-level access to personal data, onscreen context, and OS actions, working across search, the keyboard, app menus, and a persistent app. If it does not ship complete, or ships and underperforms, the window for Apple to reestablish Siri as a serious AI assistant gets considerably narrower.

The ambition is clear from the reporting. What June 8 will reveal is whether any of it actually works.