May 14, 2026

Story

Embedded software development faces many challenges. Teams are under pressure to build increasingly sophisticated systems in less time. Many of these systems exhibit more complex and less predictable behavior because they include features that harness machine learning and artificial intelligence (AI). But unlike their counterparts in enterprise software, embedded systems must meet stringent safety, security, and reliability requirements.

For that reason, embedded teams tend to approach emerging practices such as AI-assisted development with caution. Concepts like “vibe coding,” where developers iteratively prompt AI to generate code, may be gaining attention, but they are met with understandable skepticism in safety-critical environments.

Research has repeatedly shown that the later a defect is discovered, the more expensive it is to fix. For embedded systems, this challenge is compounded by increasing software complexity. The ability to update devices after shipment through over-the-air (OTA) updates has become essential for addressing defects and security vulnerabilities, but the primary focus for embedded teams remains on preventing these issues earlier in the lifecycle. This is driving greater emphasis on development and testing practices that identify and resolve problems before deployment, after which the cost, risk, and impact are significantly higher.

A major element in reconciling the seemingly disparate needs for development speed, flexibility, and safety in embedded systems is to adopt a shift-left strategy based on continuous-integration practices. This strategy promotes testing and verification as early as possible in the flow. Developers in this environment do not just write code. They also build unit tests that check whether modules fulfil their goals. A focus on early integration reduces the number of bugs found late in the cycle.

The greater focus on testing gives embedded developers an opportunity to exploit the productivity improvements that generative AI brings. Practices such as vibe coding, in which programmers express and tune their requirements in successive prompts, have captured much of the publicity around AI code generation. Developers in the embedded space will naturally be skeptical of approaches like this. But goal-driven AI can be a powerful tool for accelerating the generation of correct code.

The main challenge developers face with AI coding assistants is not simply that the generated code may be incorrect. That risk is already mitigated through established practices such as unit testing and review, much as it is for code written by less experienced developers. The real challenge is scale. AI can generate large volumes of code very quickly, and validating that output becomes significantly more demanding.

AI-generated code often looks correct but still requires rework. More than 70% of developers report rewriting or refactoring AI-generated code before production use. These gaps are more critical in embedded systems, where undetected defects can affect safety and reliability.

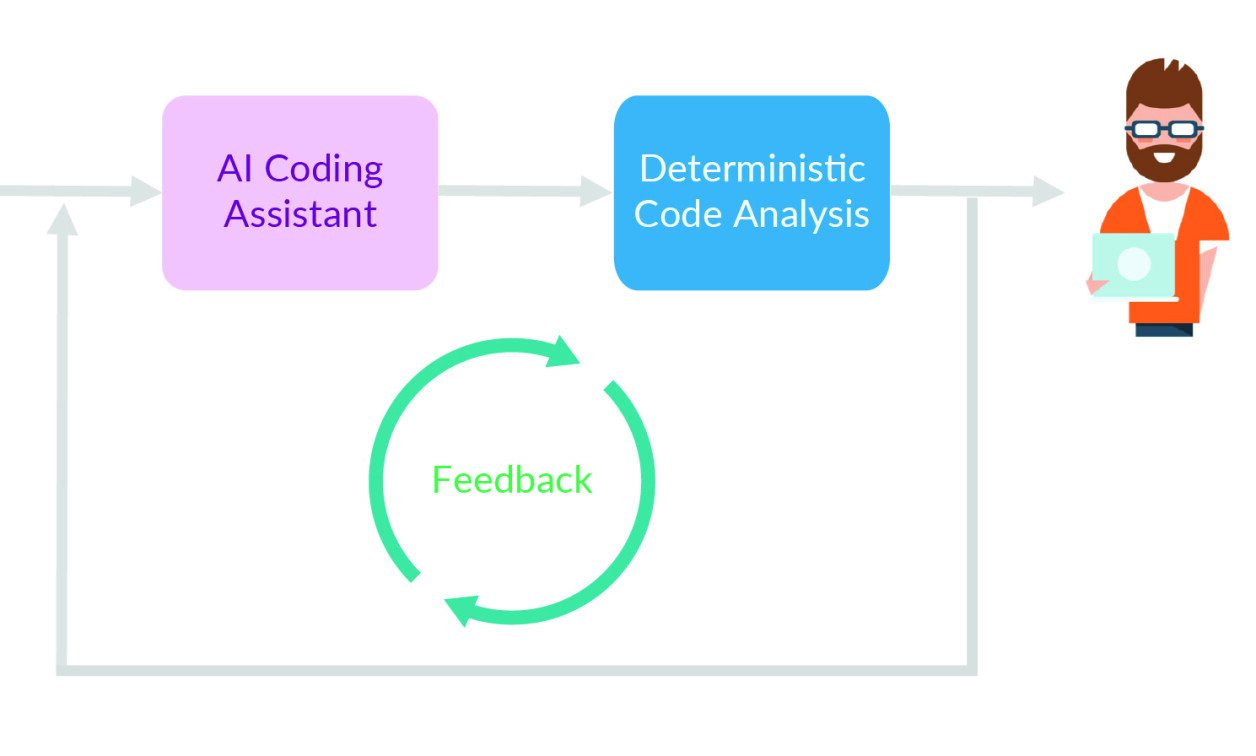

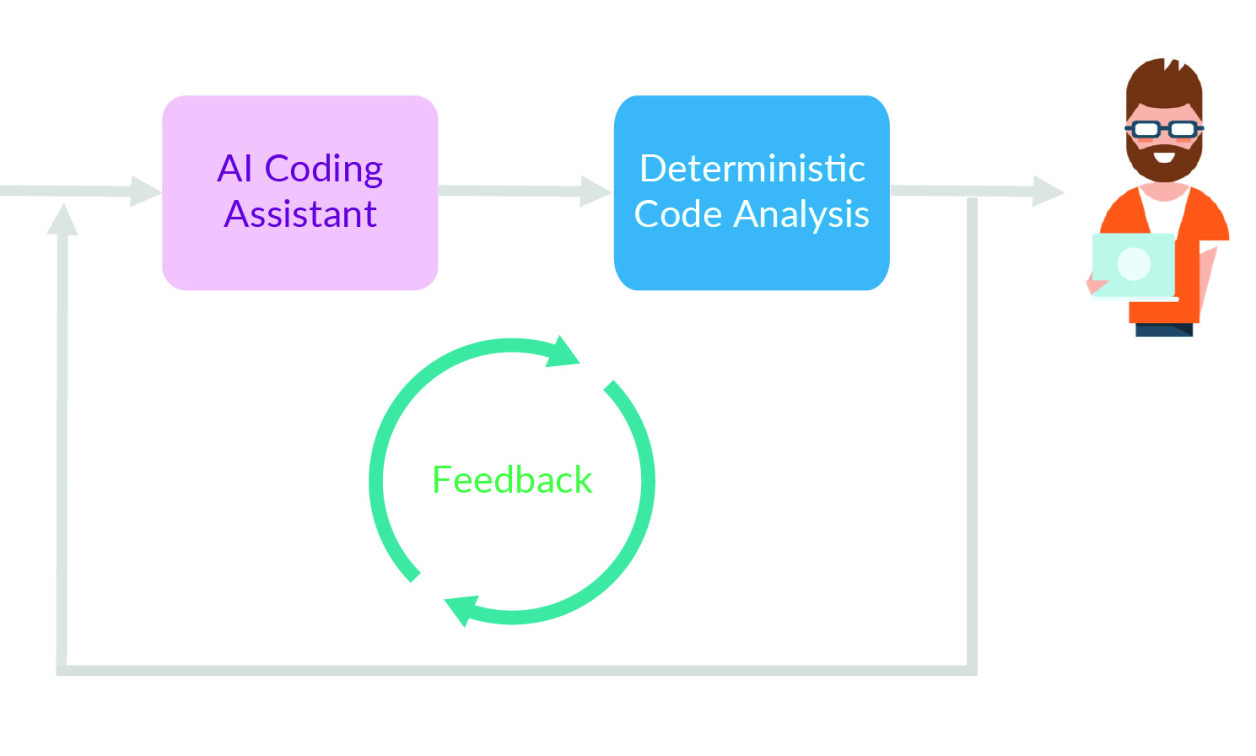

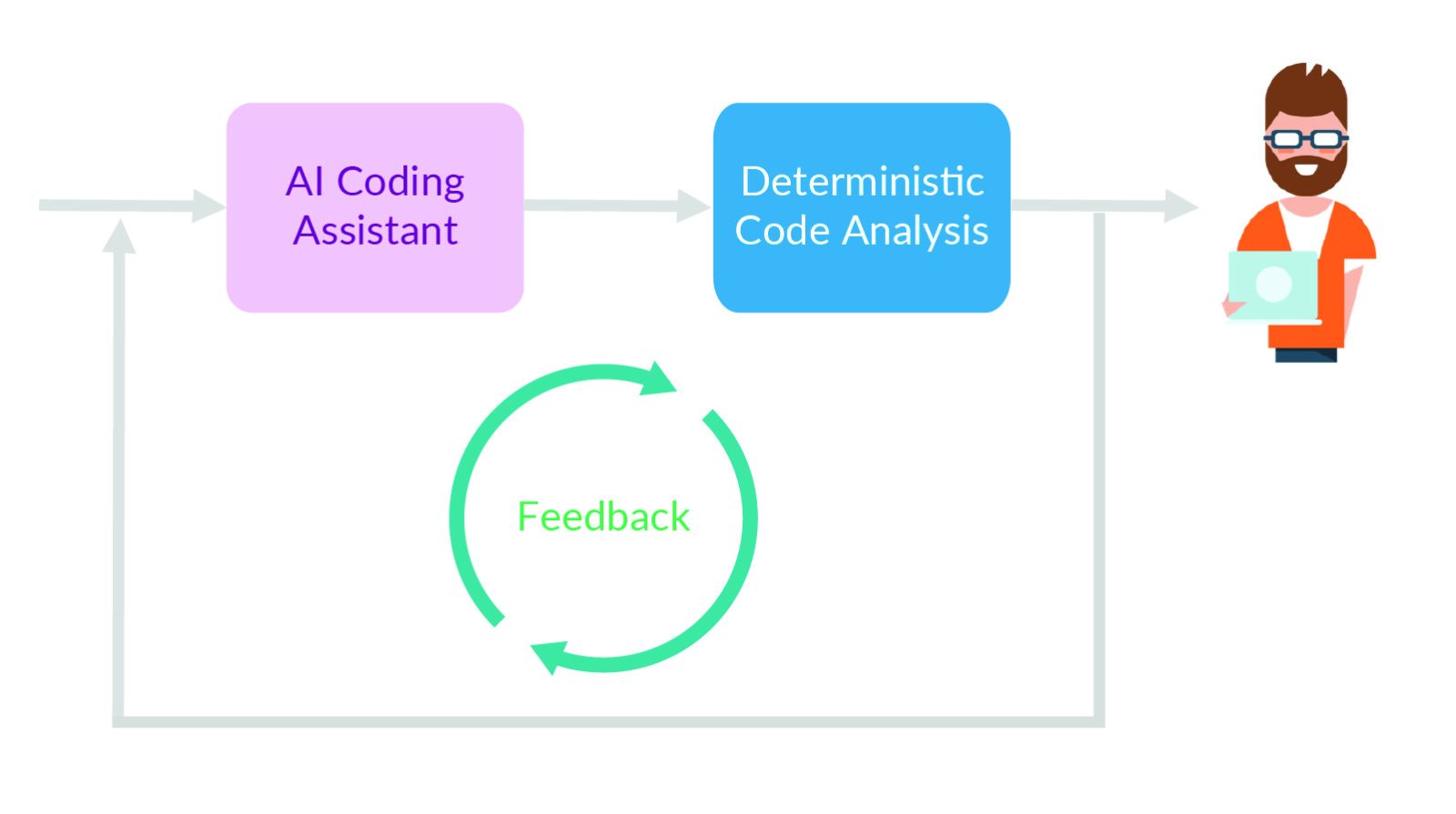

Hybrid of AI and deterministic code analysis

High-volume production of automatically generated code places greater pressure on developers. They face the problem of manually inspecting many lines of code that may hide lurking problems. The AI may generate hundreds or thousands of lines of code each day, and developers cannot keep pace with that rate of generation while maintaining adequate quality using traditional code review practices alone.

For embedded systems, the risks increase dramatically. A crash caused by faulty logic is inconvenient in a consumer app. In a system controlling motors, brakes, medical devices, or industrial equipment, it can create a serious safety hazard. A test-driven framework built for the shift-left environment substantially reduces the risk of using code generated from AI prompts. It also gives developers more time to check the code logic.

Crucially, development teams remain in control of which parts of the project are automated by AI. These decisions can evolve over time as teams gain confidence in the real-world performance of the tools.

The key piece of the puzzle is to embrace continuous integration practices in which developers use system specifications to create unit and integration tests in concert with the software itself. In a shift-left environment, the emphasis is on building tests that simplify integration and check functionality for correctness as more of the application is checked into the codebase. Once tests are written, the continuous integration harness can run them every time the software changes to ensure the change has not introduced any bugs.

When tests are built directly from requirements, the primary concern becomes whether the code behaves correctly and can be verified, regardless of whether it was written by a developer or generated by AI. The source of the code still matters, however, for traceability, review, and certification. Running AI-generated code against these tests helps ensure it is fit for purpose. Human review can then place greater emphasis on architecture, maintainability, efficiency, and resource utilization, while still confirming correctness and compliance. The AI can be given more refined prompts to improve correctness, performance, and overall code quality across these areas.
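As a minimal sketch of tests built directly from requirements, the Python below maps each requirement to one executable check. The requirement IDs, the `clamp_duty_cycle` function, and the 0 to 100 percent range are all hypothetical, chosen only for illustration. The checks do not care whether the function was written by a developer or generated by AI.

```python
from typing import Callable, Dict, List


def clamp_duty_cycle(requested_percent: float) -> float:
    """Candidate implementation under test (human- or AI-written).

    Hypothetical example: limit a motor duty-cycle command to 0..100 percent.
    """
    if requested_percent != requested_percent:  # NaN is the only value not equal to itself
        return 0.0
    return max(0.0, min(100.0, requested_percent))


# Each hypothetical requirement ID maps to one executable check.
REQUIREMENT_TESTS: Dict[str, Callable[[], bool]] = {
    "REQ-001 in-range commands pass through": lambda: clamp_duty_cycle(42.5) == 42.5,
    "REQ-002 commands above 100% saturate": lambda: clamp_duty_cycle(250.0) == 100.0,
    "REQ-003 negative commands saturate at 0%": lambda: clamp_duty_cycle(-5.0) == 0.0,
    "REQ-004 NaN fails safe to 0%": lambda: clamp_duty_cycle(float("nan")) == 0.0,
}


def run_requirement_tests(tests: Dict[str, Callable[[], bool]]) -> List[str]:
    """Return the sorted list of requirement IDs whose check failed."""
    return sorted(name for name, check in tests.items() if not check())
```

Keeping the requirement ID next to each check is one simple way to preserve traceability from a failing test back to the specification.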

Tests that complement those that check functionality are equally important. It is easy for security vulnerabilities such as buffer overflows or poor memory usage practices to sneak into code. Similarly, conformance to coding standards improves overall code safety, security, and reliability. This is where static analysis is essential.

Today, static analysis can handle far more than conformance with coding standards such as MISRA, CERT, or AUTOSAR C++14. By performing control flow and data flow analysis, static analysis can identify memory leaks, potential data corruption, unsafe memory usage, race conditions, and common security vulnerabilities such as buffer overflows and injection flaws.

By running static analysis and unit testing on each code update, the code generated by AI can be pushed to a much higher level of quality than is possible using a coding assistant on its own. As AI becomes more ingrained in development, static analysis and test-driven validation become the guardrails that enable teams to build trust in AI-generated code.
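One way to picture running both checks on each code update is a simple gate that accepts a change only when neither the static analyzer nor the test run reports a blocking problem. The sketch below is illustrative only: the `Violation` record, the severity scale (1 is most severe), and the rule names are assumptions, not the output format of any particular tool.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Violation:
    rule: str       # hypothetical rule identifier reported by a static analyzer
    severity: int   # assumption of this sketch: 1 is most severe
    location: str   # file and line, for example "uart.c:88"


@dataclass(frozen=True)
class GateResult:
    accepted: bool
    reasons: List[str]


def quality_gate(violations: List[Violation],
                 failed_tests: List[str],
                 max_blocking_severity: int = 2) -> GateResult:
    """Accept a code update only if no blocking violation or test failure exists."""
    reasons = [
        f"static analysis: {v.rule} at {v.location}"
        for v in violations
        if v.severity <= max_blocking_severity
    ]
    reasons += [f"unit test failed: {name}" for name in failed_tests]
    return GateResult(accepted=not reasons, reasons=reasons)
```

The list of reasons can be handed back to the developer, or to the AI assistant as part of a refined prompt, so that each rejected update carries the evidence needed to fix it.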

MCP tools, enriching AI capabilities

In effect, these quality assurance (QA) practices, particularly static analysis and test-driven validation, act as guardrails for AI. The more code AI generates, the more valuable these automated approaches become. Over time, development teams will gain confidence in what generative AI can achieve.

This confidence can extend to helping build the tests themselves. Generative AI can take as part of its prompt the specifications and requirements that developers would use to build unit and integration tests. Manual inspection will still be important for key unit tests, but code generation can speed up their initial creation. These tests can be iteratively refined by feeding the results of test execution and human review back into the AI, allowing both the tests and the underlying code to improve over time.

There will even be situations where automated test generation can improve overall quality more quickly than is possible using manual methods. Code coverage analysis is a key part of any project that involves high-criticality software. AI can analyze which parts of the application remain uncovered and generate new test cases that exercise those functions more fully. This can help teams satisfy demanding structural coverage goals, including statement, branch, and modified condition/decision coverage (MC/DC).
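To make the MC/DC goal concrete, here is a small sketch of a unique-cause MC/DC check. A condition counts as covered when two test vectors differ only in that condition and produce different decision outcomes. The decision `a and (b or c)` and the helper names are hypothetical. Real coverage tools instrument compiled code rather than evaluating a Python function.

```python
from itertools import combinations
from typing import Callable, List, Sequence, Set, Tuple


def decision(a: bool, b: bool, c: bool) -> bool:
    """Hypothetical decision under test, for example an interlock condition."""
    return a and (b or c)


def mcdc_covered(dec: Callable[..., bool],
                 tests: Sequence[Tuple[bool, ...]]) -> Set[int]:
    """Return indices of conditions shown to independently affect the outcome."""
    covered: Set[int] = set()
    for t1, t2 in combinations(tests, 2):
        differing = [i for i in range(len(t1)) if t1[i] != t2[i]]
        # Unique-cause pair: exactly one condition changes and the outcome flips.
        if len(differing) == 1 and dec(*t1) != dec(*t2):
            covered.add(differing[0])
    return covered


def missing_conditions(dec: Callable[..., bool],
                       tests: Sequence[Tuple[bool, ...]]) -> List[int]:
    """Conditions that still need an independence pair."""
    return sorted(set(range(len(tests[0]))) - mcdc_covered(dec, tests))
```

With the three vectors `(True, True, False)`, `(False, True, False)`, and `(True, False, False)`, conditions `a` and `b` are covered and `missing_conditions` reports index 2. Adding `(True, False, True)` closes the gap, which is the kind of targeted test case a generation tool would be asked to propose.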

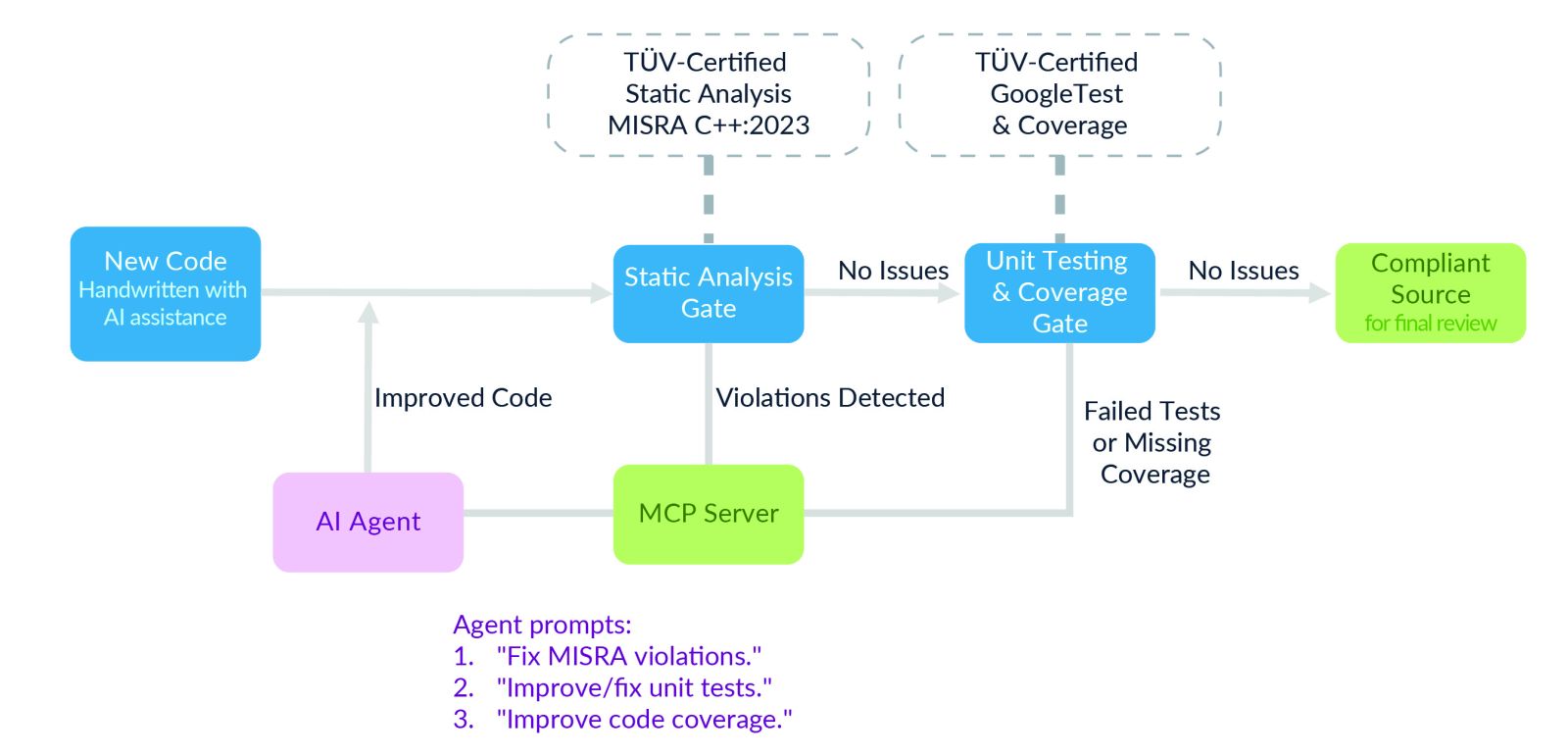

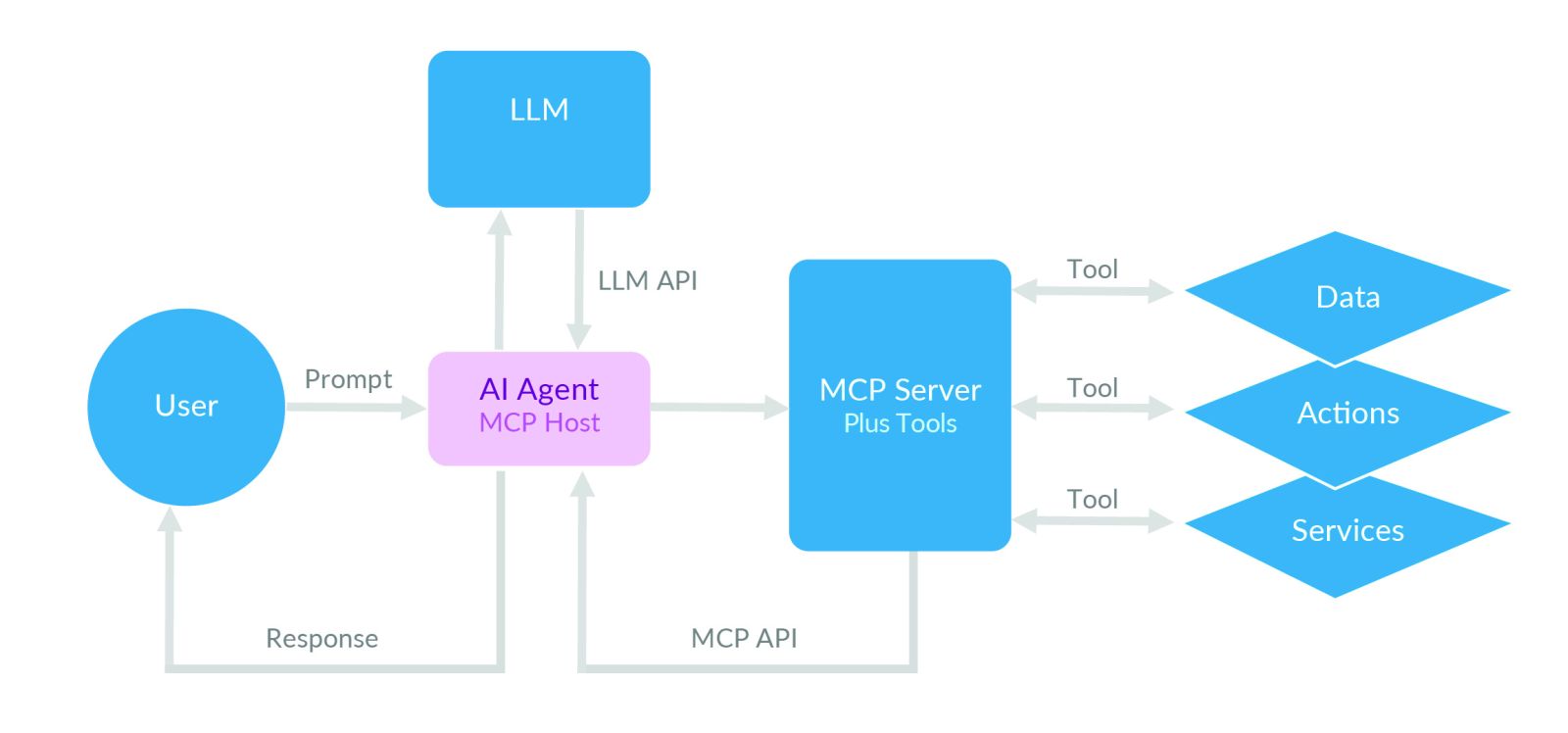

Some organizations are beginning to explore multi-agent workflows, in which different AI agents specialize in tasks such as code generation, remediation of static-analysis violations, test creation, and coverage improvement. In safety-critical embedded development, however, these workflows are typically constrained and remain under human supervision.

Through mechanisms such as the Model Context Protocol (MCP), software agents can invoke static analysis, unit testing, and coverage tools as part of the development process. MCP provides the structured contextual information needed to ensure that the agents operate within defined boundaries and act on relevant data.

For example, an MCP server can expose static analysis violations, coverage gaps, and requirements traceability information directly to an AI agent. Rather than generating arbitrary code, the agent can use that context to propose fixes, create additional tests, or improve statement, branch, and MC/DC coverage. Developers still review and approve the results before changes are committed.
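The pattern can be sketched in plain Python. This is not the MCP wire format and does not use any MCP SDK. It is a stand-in showing two ideas from the text: the agent can reach only the tools it has been given, and nothing is committed without human approval. All class, function, and tool names here are hypothetical.

```python
from typing import Callable, Dict, List


class ToolBoundaryError(Exception):
    """Raised when an agent asks for a tool outside its allowlist."""


class ToolContext:
    """Exposes a fixed set of read-only tools to an agent."""

    def __init__(self, tools: Dict[str, Callable[[], List[str]]]) -> None:
        self._tools = dict(tools)

    def call(self, name: str) -> List[str]:
        if name not in self._tools:
            raise ToolBoundaryError(f"tool not exposed to agent: {name}")
        return self._tools[name]()


def propose_changes(context: ToolContext) -> List[str]:
    """Stand-in for an agent: turn findings into proposals, never commits."""
    proposals = [f"fix: {v}" for v in context.call("static_analysis_violations")]
    proposals += [f"add test for: {g}" for g in context.call("coverage_gaps")]
    return proposals


def commit_approved(proposals: List[str],
                    approve: Callable[[str], bool]) -> List[str]:
    """Only proposals that a human reviewer approves are committed."""
    return [p for p in proposals if approve(p)]
```

In practice the `approve` callback would be a code-review step rather than a function, but the shape is the same: tool output goes in, proposals come out, and a person decides what lands.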

Generative AI can also assist in identifying the causes of problems found in the field. By analyzing logs, stack traces, and telemetry, AI can help identify likely causes, highlight gaps in test coverage, and accelerate the verification of fixes before they are delivered through an OTA update.

Over time, these techniques may lead to greater levels of automation. But in safety-critical embedded systems, the key is not fully autonomous AI. It is combining AI with static analysis, testing, coverage, and human oversight to create a faster, safer, and more controlled development process.