The world thought Google was caught off guard by the AI revolution. It was not. And the chips it has been quietly building since 2016 may be about to change everything.

Introduction: When everybody wrote Google off

November 2022 was a humbling month to be Google. OpenAI had just released ChatGPT to the public, and the world lost its mind. Within days, it was clear that something fundamentally different had arrived: a conversational AI that could write, reason, explain and engage in a way that felt surprisingly human. The tech press declared a new era, and almost everyone agreed on one thing: Google, the company that had done as much as anyone to shape the modern web, had dominated how the world finds information for 20 years and called itself an AI-first company, had been caught napping.

The irony was that Google had the researchers, the data and the infrastructure, yet it came second to a company most people had never heard of. Google had published the 2017 academic paper ("Attention Is All You Need") that introduced the Transformer architecture, which made ChatGPT possible in the first place. And yet here was a scrappy startup, backed by $1 billion from Microsoft, doing what Google had apparently been too cautious or too comfortable to do: ship a product that showed ordinary people what AI could actually feel like.

Inside Google's offices, the alarm was real, and reports said a "Code Red" was declared. Co-founders Larry Page and Sergey Brin reportedly checked back in, and the company accelerated development of Bard, its own AI chatbot, and began fast-tracking AI features across its product suite. From the outside, it looked like panic: a giant scrambling to catch up.

Google stumbled with its initial launch. It rebuilt Bard and relaunched it as Gemini. What nobody discussed at the time was what Google had been quietly building underneath it all for nearly a decade. As Google regained its confidence, CEO Sundar Pichai began hinting at what had been brewing behind the scenes.

In a recent interview, Pichai pulled back the curtain on how the company actually experienced that moment, and his description sounds nothing like a company in crisis:

"It was obviously very inward-focused in that moment. To me, it was very clear in that moment, 'Hey, the Overton window shifted.' I felt like the company was built for that moment. The vertical thing, it's not an accident or something. It was very intentional. We were on the seventh version of TPUs. I remember it might have been 2016 Google I/O where we announced the TPUs and spoke about how we're building AI data centres. This was 2016. The company was operating in an AI-first way. We had deeply internalized this shift. To me, we were behind in terms of frontier LLM models, but we had all the capabilities internally, and we had to execute to meet the moment. But the exciting part was when I look at it from a full stack, we had the research teams, we had the infrastructure teams, we had all the platforms."

The capabilities Pichai was referring to included the research teams, the infrastructure and the platforms.
But the most important one was the one almost nobody outside the company fully understood at the time: the chip.

Part I: The chip game

While the rest of the tech world was buying Nvidia, Google had been playing a different game all along. Nvidia's GPUs became the essential hardware of the modern AI era, the picks and shovels of the gold rush. Demand for Nvidia's H100 chips skyrocketed, outstripping supply so dramatically that access to them became a competitive advantage in its own right. As companies queued up, prices soared, and Nvidia's market capitalisation crossed a trillion dollars, then two trillion, then briefly touched three. Jensen Huang became one of the most celebrated CEOs in the world. Nvidia was not just a chip company anymore; it was the infrastructure layer the entire AI industry was built on. It later became the first company to reach a $4 trillion market capitalisation.

Google, meanwhile, had been doing something different since 2016. TPUs, or Tensor Processing Units, are chips designed by Google specifically for the kind of mathematical operations that AI models require. Unlike Nvidia's GPUs, which are general-purpose processors adapted for AI workloads, TPUs are purpose-built from the ground up for one thing: running neural networks efficiently.

The first generation was announced at Google I/O in 2016, almost as a footnote in a broader AI presentation. Few people outside the industry paid much attention. But Google kept building. By the time ChatGPT changed the world in late 2022, Google was already several generations into its TPU programme, with years of iterative development, architectural refinement and hard-won engineering experience that no amount of money could simply buy overnight.

In practical terms, this meant that when Google's most sophisticated AI models finally arrived in the form of Gemini (in Nano, Pro and Ultra versions), they and the successive versions that followed were not just capable; they were fast, accurate and up to date. And they ran on infrastructure that Google owned, controlled and had been perfecting for nearly a decade. The rest of the industry had been building on rented land while Google had quietly been laying its own foundation the whole time.

Part II: The inference problem

For a while, the AI conversation was dominated by one question: how big is your model, and how well does it perform on benchmarks? Training, the process of feeding vast amounts of data to an AI model so that it learns patterns, relationships and reasoning abilities, was treated as the primary challenge. Companies competed on the size of their training runs, the sophistication of their architectures and their scores on standardised tests. A better-trained model meant a smarter AI, and a smarter AI meant winning.

What the industry slowly, and somewhat painfully, discovered is that training is only half the problem. The other half is inference: the process of actually running the model in real time to answer a user's question.
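A toy sketch may make the split concrete. It assumes a one-parameter linear model (`y = w * x`) as a stand-in for a neural network; the names `predict` and `train` and the data are hypothetical, and nothing here reflects how Gemini or any production model is actually trained. Training repeats forward passes, computes gradients and updates weights; inference is only the forward pass.

```python
"""Toy contrast between training and inference (illustrative only).

Assumption: a one-parameter linear model y = w * x stands in for a neural
network. Real models have billions of parameters, but the split is the same.
"""


def predict(w: float, x: float) -> float:
    """Forward pass: the only computation inference needs."""
    return w * x


def train(data: list[tuple[float, float]], lr: float = 0.01, epochs: int = 200) -> float:
    """Training: repeated forward passes, gradients and weight updates."""
    w = 0.0
    for _ in range(epochs):
        for x, y in data:
            error = predict(w, x) - y
            grad = 2 * error * x  # derivative of (w*x - y)^2 with respect to w
            w -= lr * grad        # gradient-descent weight update
    return w


if __name__ == "__main__":
    # Hypothetical training data generated from y = 3x.
    data = [(1.0, 3.0), (2.0, 6.0), (3.0, 9.0)]
    w = train(data)                    # expensive, done once up front
    print(round(w, 3))                 # learned weight, close to 3.0
    print(round(predict(w, 10.0), 2))  # cheap, but repeated for every user query
```

The asymmetry is the point: training is a large, one-off cost, while inference runs again for every single query, so at consumer scale its latency and cost dominate.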

Put simply, when you type something into ChatGPT or Google's Gemini and receive a response, that response is generated through inference: the model processes your input and produces an output, in real time, at scale, for potentially millions of users simultaneously.

That makes inference hard: it is computationally intensive, time-sensitive and expensive. A model that produces brilliant answers but takes thirty seconds to generate them is not useful in a consumer product. Users expect responses in seconds. The chip that enables fast, efficient inference therefore becomes a critical cog.

This is where Google's TPUs became a real competitive weapon. Google trains its own frontier models on TPUs, but the chips proved particularly well-suited to inference workloads. Their architecture, purpose-built for the matrix multiplication operations that underpin neural network computation, lets them process inference requests with a speed and efficiency that general-purpose GPUs struggle to match at scale. Google's models, running on Google's TPUs in Google's data centres, delivered responses in Google's products with a speed that started turning heads.

Then Google leaned hard into something it had started years earlier: it opened the doors. Rather than keeping its TPU infrastructure solely for internal use, Google had been offering its chips through Google Cloud since 2018, and it now pushed them to external companies, including startups, enterprises and AI labs, that wanted to rent TPU capacity for their own workloads. A piece of hardware built to give Google an internal advantage was now a commercial product. The chip had become a business, and Google Cloud became one of Google's fastest-growing divisions.
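To see why matrix multiplication matters so much, here is a minimal sketch of one neural-network layer serving a small batch of requests. It is pure Python with hypothetical weights, not a real model or any TPU API; on actual TPUs, frameworks hand operations like this to dedicated matrix-multiply hardware (systolic arrays). The layer computes `relu(x @ w + bias)`, and the matrix multiply is where nearly all the arithmetic happens.

```python
"""A neural-network layer reduced to the matrix multiply TPUs accelerate.

Illustrative sketch only: pure Python, hypothetical weights, no real model.
"""


def matmul(a: list[list[float]], b: list[list[float]]) -> list[list[float]]:
    """Naive matrix multiply: (n x k) @ (k x m) -> (n x m)."""
    k = len(b)
    assert all(len(row) == k for row in a), "inner dimensions must match"
    m = len(b[0])
    return [[sum(row[i] * b[i][j] for i in range(k)) for j in range(m)] for row in a]


def dense_relu(
    x: list[list[float]], w: list[list[float]], bias: list[float]
) -> list[list[float]]:
    """One layer: relu(x @ w + bias). The matmul dominates the cost."""
    y = matmul(x, w)
    return [[max(0.0, v + b) for v, b in zip(row, bias)] for row in y]


if __name__ == "__main__":
    # A batch of 2 "requests", each a 3-dimensional input vector.
    x = [[1.0, 2.0, 3.0],
         [2.0, 1.0, 0.0]]
    # Hypothetical 3x2 weight matrix and bias for a tiny layer.
    w = [[1.0, -1.0],
         [0.5, 2.0],
         [-1.0, 0.5]]
    bias = [0.5, -1.0]
    print(dense_relu(x, w, bias))  # [[0.0, 3.5], [3.0, 0.0]]
```

A real model stacks many such layers with matrices thousands of elements wide, which is why hardware built around fast matrix multiplication pays off at inference scale.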

Part III: The day Google scared Nvidia

The moment that crystallised just how serious the TPU threat had become arrived without much warning. Reports emerged that Meta, one of the largest consumers of AI computing infrastructure in the world and one of Nvidia's most important customers, had signed a deal with Google to use TPUs for certain workloads.

The market reacted immediately. Nvidia's share price dropped, and billions of dollars in market capitalisation evaporated overnight. The signal was clear: if Meta was diversifying away from Nvidia toward Google's custom silicon, the assumption that Nvidia had a permanent lock on AI infrastructure was no longer safe.

Nvidia pushed back, and it did so loudly, in a post on X (formerly Twitter).

"We're delighted by Google's success — they've made great advances in AI and we continue to supply to Google," the company said, in a statement that managed to sound both gracious and dismissive at the same time. "NVIDIA is a generation ahead of the industry — it's the only platform that runs every AI model and does it everywhere computing is done. NVIDIA offers greater performance, versatility, and fungibility than ASICs, which are designed for specific AI frameworks or functions," it added.

The reference to ASICs (application-specific integrated circuits), the category TPUs fall into, was pointed. Nvidia's argument was essentially this: yes, purpose-built chips can be very fast at the specific thing they are designed for, but general-purpose platforms that can run anything, anywhere, on any framework, are ultimately more valuable. Versatility beats specialisation.

It is a reasonable argument. It is also, notably, the argument of a company that felt the ground shift beneath it.

Nvidia also made a significant strategic move around this period, acquiring Groq, a chip startup that had built a reputation for extraordinarily fast inference performance.
The acquisition was widely read as a direct response to the inference challenge: if the next competitive battleground was not training but serving models quickly and cheaply at scale, Nvidia wanted the best inference hardware in its portfolio.

Part IV: Google strikes back with TPU v8

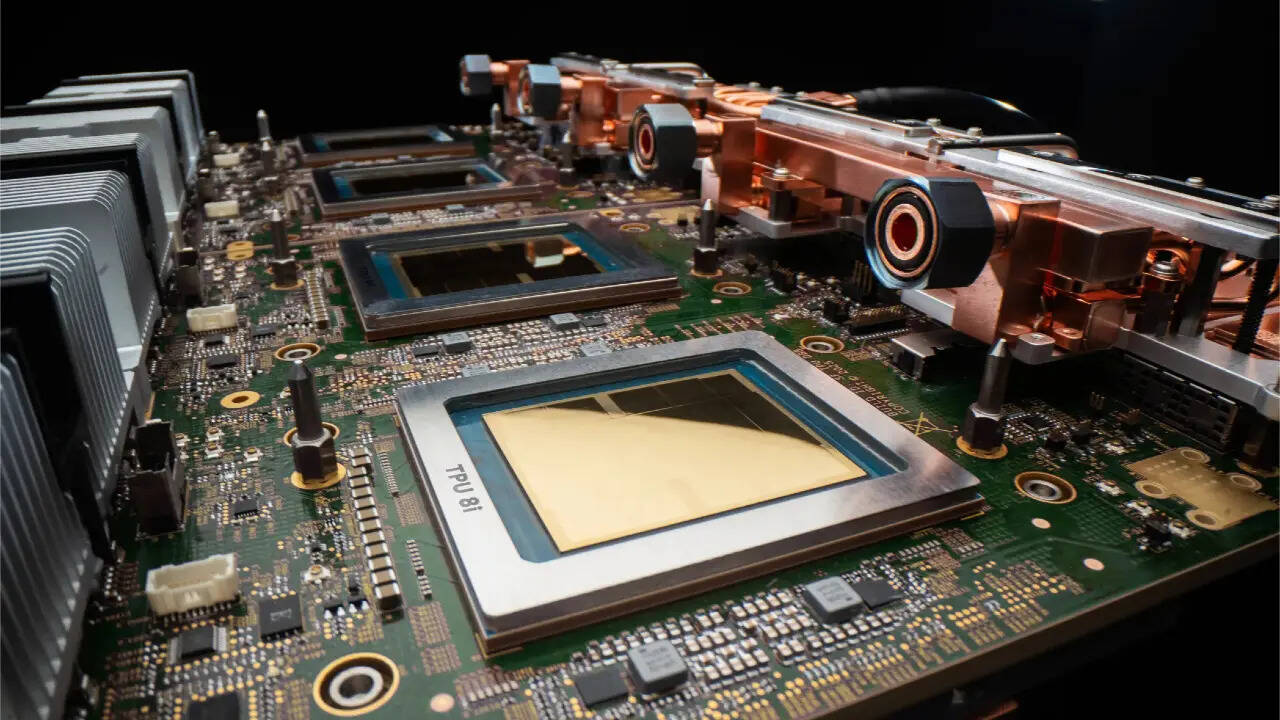

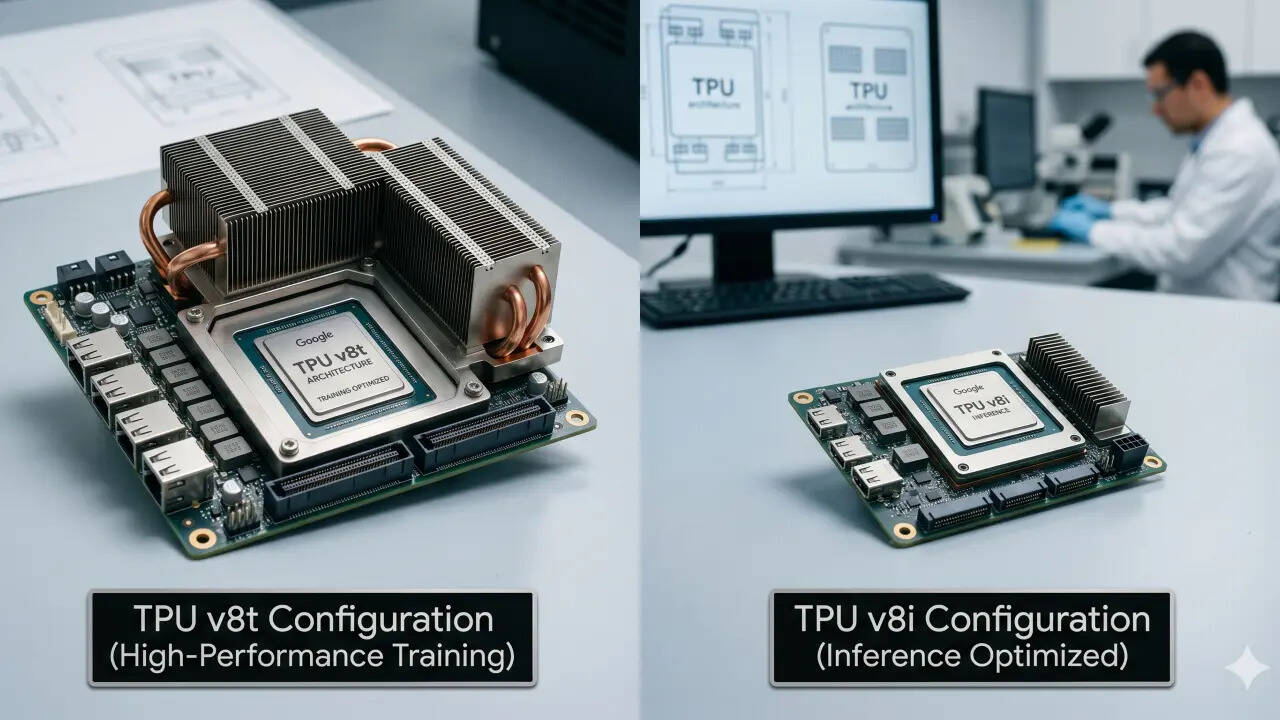

Just when everyone thought the battle was cooling down, Google's answer arrived in the form of its latest generation of custom silicon, designed to address both sides of the AI compute equation at once. The company announced TPU v8 in two distinct configurations, each targeting a different part of the AI workload spectrum.

The first, TPU 8t, is built for massive-scale training: the kind of enormous, months-long computation required to build the next generation of frontier AI models. It is Google's answer to the question of whether its custom chips can compete with Nvidia's best hardware on the raw, sustained power required to train models at the frontier.

The second, TPU 8i, is built for something different: high-performance, low-latency agentic inference. This is the chip designed for a world where AI is not just answering simple questions but operating as an autonomous agent: planning multi-step tasks, executing actions, interacting with external systems, and doing it all quickly enough that users and enterprise systems don't notice the delay.

Together, the two chips represent something more significant than a hardware update: Google's clearest articulation yet of what it is trying to be. Not a search company with an AI strategy, but a full-stack AI company, one that owns the research, the models, the chips, the data centres, the cloud platform and the consumer products.

Sundar Pichai's remark about the Overton window is worth returning to, because it captures something important about what Google has been doing and what it is now saying openly. The Overton window is a concept from political theory that describes the range of ideas the public is willing to consider acceptable at any given moment. Pichai used it to describe the moment ChatGPT changed what the world thought AI could and should do. The window shifted. Suddenly, people were ready for AI in a way they had not been before.

Google's argument was implicit in Pichai's words and increasingly explicit in the company's product and hardware announcements: it was never really behind. It was waiting for the window to open. And when it did, it had everything it needed: the research, the infrastructure, the chips and the models.

Whether or not one accepts that narrative, what is harder to dispute is where Google stands now. Its Gemini models are competitive with the best in the world; its TPU infrastructure is attracting customers that Nvidia considered its own; its cloud business is growing; and its chips, several generations in the making and built in the years when nobody was paying attention, are now at the centre of one of the most profound technology competitions of the modern era.