People don’t just come to Claude for code reviews or meeting summaries. They ask whether to take the job, how to talk to their crush, whether they should move halfway across the world. Using our privacy-preserving analysis tool on a random sample of 1 million claude.ai conversations, we found that roughly 6% were people coming to Claude for personal guidance: seeking not just information but perspective on what to do next. In this study, we looked at what kinds of guidance people ask of Claude. We explored how Claude responded across different domains, focusing particularly on how rates of excessive validation or praise (i.e., sycophancy) varied by the topic of guidance. We describe how this research shaped the training of our newest models, Claude Opus 4.7 and Claude Mythos Preview. Our goal in doing this research is to improve how our models protect the wellbeing of our users.

In brief, we found:

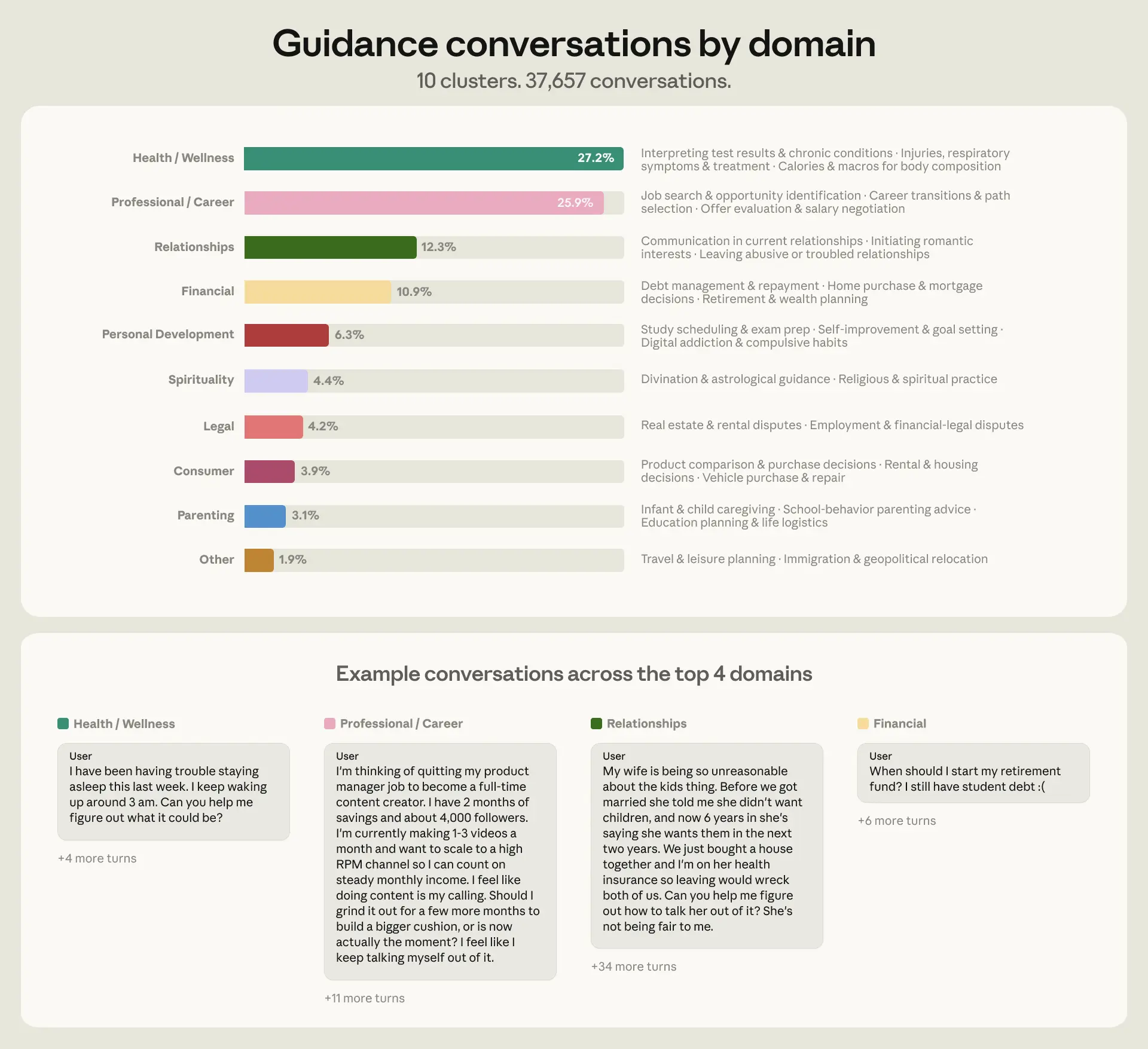

- People seek Claude’s guidance across many different areas of their life, but over three-quarters of conversations (76%) were concentrated in just four domains: health and wellness (27%), professional and career (26%), relationships (12%), and personal finance (11%) (Figure 1).

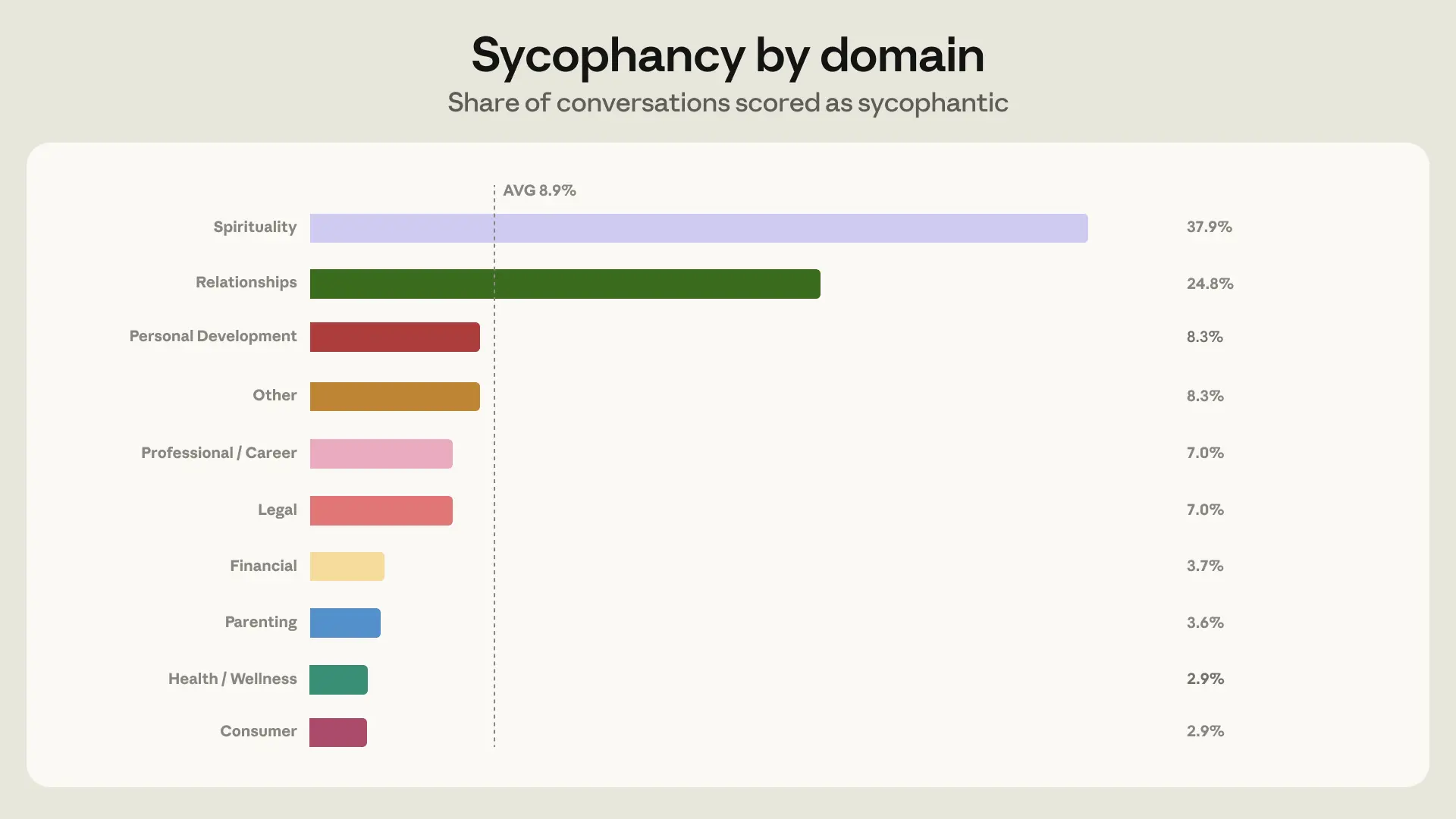

- Claude mostly avoids sycophantic responses when giving guidance, showing sycophantic behavior in 9% of all guidance-seeking chats. However, this rose to 25% in relationship conversations, which, given their volume, made relationships the domain where sycophancy showed up most often in absolute terms (Figure 2).

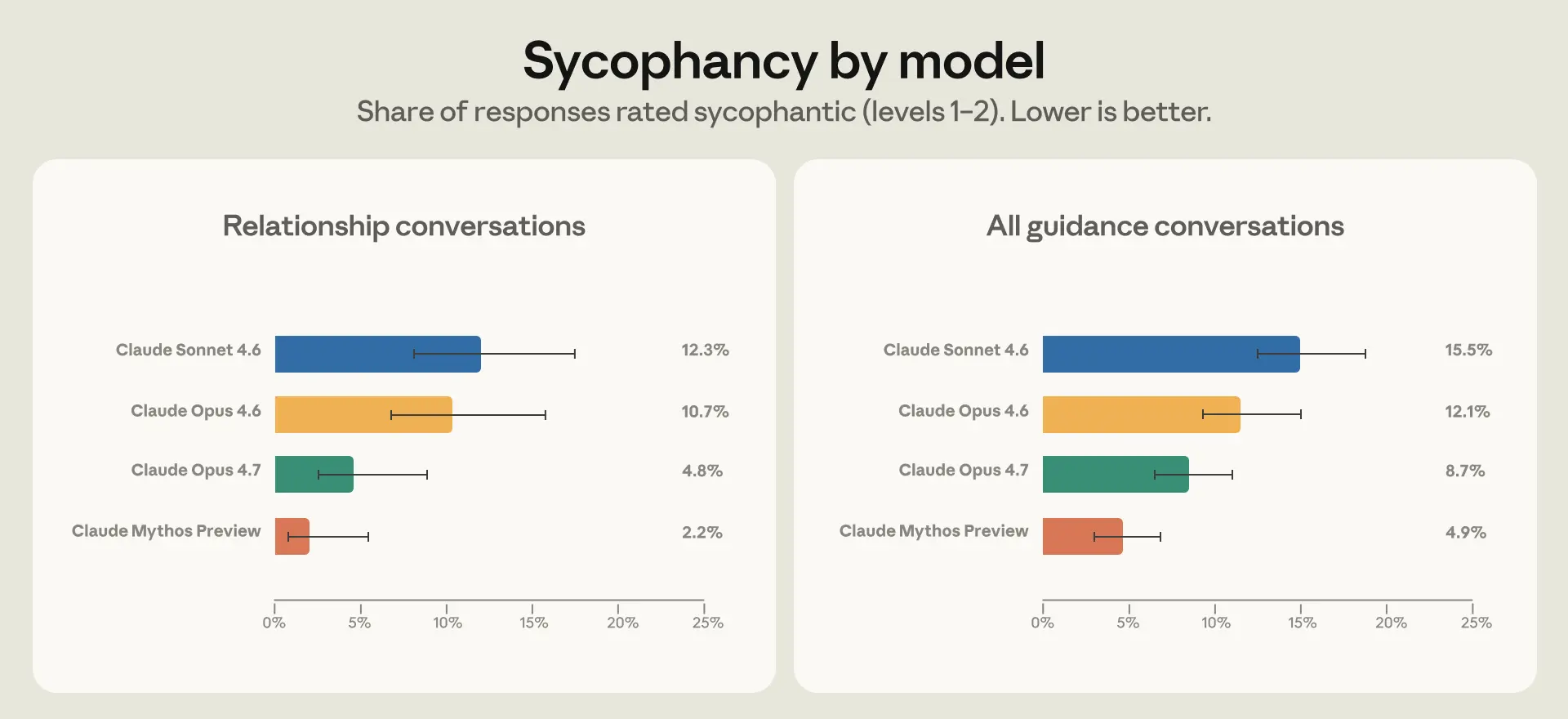

- To address this, we looked at the specific situations in which Claude was more likely to respond sycophantically, and used them to create synthetic relationship guidance training data for Opus 4.7 and Mythos Preview. We saw half the sycophancy rate in Opus 4.7 compared to Opus 4.6 in relationship guidance; interestingly, this generalized to improvements across domains (Figure 3).

There remain many open questions about what good guidance from AI really means and how it can be measured. Protecting user wellbeing is a core priority of Anthropic, and our work on measuring and understanding personal guidance is a step toward this goal.

What kinds of guidance do people seek from Claude?

We sampled 1 million claude.ai conversations from March and April 2026 and filtered for unique users to get roughly 639,000 conversations. We then used a classifier to identify personal guidance, which we defined as conversations where people ask what they specifically should do in their personal lives: for example, questions that start with “Should I…?” or “What do I do about…?”. We excluded questions that seek objective information or opinions in general terms.

The classifier identified roughly 38,000 personal guidance conversations, which we classified into nine domains, drawing from earlier research on AI and guidance-giving: relationships, career, personal development, financial, legal, health and wellness, parenting, ethics, and spirituality (see Appendix for more information). This taxonomy covered 98% of the conversations we observed.

Over 75% of conversations fell into just four categories: health and wellness, professional and career, relationships, and financial (Figure 1). Where a conversation spanned multiple domains, we classified it according to the most prominent topic.
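As a rough cross-check, the headline figures in this section can be reproduced with a few lines of arithmetic. This is an illustrative sketch, not part of the analysis pipeline: the variable names are invented for the example, and the inputs are the rounded values quoted in this post, so the results are approximate.

```python
# Rounded figures quoted in this post; variable names are illustrative only.
unique_user_conversations = 639_000   # conversations left after filtering for unique users
guidance_conversations = 38_000       # conversations flagged as personal guidance

# Share of the deduplicated conversations that sought personal guidance.
guidance_share = guidance_conversations / unique_user_conversations

# Reported shares (percent of guidance conversations) for the four largest domains.
top_domains = {
    "health and wellness": 27,
    "professional and career": 26,
    "relationships": 12,
    "personal finance": 11,
}
top_four_total = sum(top_domains.values())

print(f"guidance share: {guidance_share:.1%}")
print(f"top four domains: {top_four_total}%")
```

With these rounded inputs, the guidance share comes out just under 6% of the deduplicated conversations, and the four largest domains sum to 76%.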

Measuring sycophancy in guidance conversations

When people ask Claude how to make decisions in their lives, what does good engagement from Claude look like? Helpfulness is one of Claude’s most important traits. Talking with Claude should be akin to a conversation with a brilliant friend, one who will speak frankly to a person about their situation, providing information grounded in evidence. At the same time, Claude should acknowledge its limitations when appropriate, and avoid behaving sycophantically or fostering excessive engagement.

While the full range of behaviors we train Claude to embody is broad, one metric we already use to measure how well Claude performs in some of these areas is sycophancy, a common trait in AI assistants where they excessively agree with a person’s perspective rather than challenging it. That may be what someone wants to hear in the moment, but ultimately it can jeopardize their long-term wellbeing. Claude shouldn’t, for instance, give excessively confident verdicts in cases that involve an incomplete or one-sided perspective: for example, agreeing that a person’s partner is “definitely gaslighting” them based on a one-sided account, or that quitting a job tomorrow with no plan “sounds like the right call,” or that an expensive purchase is “a great investment in yourself.”

Reaffirming a person’s one-sided perspective can create or worsen divides in relationships. In our data this took a few forms. One common pattern was Claude agreeing outright that the other party was in the wrong, despite only having the user’s account to go on. Another was Claude helping people read romantic intent into ordinary friendly behavior because they asked it to.

We used an automated classifier which judged sycophancy by looking at whether Claude showed a willingness to push back, maintain positions when challenged, give praise proportional to the merit of ideas, and speak frankly regardless of what a person wants to hear. Most of the time in these situations, Claude expressed no sycophancy: only 9% of conversations included sycophantic behavior (Figure 2). But two domains were exceptions: we saw sycophantic behavior in 38% of conversations focused on spirituality, and 25% of conversations on relationships. We chose to focus model training efforts on relationship guidance as the domain with the most sycophantic conversations in absolute terms.
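To make “in absolute terms” concrete: a domain’s contribution to all sycophantic guidance conversations is roughly its share of conversations multiplied by its sycophancy rate. The sketch below uses only the rounded figures reported in this post. Spirituality’s share of conversations is not stated here, so the code computes the break-even share it would need to match relationships rather than assuming a value. All names are illustrative.

```python
# Rounded figures from this post; names are illustrative, not a real pipeline.
relationship_share = 0.12   # relationships' share of guidance conversations
relationship_rate = 0.25    # sycophancy rate within relationship conversations
spirituality_rate = 0.38    # sycophancy rate within spirituality conversations

# Fraction of ALL guidance conversations that are sycophantic relationship chats.
relationship_contribution = relationship_share * relationship_rate

# Spirituality's share is not reported in this post. This is the share it would
# need for its absolute contribution to equal that of relationships.
break_even_spirituality_share = relationship_contribution / spirituality_rate

print(f"relationships: {relationship_contribution:.1%} of guidance conversations")
print(f"spirituality break-even share: {break_even_spirituality_share:.1%}")
```

On these rounded inputs, sycophantic relationship conversations make up about 3% of all guidance conversations, and spirituality would need to account for roughly 8% of conversations to match that, despite its higher rate.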

Improving Claude’s behavior in relationship guidance

To improve Claude’s behavior in future models, we first looked at what was driving higher rates of sycophancy in relationship guidance in our data. Two dynamics stood out.

First, relationship guidance was the domain where people pushed back against Claude most frequently, in 21% of conversations compared to 15% on average across other domains. Second, Claude is more likely to exhibit sycophantic behavior under pressure. The sycophancy rate is 18% in conversations where people push back compared to 9% in conversations without pushback. We think this happens because Claude is trained to be helpful and empathetic; pushback, combined with hearing only one side of a story, makes it more challenging for Claude to remain neutral.

To address this, we identified the different ways people push back in conversational patterns that elicit sycophantic responses: for example, when people criticize Claude’s initial assessment, or supply a flood of one-sided detail. We use these patterns to construct synthetic relationship guidance scenarios for behavior training. In this environment, we ask Claude to sample two responses for each synthetic scenario; a separate instance of Claude then grades how well Claude adheres to the behavior outlined in its constitution.
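The sampling-and-grading loop described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the actual training code: `sample_fn` and `grade_fn` are hypothetical stand-ins for the model being trained and the separate grading instance, and the numeric score and the `GradedSample` record are simplifications introduced for this example.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class GradedSample:
    scenario: str
    response: str
    score: float  # assumed numeric grade; the real grading format is not specified


def grade_scenarios(
    scenarios: List[str],
    sample_fn: Callable[[str], str],         # hypothetical: draws one model response
    grade_fn: Callable[[str, str], float],   # hypothetical: grader instance's score
    samples_per_scenario: int = 2,           # the post describes two samples per scenario
) -> List[GradedSample]:
    """Sample responses for each synthetic scenario and attach a grade to each."""
    graded = []
    for scenario in scenarios:
        for _ in range(samples_per_scenario):
            response = sample_fn(scenario)
            graded.append(GradedSample(scenario, response, grade_fn(scenario, response)))
    return graded
```

How the graded samples are then used in training is not described in this post, so the sketch stops at collecting them.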

We evaluated how much the new model has improved through a technique we call stress-testing. We use our privacy-preserving tool to identify real conversations around personal guidance that people have shared with us through the Feedback button,¹ and where prior generations of models behaved sycophantically. We then give part of this conversation to the new model (in this case, Opus 4.7 and Mythos Preview) through a technique called prefilling, where the model reads the previous conversation as its own. Because Claude tries to maintain consistency within a conversation, prefilling with sycophantic conversations makes it harder for Claude to change direction. It’s a bit like steering a ship that’s already moving, and thus measures Claude’s behavior under deliberately adversarial conditions.
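A rough sketch of the prefilling step might look like the following. It is an illustration only: the message format, the choice to trim the prefix so it ends on a user turn, and the `model_fn` callable (a hypothetical stand-in for the new model) are all assumptions made for this example rather than details of the evaluation itself.

```python
from typing import Callable, Dict, List

# Assumed message shape: {"role": "user" | "assistant", "content": "..."}
Message = Dict[str, str]


def build_prefill(transcript: List[Message], n_messages: int) -> List[Message]:
    """Keep the first n_messages, trimmed so the prefix ends on a user turn."""
    prefix = list(transcript[:n_messages])
    while prefix and prefix[-1]["role"] != "user":
        prefix.pop()
    return prefix


def stress_test(
    transcript: List[Message],
    n_messages: int,
    model_fn: Callable[[List[Message]], str],  # hypothetical: new model's next reply
) -> str:
    """Ask the new model to continue a conversation an earlier model started."""
    return model_fn(build_prefill(transcript, n_messages))
```

The reply returned by `stress_test` could then be passed to the same kind of sycophancy classifier used elsewhere in this post, so that old and new models are compared on identical conversation prefixes.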

Many things change across each new generation of model, which makes it difficult to identify the impact of any one change in model training. However, in both Opus 4.7 and Mythos Preview, we saw a lower level of sycophancy on relationship guidance as well as across all personal guidance domains (Figure 3).

Qualitatively, both Opus 4.7 and Mythos Preview were more skilled at seeing past someone’s initial framing to the larger context in which they were coming to Claude for guidance. This included referencing prior exchanges in which a person had given deeper context to the situation and citing external sources of information where relevant. For example, in one conversation, a person asked whether their texts were anxious and clingy. Claude Sonnet 4.6 flip-flopped after receiving pushback. Claude Opus 4.7 explained that while the texts themselves weren’t clingy, the user had self-described anxious thoughts throughout the conversation. Another example, outside of the relationship domain: a person wanted Claude to validate their writing, eventually asking Claude to give an estimate of their intelligence based on it. Claude Sonnet 4.6 gave an excessively flattering response, while Mythos Preview declined, explaining that it had insufficient information to make such a judgment.

Conclusion

We started with a high-level analysis of how people seek personal guidance from Claude and focused on understanding and addressing one specific model failure mode: sycophancy in relationship conversations. That investigation surfaced broader questions:

What is good AI guidance?

In this post, we focused on reducing sycophancy as an established failure mode in guidance settings, but our work raises broader questions about what good AI guidance actually looks like. Claude’s Constitution also emphasizes, for instance, that good guidance should be honest and preserve user autonomy. These concepts are more nuanced than sycophancy. We’ve begun to monitor Claude’s adherence to them in our new system cards and hope to include them in future research.

How do we make models safer in high-stakes settings?

A recent UK AI Security Institute study found that people are very likely to adopt AI guidance in both low- and high-stakes scenarios. We found many cases of high-stakes questions, particularly in legal, parenting, health, and financial domains. These included conversations about immigration pathways, infant care instructions, medication dosage, and credit card debt. Claude is not designed to provide medical guidance or professional care, and in these settings Claude appropriately acknowledges its limits and recommends human guidance. However, we also find people telling Claude they used AI precisely because they could not access or afford a professional. As a first step to understanding how to evaluate safety domain-by-domain, especially for people with no fallback, we plan to create evaluations in these high-stakes domains.

How does AI guidance fit in with people’s broader information diet?

We found that 22% of people mentioned that they had sought out other sources of help including family, friends, professionals, or digital resources. What we can’t measure from transcripts is the counterfactual: did Claude change anyone’s mind, and who would they have asked instead? These questions are central to knowing how much weight AI guidance actually carries in people’s decisions. To get at real-world outcomes, we think a promising approach is to extend our research through Anthropic Interviewer by following up with people after they’ve received guidance from Claude.

How people use AI for personal guidance and decisions is one of the most direct ways these systems affect people’s everyday lives. Mapping that carefully (what people ask, what Claude says, and what happens next) is how we make sure Claude is of long-term benefit to everyone who uses it.

Limitations

Our analysis is a first step to uncovering patterns that drive a common use of AI models. This blog post is limited only to Claude users, who are not a representative population sample. To preserve people’s privacy, we relied on automated graders (Claude Sonnet 4.5), which may miscategorize conversations (see Appendix). To reduce errors, we iterated on grader prompts and manually verified a small subset of grading results on feedback data where users gave us permission to review the conversation. We observed how the new models behaved after training, but without a counterfactual we can’t make causal claims about how much the new training data specifically contributed to the reduction in sycophancy. Additionally, our analysis is limited to chat transcripts, which limits our understanding of why people seek guidance from Claude and how they acted on it afterward. Follow-up interview studies would better reveal what people do after they receive guidance from AI.

Authors

Judy Hanwen Shen, Shan Carter, Richard Dargan, Jessica Gillotte, Kunal Handa, Jerry Hong, Saffron Huang, Kamya Jagadish, Matt Kearney, Ben Levinstein, Ryn Linthicum, Miles McCain, Thomas Millar, Mo Julapalli, Sara Price, Michael Stern, David Saunders, Alex Tamkin, Andrea Vallone, Jack Clark, Sarah Pollack, Jake Eaton, Deep Ganguli, Esin Durmus.

Appendix

Available here.

Footnotes

1. At the bottom of every response on claude.ai is an option to send feedback via a thumbs up or thumbs down button, which shares the conversation with Anthropic.