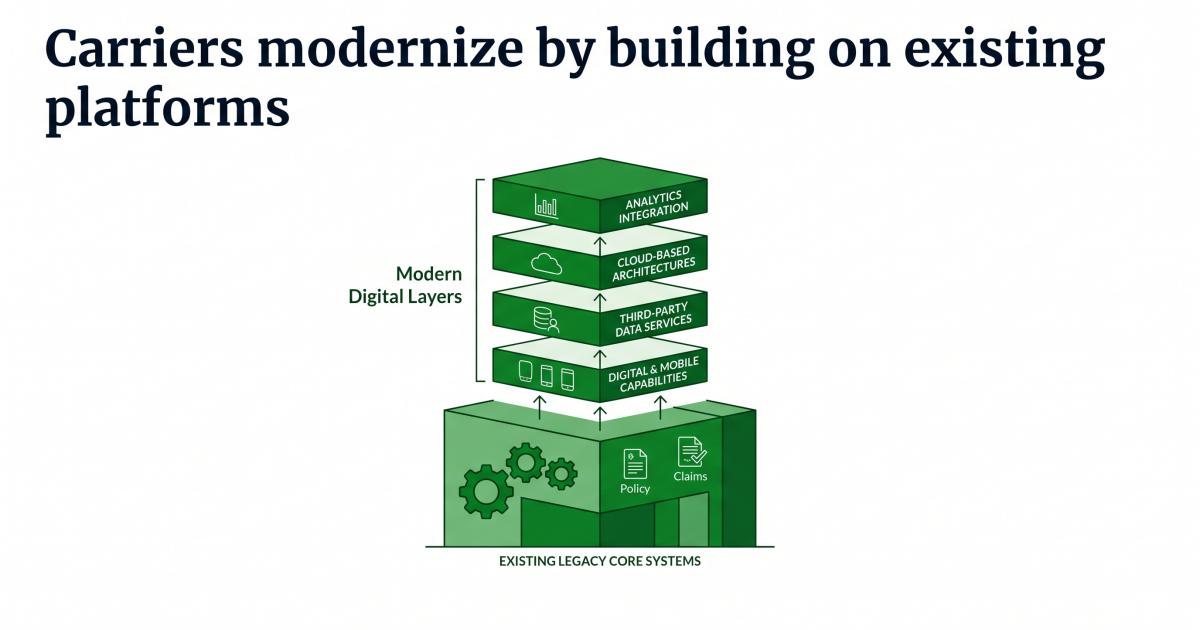

The pace of technological change and innovation within the property and casualty insurance industry has accelerated dramatically. Carriers are modernizing core systems, expanding digital and mobile capabilities, integrating third-party data services and analytics, and adopting cloud-based architectures at a scale that was nearly unthinkable even a decade ago. In reality, most modernization initiatives build on existing core platforms rather than starting from scratch. These platforms have often evolved over many years, accumulating layers of customization, architectural compromises, and complex dependencies.

Software delivery practices, the processes insurers use to design, build, test, release, and maintain technology changes and integrations, are evolving just as quickly. As release cycles shorten and systems become more interconnected, insurers are adopting more automated, collaborative, and continuous approaches to technology delivery and quality assurance.

Historically, quality assurance was viewed as a late-stage activity, something that happened toward the end of a project, often handled by dedicated quality assurance (QA) teams once development was complete. Today, delivery cycles are faster, integrations are more complex, and expectations for system stability are higher than ever.

In this environment, insurers are moving beyond traditional testing approaches toward a broader concept: end-to-end quality engineering. Rather than treating quality as a checkpoint, they’re building it into every stage of the software delivery lifecycle.

This shift combines early-stage practices such as AI-powered code review with lifecycle quality automation that continuously validates workflows and integrations. Together, these practices help insurers modernize faster while maintaining the consistency and reliability required in a highly regulated industry.

The evolution of QA in insurance technology

Traditionally, QA teams addressed this complexity through structured testing phases. Developers wrote code, QA teams executed test cases, and releases were approved only after extensive manual validation. While effective for slower release cycles, this approach has become increasingly difficult to scale in today’s technology environment.

For CIOs and CTOs, this shift reflects a broader leadership challenge: ensuring that modernization efforts can move faster without sacrificing reliability or operational stability. Today’s insurance platforms are highly configurable and deeply integrated with external data sources, analytics platforms, customer-facing interfaces, and ecosystem partners. Delivery teams now release updates incrementally rather than in large annual cycles, creating a continuous pace of change that requires a different approach to quality.

Early efforts to modernize QA introduced the concept of “shift-left” testing, moving quality concerns earlier into the development cycle so issues could be identified sooner. AI-powered code review tools have helped teams catch problems during development, improving code consistency and reducing rework later in the process.

But even with these early-stage improvements, insurers discovered something important: high-quality code alone doesn’t guarantee high-quality system behavior. Complex workflows, integrations, and business processes still require scalable validation across the delivery lifecycle. In large insurance platforms, many critical incidents are triggered not by isolated code defects, but by interactions between workflows, integrations, data flows, and configuration layers that evolve independently over time.

This realization has pushed the industry toward a broader quality engineering mindset, one that aligns quality with the continuous delivery models and modernization strategies that technology leaders are working to achieve.

Why insurers are rethinking quality

Several forces are pushing insurers toward a new approach to quality.

First, modernization initiatives demand faster delivery. Business leaders expect technology teams to release capabilities continuously rather than wait for large deployment cycles. Quality assurance can no longer be a bottleneck.

Second, insurers are under pressure to improve operational efficiency. Manual regression testing consumes significant resources and can slow the pace of innovation. Automation offers a path to scalability.

Third, leadership teams increasingly view technology performance as directly tied to business outcomes. Reliable releases reduce disruption, improve customer experience, and support growth initiatives.

Industry drivers

Insurance systems are growing more interconnected every year. Integrations with analytics platforms, third-party data providers, and digital channels create new dependencies that must be validated consistently.

At the same time, many insurers are adopting continuous delivery practices. This shift increases the frequency of change, making scalable validation essential. By scalable validation, we mean the ability to test and confirm software quality efficiently as systems, integrations, and release volume grow, without needing to proportionally increase manual effort.

The insurance industry is moving toward a clear conclusion: quality must be built continuously into the delivery process rather than treated as a separate, late-stage testing phase.

Modern quality engineering begins at the development stage. AI-powered code review tools help teams improve quality at the moment code is written by identifying potential issues, enforcing standards, and providing immediate feedback.

This early-stage focus supports QA practices by reducing the number of defects that reach downstream testing phases. Developers receive faster insights, reviewers spend less time on repetitive checks, and organizations maintain more consistent coding practices across teams. In practice, many development teams have found that AI-assisted review significantly reduces the time and resources required for manual code review, reduces errors caused by human oversight, and provides a fast, cost-efficient way to validate code before it progresses further in the lifecycle.

AI-powered code review enables teams to scale quality without proportionally increasing review effort, freeing experienced engineers to focus on higher-value architectural and design decisions.

Solutions like these also support governance by providing transparency into code quality and helping organizations standardize development practices.

Expanding quality beyond code

As insurers continue to modernize, they’re recognizing that quality can’t be ensured by testing activities alone. In complex insurance platforms, many of the most costly risks stem from accumulated technical debt, architectural drift, security gaps, and growing code complexity that remain invisible until they cause delivery slowdowns, upgrade failures, or production incidents.

Quality engineering, therefore, extends beyond individual code validation to continuous testing and system-level insight. Automated regression testing ensures that new updates don’t break existing functionality, while integration and workflow testing confirm that complex business processes continue to function as expected across interconnected systems.
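As a rough illustration of automated regression testing, the sketch below compares the current output of a rating step against results captured from a known-good release. Everything in it is hypothetical: the `Quote` fields, the `rate_quote` logic, and the baseline values are assumptions made for the example, not part of any real insurance platform.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Quote:
    """Hypothetical quote input; field names are illustrative only."""
    base_premium: float
    risk_factor: float
    discount_pct: float


def rate_quote(quote: Quote) -> float:
    """Hypothetical rating logic standing in for a real workflow step."""
    premium = quote.base_premium * quote.risk_factor
    return round(premium * (1 - quote.discount_pct / 100), 2)


# Baseline results captured from a known-good release (illustrative values).
BASELINE = {
    Quote(1000.0, 1.2, 10.0): 1080.0,
    Quote(500.0, 1.0, 0.0): 500.0,
    Quote(800.0, 1.5, 25.0): 900.0,
}


def find_regressions(rate_fn, baseline):
    """Return (quote, expected, actual) for every case that no longer matches."""
    regressions = []
    for quote, expected in baseline.items():
        actual = rate_fn(quote)
        # Small tolerance so floating-point noise is not reported as a defect.
        if abs(actual - expected) > 0.005:
            regressions.append((quote, expected, actual))
    return regressions


if __name__ == "__main__":
    failures = find_regressions(rate_quote, BASELINE)
    print(f"{len(failures)} regression(s) found")
```

Because the baseline is plain data, new cases can be added without writing new test code, which is one way validation effort can grow more slowly than release volume.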

At the same time, organizations increasingly need continuous visibility into the structural health of their platforms, including code quality trends, security exposure, architectural consistency, and maintainability risks across the delivery lifecycle.

Building continuous quality across the lifecycle

- Quality begins with better code.

- Validation extends through testing and integration.

- Delivery becomes predictable and scalable.

When combined, early-stage AI-powered code review and lifecycle quality assurance automation create a continuous quality model.

This model allows insurers to align technology delivery with business expectations without sacrificing reliability.

It also creates stronger collaboration between engineering and QA functions. Instead of working in separate phases, development and testing work together as part of a unified quality strategy.

For technology leaders, this shift changes how success is measured. Metrics move beyond testing completion rates toward outcomes such as reduced defects, improved release consistency, and faster delivery cycles.

Measuring success in quality engineering

Organizations adopting end-to-end quality engineering typically focus on measurable outcomes such as:

- Reduced regression testing time

- Lower defect rates after release

- Increased release frequency with stable performance

- Better visibility into quality trends across teams

- Improved productivity through automation

Insurers should measure and track these metrics on an ongoing basis, using them to evaluate delivery performance, identify improvement opportunities, and ensure that modernization efforts are producing consistent, reliable results. These outcomes translate directly into business value by reducing operational friction, improving release confidence, and supporting more predictable technology delivery.
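One possible way to track a few of these outcomes is sketched below. The `Release` record and its fields are assumptions made for illustration. In practice the data would come from whatever release and defect tracking tools a team already uses.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Release:
    """Hypothetical release record; fields are illustrative assumptions."""
    released_on: date
    regression_hours: float  # time spent on regression testing
    escaped_defects: int     # defects found after the release shipped


def summarize(releases):
    """Compute simple delivery metrics over a list of releases."""
    if not releases:
        raise ValueError("at least one release is required")
    ordered = sorted(releases, key=lambda r: r.released_on)
    count = len(ordered)
    span_days = (ordered[-1].released_on - ordered[0].released_on).days
    return {
        "releases": count,
        "avg_regression_hours": sum(r.regression_hours for r in ordered) / count,
        "escaped_defects_per_release": sum(r.escaped_defects for r in ordered) / count,
        # Average gap between releases; None when there is only one release.
        "avg_days_between_releases": span_days / (count - 1) if count > 1 else None,
    }


if __name__ == "__main__":
    history = [
        Release(date(2024, 1, 1), 40.0, 3),
        Release(date(2024, 1, 15), 30.0, 1),
        Release(date(2024, 1, 29), 20.0, 2),
    ]
    for name, value in summarize(history).items():
        print(f"{name}: {value}")
```

Comparing these summaries across successive periods, rather than reading any single value in isolation, is what shows whether regression effort and post-release defects are actually trending down as release frequency rises.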

Importantly, quality engineering also supports better governance. Automated processes create consistency and traceability, which can be especially valuable in regulated industries like insurance.

As insurers modernize their products and operations, delivery capability is becoming a key differentiator. Technology teams that can deliver innovation reliably and quickly gain an advantage by enabling faster product launches, better customer experiences, and more agile responses to market change. End-to-end quality engineering makes this possible by ensuring that speed doesn’t come at the expense of reliability. Increasingly, forward-looking insurers view quality engineering as a strategic capability, one that supports continuous improvement, scalable delivery, and long-term competitiveness in a complex industry like insurance.