A recent study by cybersecurity firm Lakera reveals that AI coding assistants like Claude Code are inadvertently hoarding and leaking sensitive API keys through public package releases. While these tools accelerate the software development lifecycle, they also introduce hidden vulnerabilities into the automated software supply chain.

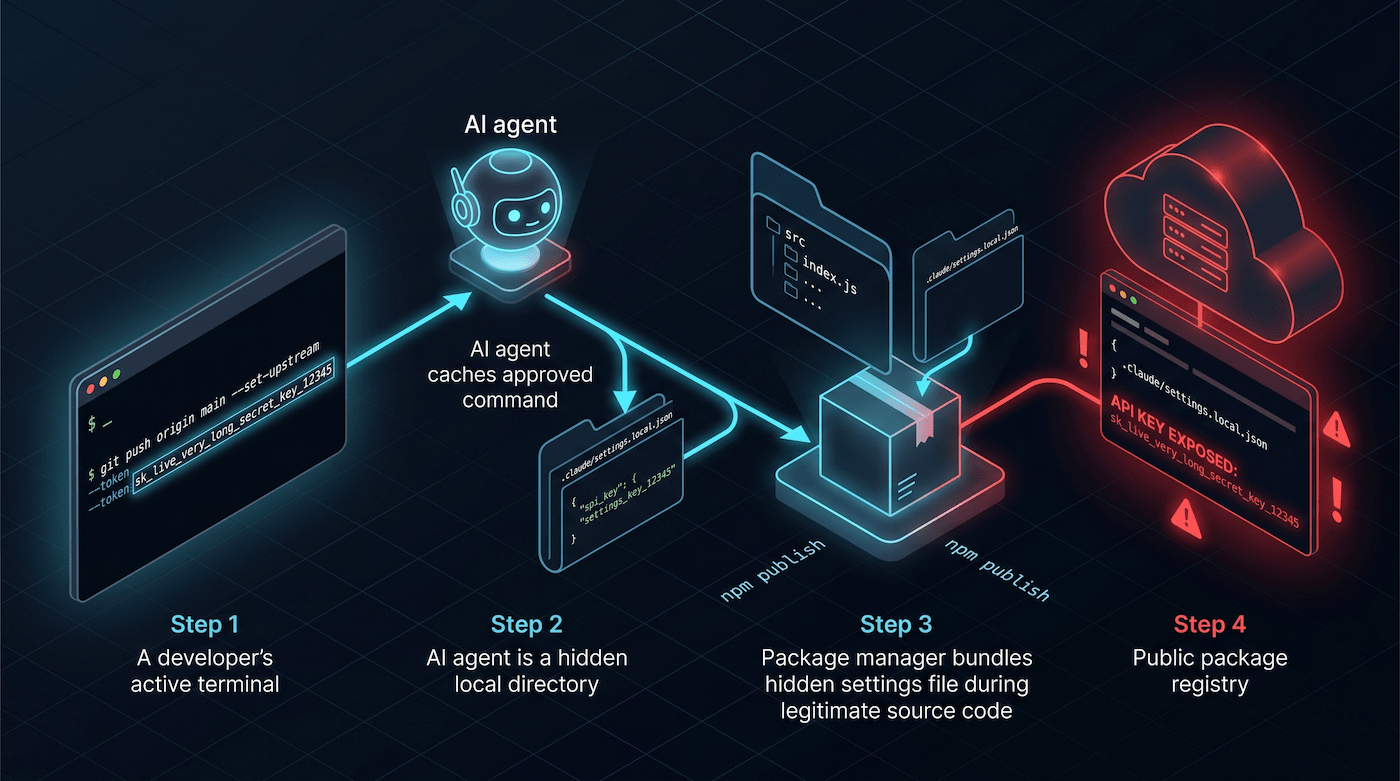

Claude Code caches approved terminal commands in a hidden local file. When a developer selects an “allow always” option to bypass repetitive prompts, any credentials passed within that command become permanently stored on the local machine. If the developer publishes the project to a public registry without explicitly ignoring this hidden directory, those stored API keys ship globally alongside the source code.

Industry experts emphasize the novelty and scale of this risk as AI agents move deeper into developer workflows. This means AI tool companies must adapt their tools to this new reality. At the same time, developers must take measures to avoid exposing their software libraries to the threats posed by AI coding tools.

“AI tooling is evolving at breakneck speed, and in many ways, this is the most software we’ve ever seen created and deployed without mature secure defaults both in the generated code itself and in the surrounding developer environment,” Steve Guiguere, Principal AI Security Advocate at Check Point Software, told TechTalks.

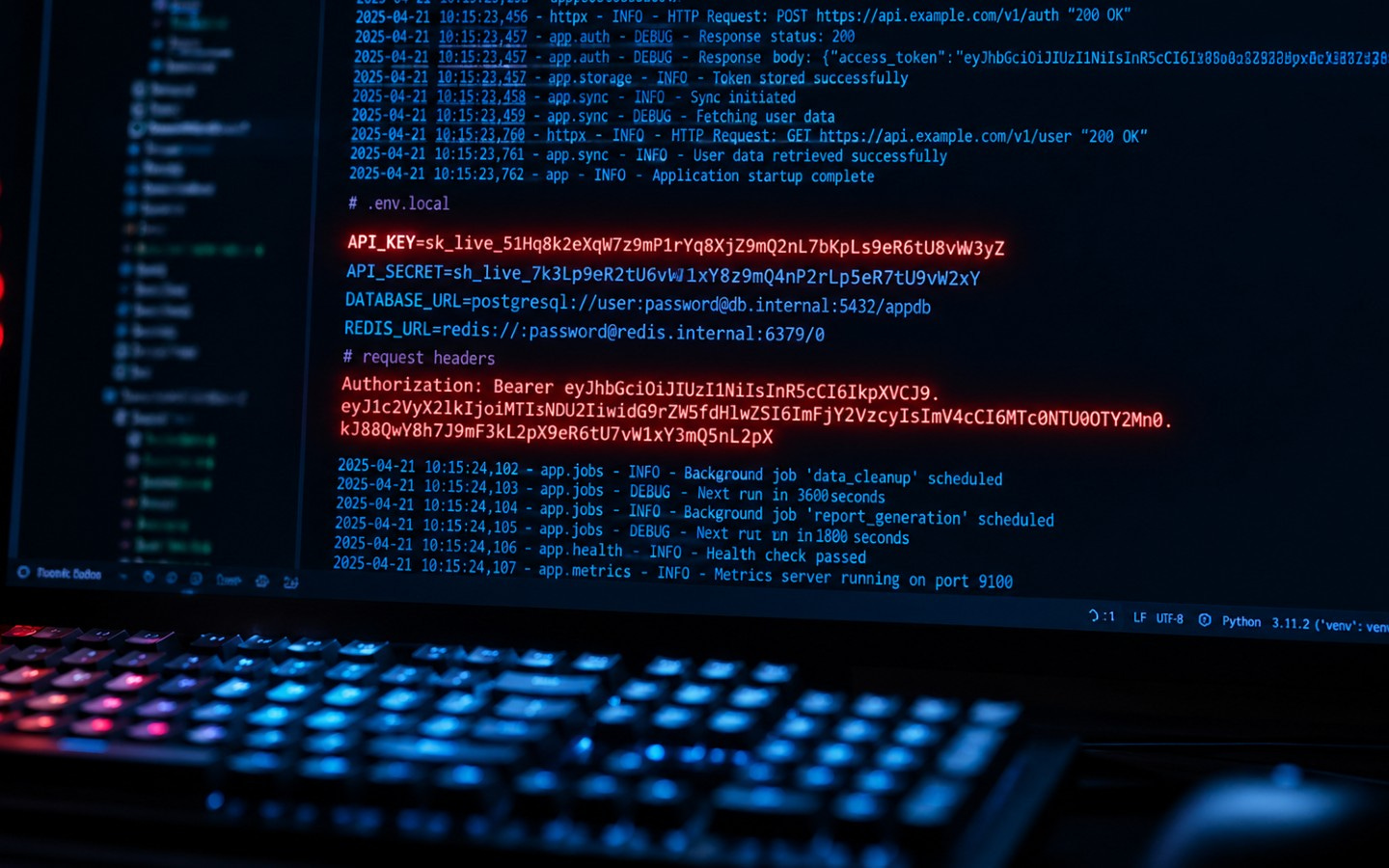

Claude Code operates using a strict permission system for shell commands. When the assistant attempts to run a command it has not executed before, it presents the developer with authorization options. Selecting “allow always” writes the exact command string to a hidden file located at “.claude/settings.local.json” within the root of the project directory.

Developers routinely execute authenticated API calls, run deployment scripts, or log into cloud services directly from the terminal. If an environment variable or API key is prepended to one of these commands, the AI agent logs it as a permanent allowlist entry. The agent is functioning exactly as designed, remembering state to reduce friction. But in doing so, it creates a static record of sensitive data.
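To make the risk concrete, here is a minimal Python sketch of a local audit that looks for credential-like text inside a settings file. It walks every string in the JSON document rather than assuming a particular layout, and the two regular expressions are illustrative heuristics, not a complete secret-detection rule set. The function names are hypothetical.

```python
import json
import re

# Heuristic patterns only; dedicated secret scanners use far larger rule sets.
SECRET_PATTERNS = [
    # NAME=value assignments whose name suggests a credential
    re.compile(
        r"\b[A-Za-z0-9_]*(?:KEY|TOKEN|SECRET|PASSWORD)[A-Za-z0-9_]*=\S+",
        re.IGNORECASE,
    ),
    # Authorization headers carrying a bearer token
    re.compile(r"Bearer\s+[A-Za-z0-9._~+/=_-]{8,}"),
]


def iter_strings(node):
    """Yield every string value found anywhere in a parsed JSON document."""
    if isinstance(node, str):
        yield node
    elif isinstance(node, dict):
        for value in node.values():
            yield from iter_strings(value)
    elif isinstance(node, list):
        for item in node:
            yield from iter_strings(item)


def find_suspect_entries(settings_text):
    """Return the strings in a settings document that match a secret pattern."""
    data = json.loads(settings_text)
    return [
        text
        for text in iter_strings(data)
        if any(pattern.search(text) for pattern in SECRET_PATTERNS)
    ]
```

Passing the contents of “.claude/settings.local.json” to `find_suspect_entries` returns the entries worth reviewing by hand. Any hit should be treated as a prompt to rotate the credential, since a heuristic match cannot tell whether the key is still live.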

The exposure occurs during the package publishing phase. Package managers like npm build distribution archives directly from the contents of the project directory. The “.claude/” folder acts similarly to a “.env” file, signaling that it contains personal, environment-specific data. However, it lacks the widespread ecosystem awareness that usually prevents environment files from shipping. Build tools exclude files via “.npmignore” or the “files” field in “package.json,” but neither mechanism excludes the “.claude/” directory by default.

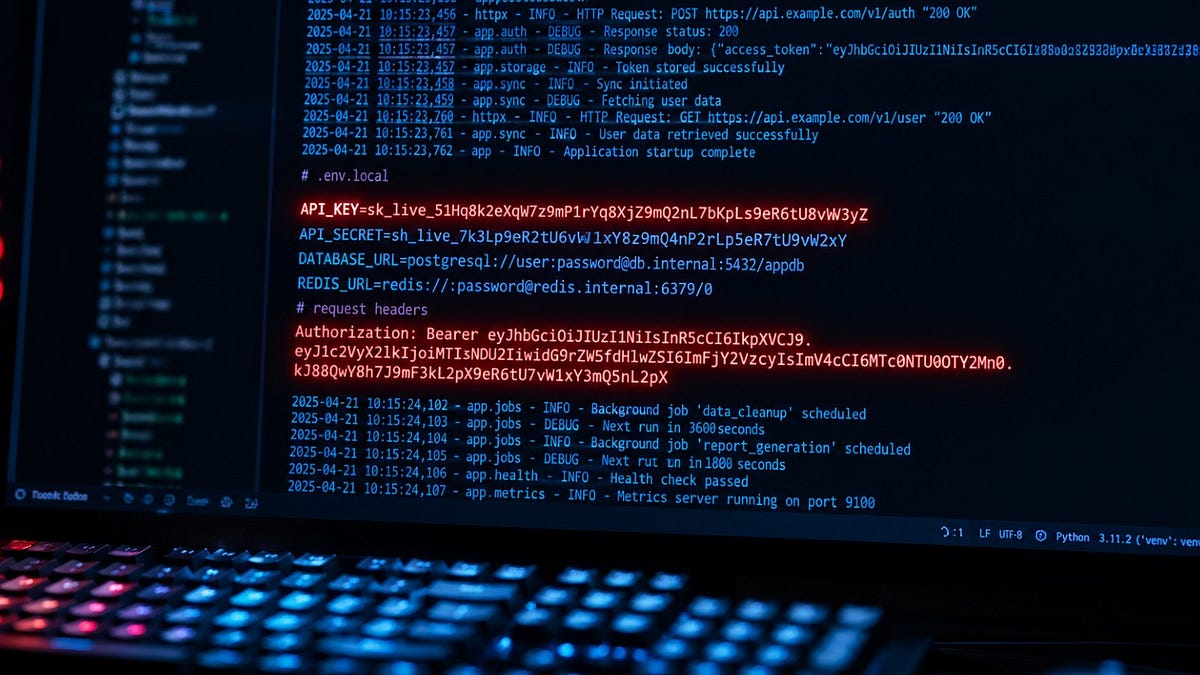

To measure the impact, Lakera built a service monitoring the npm registry’s changes feed. Across a scan window of roughly 46,500 packages, the firm identified 428 packages containing a “.claude/settings.local.json” file. Of those, 33 files across 30 packages contained live credentials. Roughly one in 13 of the shipped settings files exposed sensitive data to the public.

Traditional automated safeguards frequently miss these exposures. Existing secret scanning tools like GitHub Advanced Security are highly effective at finding known credential patterns within source code and version control histories. “This case is different because the credentials are embedded inside an AI tool’s local settings file as part of approved shell command strings,” Guiguere said. AI assistants have created entirely new locations where secrets quietly accumulate outside the view of established security workflows.

The underlying vulnerability affects any ecosystem that packages files from a project directory. Build tools for Python source distributions (PyPI), RubyGems, and Maven all select files and publish archives based on directory contents, carrying the same exposure risk if the hidden “.claude/” directory is visible to the packaging process, according to Lakera.

While widespread, automated exploitation of these specific files is not yet public, proactive research proves the potential exists. “If researchers can identify a repeatable way to discover exposed credentials in public registries, we have to assume adversaries can do the same, and likely will,” Guiguere warned. “In security, the right assumption is often that once a weakness is practical and economically interesting, it will eventually be operationalized.”

Developers can immediately mitigate this risk by manually adding the “.claude/” directory to their “.npmignore” and “.gitignore” files. Additionally, package managers offer preview mechanisms that allow developers to inspect an archive before it goes live. Running commands like “npm pack --dry-run” or using equivalent artifact inspection tools in other languages helps confirm that hidden AI state files are excluded from the final release.
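The preview step can also be checked by a script instead of by eye. The sketch below assumes the output of `npm pack --dry-run --json` is a JSON array of package objects, each with a "files" list whose items carry a "path" key. That shape should be verified against the npm version in use. The function name and the `blocked_dirs` parameter are hypothetical.

```python
import json


def flagged_paths(pack_json_text, blocked_dirs=(".claude",)):
    """Return packaged file paths that sit under any blocked directory.

    pack_json_text is the text printed by `npm pack --dry-run --json`,
    assumed to be a JSON array of objects that each hold a "files" list.
    """
    report = json.loads(pack_json_text)
    hits = []
    for package in report:
        for entry in package.get("files", []):
            path = entry.get("path", "")
            # Compare whole path components so that a file such as
            # "docs/.claude-notes.md" is not flagged by accident.
            if any(part in blocked_dirs for part in path.split("/")):
                hits.append(path)
    return hits
```

An empty list means no file under “.claude/” would ship. A non-empty list names the files to exclude before publishing.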

For creators of AI tools, automatically generating or updating these ignore files during the tool’s initialization would act as a strong secure-by-default mechanism. Despite the logic of this approach, the industry is trending toward a cloud-style shared responsibility model, according to Guiguere.

“The platform might be securable, but not necessarily secure by default,” he said. “Providers will offer mechanisms and guidance, while developers and enterprises remain responsible for configuring and enforcing protections around these tools.”

This is a pattern we’ve seen in other security incidents, such as a recently reported vulnerability in Model Context Protocol (MCP) that exposed computers to remote code execution (RCE) attacks. The maintainers of the protocol and MCP tools said this was a feature and that developers are responsible for preventing such incidents. Striking the right balance between flexibility and security remains an unsolved problem in AI tools, and for the time being, developers must be extra careful.

But relying on individual developers to remember manual file updates scales poorly across large organizations. Enterprises require automated preventative guardrails built directly into their software delivery pipelines. Platform engineering teams should implement policy checks that automatically fail builds if “.claude/” or similar agent directories appear in publishable artifacts.
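A policy check of this kind could look roughly like the following Python sketch, which inspects a built tarball (for example, the `.tgz` produced by `npm pack`) and reports a failing status if any member sits under an agent directory. Only “.claude” comes from the reporting above. The `AGENT_DIRS` set, the function names, and the exit-code convention are assumptions to adapt to a given pipeline.

```python
import sys
import tarfile
from pathlib import PurePosixPath

# ".claude" comes from the article; add other agent directories as needed.
AGENT_DIRS = {".claude"}


def agent_files_in_archive(archive_path, agent_dirs=AGENT_DIRS):
    """List archive members with an agent directory among their path components.

    agent_dirs must be a set of directory names.
    """
    with tarfile.open(archive_path, "r:*") as archive:
        return [
            name
            for name in archive.getnames()
            if agent_dirs.intersection(PurePosixPath(name).parts)
        ]


def main(argv):
    """Return 1 if any archive named in argv[1:] contains agent state, else 0."""
    failed = False
    for archive_path in argv[1:]:
        for name in agent_files_in_archive(archive_path):
            print(
                f"{archive_path}: agent state file would be published: {name}",
                file=sys.stderr,
            )
            failed = True
    return 1 if failed else 0
```

In a pipeline, a small wrapper script would call `sys.exit(main(sys.argv))` after the packaging step, so that a non-zero status stops the publish job. Checking the final artifact rather than the ignore files catches the problem regardless of which exclusion mechanism was misconfigured.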

The presence of local AI agents also introduces a new endpoint security dimension. Because tools like Claude Code run locally, they create risk on the developer workstation long before code ever reaches a registry. Enterprises need endpoint controls capable of auditing development directories where sensitive agent state accumulates, shifting the burden of security from individual developer hygiene to managed enterprise controls.

The integration of always-watching AI agents forces a fundamental shift in how the industry views command-line hygiene. Historically, passing an API key directly into a local terminal command via a tool like curl was relatively safe because local bash histories are rarely packaged and published.

AI coding assistants disrupt that model. “If an AI agent is watching and recording operational context, developers need to stop thinking of the terminal as purely ephemeral,” Guiguere said. “AI coding assistants change that model because they observe, approve, remember, and sometimes persist commands as part of their operating model.”

To harden systems against inadvertent credential hoarding, engineers must recognize that an AI agent running on a desktop is an application runtime. It requires the same architectural discipline expected of cloud infrastructure. Future security models will require sandboxing agents, mounting only the specific directories they need to function, and strictly enforcing the principle of least privilege.

Where secrets are required for complex workflows, they should be retrieved dynamically from managed secret stores or secret managers using scoped, short-lived access, rather than relying on hard-coded credentials embedded in repeatable command-line approvals.
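One way to keep credentials out of approved command strings is to have the script resolve them at runtime. The following is a minimal sketch, not a secret-manager integration: it assumes a hypothetical environment variable, `DEPLOY_API_TOKEN`, that a secret store or CI system populates with a scoped, short-lived token before the script starts.

```python
import os


class MissingSecretError(RuntimeError):
    """Raised when the expected credential is absent from the environment."""


def auth_header(env=None, var_name="DEPLOY_API_TOKEN"):
    """Build an Authorization header from a token supplied at runtime.

    var_name is a hypothetical variable assumed to be injected by a
    secret manager; it is never typed into the command line.
    """
    env = os.environ if env is None else env
    token = env.get(var_name, "").strip()
    if not token:
        raise MissingSecretError(
            f"{var_name} is not set; fetch it from the secret store first"
        )
    return {"Authorization": f"Bearer {token}"}
```

With this pattern, the command an agent is asked to approve is something like `python deploy.py`, which carries no credential. The benefit disappears if the variable is set inline on the same command, because the assignment then becomes part of the string that gets stored.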