Artificial intelligence (AI) is reshaping industries from healthcare to finance. A key driver of this progress is the neural network, a family of models that lets machines learn from data and solve complex problems. This beginner’s guide introduces neural networks, covering their core concepts, main types, and applications.

Introduction to Neural Networks

What are Neural Networks?

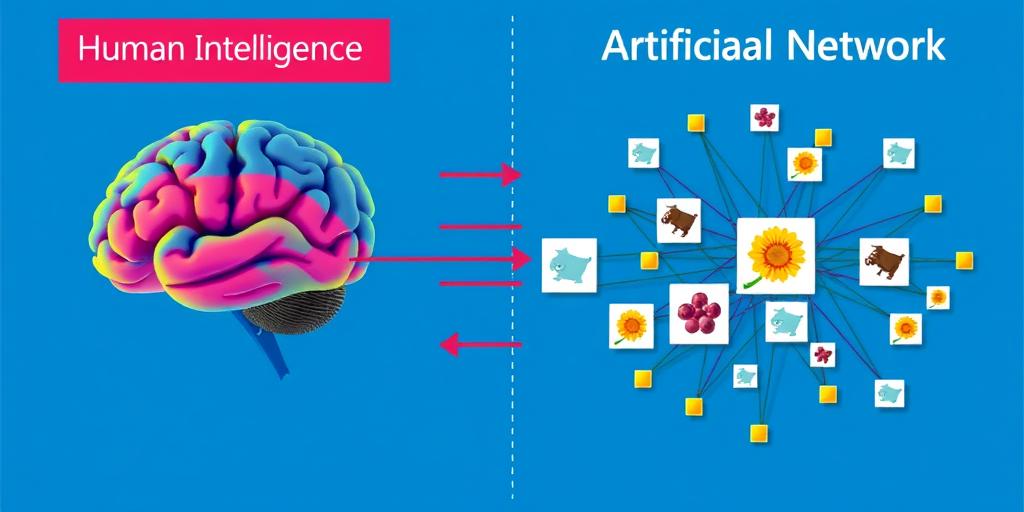

Neural networks are a class of machine learning models loosely inspired by the structure and function of the human brain. They consist of interconnected nodes, called neurons, arranged in layers. Each connection between neurons has an associated weight that represents the strength of that connection. These weights are adjusted during training, allowing the network to adapt and improve its performance.
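To make the idea of weighted connections concrete, here is a minimal sketch of a single artificial neuron in plain Python. The input values, weights, bias term, and the choice of a sigmoid are illustrative assumptions, not something prescribed by this guide; the function name `neuron_output` is hypothetical.

```python
import math

def neuron_output(inputs, weights, bias):
    """Multiply each input by its weight, add a bias, then squash with a sigmoid."""
    z = sum(x * w for x, w in zip(inputs, weights)) + bias
    return 1.0 / (1.0 + math.exp(-z))

# Illustrative, untrained values (assumptions for this example).
inputs = [0.5, -1.0, 2.0]
weights = [0.8, 0.2, -0.5]
bias = 0.1

print(neuron_output(inputs, weights, bias))  # about 0.33
```

Changing any weight changes how strongly the matching input influences the output, which is what training adjusts.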

The Inspiration Behind Neural Networks

The concept of neural networks stems from the desire to mimic the remarkable ability of the human brain to learn and adapt. Just as neurons in the brain communicate with each other through electrical and chemical signals, artificial neurons in a neural network process and transmit information through weighted connections.

Key Components of a Neural Network

A typical neural network comprises several key components:

- Input Layer: This layer receives the raw data that the network will process.

- Hidden Layers: These layers perform intermediate calculations, extracting complex features from the input data.

- Output Layer: This layer produces the final output of the network, based on the processed information.

- Weights: These values represent the strength of the connections between neurons.

- Activation Functions: These functions determine the output of a neuron from the weighted sum of its inputs.

Types of Neural Networks

Neural networks come in various architectures, each suited to different tasks. Let’s look at some of the most common types:

Feedforward Neural Networks

Feedforward networks are the simplest type of neural network: information flows in one direction, from the input layer through any hidden layers to the output layer, without cycles or loops. They are often used for classification and regression tasks, such as image classification or predicting stock prices.
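The one-directional flow can be sketched as a forward pass through two fully connected layers. This is a minimal illustration in plain Python; the layer sizes, the hand-picked weights, and the helper names (`dense`, `feedforward`) are assumptions made for the example.

```python
def relu(z):
    return max(0.0, z)

def identity(z):
    return z

def dense(inputs, weights, biases, activation):
    """One fully connected layer: each row of `weights` feeds one neuron."""
    return [
        activation(sum(x * w for x, w in zip(inputs, row)) + b)
        for row, b in zip(weights, biases)
    ]

# Hand-picked, untrained parameters: 2 inputs -> 3 hidden neurons -> 1 output.
HIDDEN_W = [[1.0, -1.0], [0.5, 0.5], [-1.0, 2.0]]
HIDDEN_B = [0.0, 0.0, 0.0]
OUTPUT_W = [[1.0, 1.0, 1.0]]
OUTPUT_B = [0.0]

def feedforward(x):
    hidden = dense(x, HIDDEN_W, HIDDEN_B, relu)             # input -> hidden
    return dense(hidden, OUTPUT_W, OUTPUT_B, identity)      # hidden -> output

print(feedforward([2.0, 1.0]))  # [2.5]
```

Data only ever moves forward here: the output of one layer is the input of the next, and nothing is fed back.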

Convolutional Neural Networks (CNNs)

CNNs are particularly well-suited for processing image and video data. They employ convolutional filters to extract features from the input data, such as edges, shapes, and textures. CNNs have achieved remarkable success in tasks like image recognition, object detection, and medical imaging analysis.
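As a rough illustration of what a convolutional filter does, the sketch below slides a small kernel over a tiny grayscale "image" and records how strongly each patch matches it. The image, the kernel values, and the function name `conv2d` are assumptions for this example; in a real CNN the kernel values are learned during training rather than written by hand.

```python
def conv2d(image, kernel):
    """Slide `kernel` over `image` (no padding, stride 1) and sum the products."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[i + di][j + dj] * kernel[di][dj]
                for di in range(kh) for dj in range(kw))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]

# A 4x4 image that is dark on the left and bright on the right.
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]
# A hand-written kernel that responds to dark-to-bright vertical edges.
vertical_edge = [
    [-1, 1],
    [-1, 1],
]

feature_map = conv2d(image, vertical_edge)
print(feature_map)  # [[0, 2, 0], [0, 2, 0], [0, 2, 0]]
```

The feature map is non-zero only in the middle column, which is where the edge sits in the image.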

Recurrent Neural Networks (RNNs)

RNNs are designed to handle sequential data, such as text, speech, or time series. They have feedback loops that carry a hidden state from one step to the next, allowing them to process information over time and retain a memory of earlier inputs. RNNs are widely used in natural language processing tasks like machine translation, sentiment analysis, and speech recognition.
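The feedback loop can be sketched with a single-number hidden state that is updated at every step of a sequence. This is a simplified scalar version with made-up weights (`w_x`, `w_h`, `b` are assumptions); practical RNNs use vectors and learned weight matrices.

```python
import math

def rnn_step(x, h_prev, w_x, w_h, b):
    """Combine the current input with the previous hidden state."""
    return math.tanh(w_x * x + w_h * h_prev + b)

def run_rnn(sequence, w_x=0.5, w_h=0.8, b=0.0):
    h = 0.0            # the hidden state starts empty
    states = []
    for x in sequence:
        h = rnn_step(x, h, w_x, w_h, b)   # h feeds back into the next step
        states.append(h)
    return states

# Only the first input is non-zero, yet later states are still non-zero:
# the network "remembers" the first input through its hidden state.
states = run_rnn([1.0, 0.0, 0.0])
print(states)
```

Each later state is smaller than the one before it, showing how the memory of the first input fades over time in this simple setup.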

Long Short-Term Memory (LSTM) Networks

LSTMs are a type of RNN designed to mitigate the vanishing gradient problem, in which the training signal shrinks as it is propagated back through many time steps, making it hard for traditional RNNs to learn long-range patterns. They use gates that control what information is stored, discarded, and output, allowing them to learn long-term dependencies in data. LSTMs are highly effective in tasks involving complex sequences, such as machine translation, text summarization, and time series forecasting.
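The gating idea can be sketched as one step of a scalar LSTM cell using the standard forget, input, and output gates. The parameter values below are hand-picked assumptions chosen to show a cell that holds on to its stored value; the names `lstm_step` and `params` are hypothetical, and real LSTMs work on vectors with learned weights.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def lstm_step(x, h_prev, c_prev, p):
    """One step of a scalar LSTM cell. `p` maps a gate name to (w_x, w_h, b)."""
    def pre(name):
        w_x, w_h, b = p[name]
        return w_x * x + w_h * h_prev + b

    f = sigmoid(pre("forget"))        # how much of the old cell state to keep
    i = sigmoid(pre("input"))         # how much new information to write
    o = sigmoid(pre("output"))        # how much of the cell state to expose
    g = math.tanh(pre("candidate"))   # the new information itself
    c = f * c_prev + i * g            # updated cell state (long-term memory)
    h = o * math.tanh(c)              # updated hidden state (output)
    return h, c

# Assumed parameters: forget gate almost fully open, input gate almost closed.
params = {
    "forget": (0.0, 0.0, 10.0),
    "input": (0.0, 0.0, -10.0),
    "output": (0.0, 0.0, 0.0),
    "candidate": (1.0, 0.0, 0.0),
}

h, c = lstm_step(x=1.0, h_prev=0.0, c_prev=2.0, p=params)
print(h, c)  # c stays very close to the previous cell state of 2.0
```

Because the cell state is carried forward mostly by multiplication with the forget gate, a gate value near 1 lets information survive across many steps.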

How Neural Networks Learn

Training Data and Backpropagation

Neural networks learn by adjusting their weights based on a set of labeled training data. Training involves feeding the network input data and comparing its output to the expected output. Backpropagation then uses the difference between the predicted and expected output to calculate how much each weight contributed to the error, and the weights are updated accordingly.
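Here is a minimal sketch of that training loop for a deliberately tiny network: one input, one hidden neuron with a sigmoid, and one output weight. The squared-error loss, the starting weights, the learning rate, and the single training example are all assumptions chosen to keep the chain-rule steps visible.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def forward(x, w1, w2):
    h = sigmoid(w1 * x)      # hidden activation
    y_hat = w2 * h           # network output
    return h, y_hat

def loss(x, y, w1, w2):
    return (forward(x, w1, w2)[1] - y) ** 2

def backward(x, y, w1, w2):
    """Gradients of the squared error with respect to w1 and w2 (chain rule)."""
    h, y_hat = forward(x, w1, w2)
    d_yhat = 2.0 * (y_hat - y)          # dL/dy_hat
    d_w2 = d_yhat * h                   # dL/dw2
    d_h = d_yhat * w2                   # error passed back to the hidden neuron
    d_w1 = d_h * h * (1.0 - h) * x      # dL/dw1, using sigmoid'(z) = h * (1 - h)
    return d_w1, d_w2

def train(x, y, w1=0.5, w2=0.5, lr=0.1, steps=200):
    for _ in range(steps):
        d_w1, d_w2 = backward(x, y, w1, w2)
        w1 -= lr * d_w1                 # move each weight against its gradient
        w2 -= lr * d_w2
    return w1, w2

w1, w2 = train(x=1.0, y=1.0)
print(loss(1.0, 1.0, 0.5, 0.5), "->", loss(1.0, 1.0, w1, w2))
```

The error is computed at the output and passed backwards layer by layer, which is where the name backpropagation comes from.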

Activation Functions

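Activation functions are mathematical functions that introduce non-linearity into the network. Without them, a stack of layers would collapse into a single linear transformation, no matter how many layers it had. Each activation function determines the output of a neuron from the weighted sum of its inputs. Common activation functions include sigmoid, ReLU, and tanh.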
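The three functions named above are short enough to write out directly. This is a plain-Python sketch for illustration; the sample inputs are arbitrary, and the sigmoid here is not hardened against very large negative inputs.

```python
import math

def sigmoid(z):
    """Squashes any input into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def relu(z):
    """Passes positive inputs through unchanged and clips negatives to 0."""
    return max(0.0, z)

def tanh(z):
    """Squashes any input into the range (-1, 1), centered on 0."""
    return math.tanh(z)

for z in (-2.0, 0.0, 2.0):
    print(z, sigmoid(z), relu(z), tanh(z))
```

The main practical differences are the output ranges and how each function treats negative inputs.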

Loss Functions and Optimization

Loss functions quantify the error between the predicted output and the expected output. The goal of training is to minimize the loss, which is done with optimization algorithms such as gradient descent. Gradient descent repeatedly nudges each weight in the direction that reduces the loss, improving the network’s accuracy.
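As a small worked example, the sketch below uses mean squared error as the loss and plain gradient descent to fit a straight line. The data set, learning rate, step count, and the function names `mse` and `gradient_step` are assumptions for illustration; a linear model stands in for a full network so that the gradients stay easy to read.

```python
def mse(w, b, xs, ys):
    """Mean squared error between predictions w * x + b and targets."""
    return sum((w * x + b - y) ** 2 for x, y in zip(xs, ys)) / len(xs)

def gradient_step(w, b, xs, ys, lr):
    """One gradient descent update of w and b on the MSE loss."""
    n = len(xs)
    grad_w = sum(2.0 * (w * x + b - y) * x for x, y in zip(xs, ys)) / n
    grad_b = sum(2.0 * (w * x + b - y) for x, y in zip(xs, ys)) / n
    return w - lr * grad_w, b - lr * grad_b

# Toy data generated from y = 2x + 1.
xs = [0.0, 1.0, 2.0, 3.0]
ys = [1.0, 3.0, 5.0, 7.0]

w, b = 0.0, 0.0
for _ in range(2000):
    w, b = gradient_step(w, b, xs, ys, lr=0.05)

print(w, b, mse(w, b, xs, ys))  # w approaches 2, b approaches 1, loss approaches 0
```

Each step moves the parameters a small distance against the gradient, so the loss shrinks until the line matches the data.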

Applications of Neural Networks in AI

Neural networks have advanced many fields within AI, enabling machines to perform tasks that were long thought to require human intelligence.

Image Recognition and Computer Vision

Neural networks, particularly CNNs, have achieved impressive results in image recognition tasks. They can identify objects, faces, and scenes in images with high accuracy. Applications include self-driving cars, medical imaging, and security systems.

Natural Language Processing (NLP)

RNNs and LSTMs have made significant strides in NLP, allowing machines to understand and process human language. Applications include machine translation, text summarization, sentiment analysis, and chatbot development.

Machine Translation

Neural machine translation systems, powered by RNNs and LSTMs, have significantly improved the quality of machine-generated translations. These systems can translate between multiple languages with greater accuracy and fluency than earlier approaches.

Robotics and Autonomous Systems

Neural networks play a crucial role in the development of robots and autonomous systems. They enable robots to learn from their environment, adapt to changing conditions, and make decisions based on sensory input. Applications include industrial automation, healthcare robotics, and self-driving vehicles.

The Future of Neural Networks

The field of neural networks is constantly evolving, with ongoing research and development pushing the boundaries of what machines can achieve.

Advancements in Deep Learning

Deep learning, a subfield of machine learning that focuses on building and training deep neural networks, is rapidly advancing. Researchers are exploring new architectures, training techniques, and applications, leading to breakthroughs in various areas, including natural language understanding, image generation, and drug discovery.

Ethical Considerations in AI

As AI becomes more sophisticated, it raises ethical concerns about bias, fairness, and transparency. Researchers and developers are working to address these issues, ensuring that AI systems are developed and deployed responsibly.

The Potential Impact on Society

Neural networks have the potential to reshape society in profound ways. They can automate tasks, create new industries, and improve quality of life. However, it’s crucial to consider the potential impact on jobs, privacy, and societal norms as AI continues to evolve.

Neural networks are a powerful tool that is driving innovation across various fields. Understanding the fundamentals of neural networks is essential for anyone interested in AI, machine learning, or the future of technology. By exploring the concepts, types, and applications of neural networks, we can gain insights into the transformative potential of this technology.