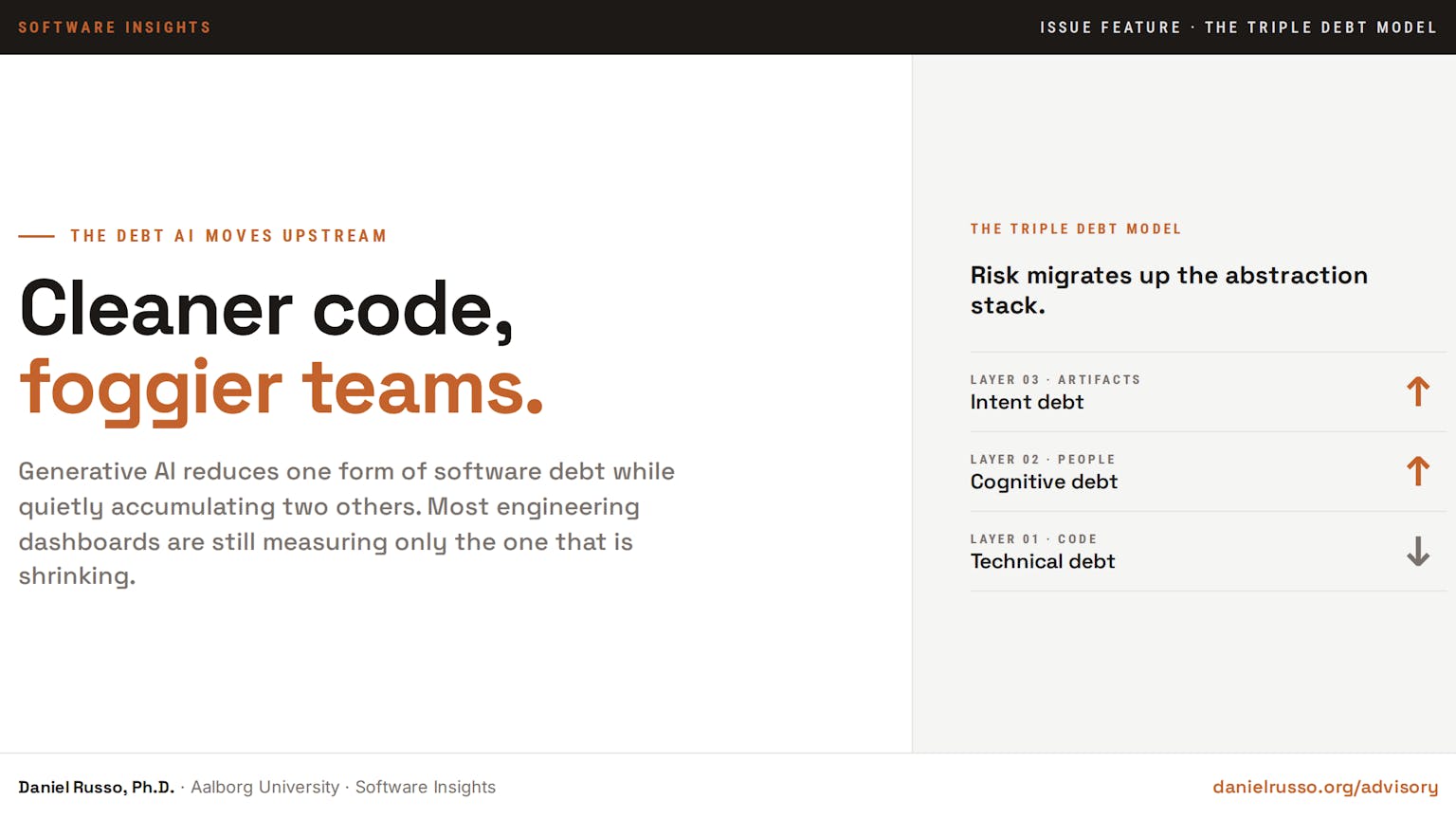

Generative AI is reducing one type of software debt while quietly accumulating two others, and most engineering organisations are still measuring only the one that’s shrinking.

The dominant industry framing treats AI-assisted development as a productivity story. Code arrives faster, refactoring happens automatically, and smell detectors and test generators absorb work that previously sat on engineers’ backlogs. Every consulting firm with a slide deck has documented some version of that gain. What none of those decks measures is what happens to the layers above the code: the team’s shared understanding of why the system behaves as it does, and the externalised rationale that humans and AI agents alike need to reason about safe change.

A recent paper by Margaret-Anne Storey at the University of Victoria gives that pattern a name and a structure (Storey, 2026). The Triple Debt Model proposes that software health depends on three coupled layers, each able to erode independently. Technical debt lives in code. Cognitive debt lives in people. Intent debt lives in artifacts. Generative AI is shrinking the first while accelerating the accumulation of the other two, and the conventional metrics most teams report do not register these second-order effects.

Each of the three layers degrades through different mechanisms, responds to different interventions, and produces different operational signatures. A team tracking only the code layer can show improving metrics on every dashboard while accumulating risk in the layers above. The redistribution is not a future concern but a present condition. It is also the structural reason that AI productivity gains are not translating into the operational improvements organisations expected when they adopted the tools. The argument that follows takes the three layers in turn.

How the technical debt construct decouples

The technical debt construct has been with the field since Cunningham (1993) used a financial metaphor to explain why fast-shipped code accrues future cost. Kruchten et al. (2012) sharpened the construct into a research programme that distinguished principal from interest and modelled the trade-off between delivery velocity and maintenance burden. For three decades, the construct did substantial work. It justified investment in refactoring and gave engineers a shared vocabulary for explaining shortcuts to non-technical stakeholders. It also informed measurable practices ranging from static analysis dashboards to dedicated remediation sprints.

What Storey (2026) identifies is that the construct, as practised, conflates three distinct phenomena that AI is now decoupling in production:

- Debt in the code itself: structural shortcuts, weak abstractions, missing tests.
- Debt in the team’s collective understanding of that code.
- Debt in the explicit goals, rationale, and constraints captured in artifacts that the team and its agents can consult.

In a pre-AI development regime, these three layers tended to move together. A team that took on technical debt typically also degraded its shared understanding of the affected modules and let intent capture lapse. Reducing one tended to mean reducing all three. The metaphor held.

Generative AI breaks that coupling. Hou et al. (2024) document large language models accelerating refactoring, smell detection, and test generation across multiple production settings. Peng et al. (2023) report substantial productivity gains for engineers using GitHub Copilot, with the largest gains concentrated in routine code generation tasks. The case that AI reduces technical debt accumulation is empirically defensible, at least for the visible code layer. The system gets cleaner as measured by static analysis, CI pipelines stay green, and test coverage edges upward.

The problem is what happens elsewhere while the code layer cleans up. The team’s shared understanding of why the code is shaped as it is does not improve when an agent writes it. Often it deteriorates, because no engineer had to build the mental model that produced the artifact. The rationale behind the agent’s output also stays out of every consultable artifact unless someone deliberately captures it. The very speed advantage of agent-assisted development works against that deliberate capture.

The result is a divergence: code quality improves while team knowledge and externalised intent both erode.

The three-decade habit of treating debt as a single quantity now produces measurement errors of opposite signs. The one layer that is measured reports falling debt, while the two layers that are not measured are accumulating it.

Where the cognitive layer erodes

Storey defines cognitive debt as a team-level, project-level property. It reflects the erosion of shared understanding across a software system over time and shows up as increasingly inadequate mental models that developers rely on to reason about the system and change it safely. The construct is grounded in three strands of foundational work that more recent software engineering research has tended to underuse.

Naur (1985) argued that programming is, fundamentally, the construction of a theory of the system in the heads of the people building it. The code is one externalisation of that theory; documentation is another; tests are a third. None of them is the theory itself. The theory degrades when the people who built it leave, or when the system grows faster than their mental model can grow with it, even if the artifacts persist.

Curtis et al. (1988), in a field study of seventeen large software projects, found that no single developer held the complete picture of any non-trivial system; the theory was distributed across the team in overlapping fragments. Wegner (1987) provided the cognitive science framing for that distribution: groups develop a transactive memory in which each member tracks not only what they know but who else knows what. When transactive memory degrades, the team loses the ability to retrieve its own knowledge, even when individual heads still contain pieces of it.

Hutchins (1995) extended the framing to distributed cognition more broadly. In complex socio-technical systems, cognitive processes are properties of people, artifacts, and their interactions, not of individual minds in isolation. A software team’s reasoning capacity is a property of the whole configuration: engineers, code, documentation, dashboards, on-call rotations, post-mortems, and the informal channels through which they communicate. Cognitive debt accumulates when any element of that configuration degrades faster than the others can compensate.

The empirical signals that AI-assisted development is accelerating this erosion are beginning to converge. Kosmyna et al. (2026) document measurable reductions in neural engagement during AI-assisted writing tasks. They also report a rebound effect: participants who relied on AI assistance retained less of the content they produced and were less able to recall their own reasoning when later asked to defend it.

Shaw and Nave (2026) describe a related pattern they call cognitive surrender. Users of AI-assisted decision tools report inflated confidence in conclusions even when the AI was demonstrably wrong. The combination is the worst possible diagnostic configuration: lower engagement at the moment of production, higher confidence at the moment of evaluation. The mental model that should have been built during production is absent at the moment it is needed for verification.

Storey’s redistribution thesis crystallises the implication for software engineering organisations:

Generative AI may reduce technical debt while simultaneously accelerating the accumulation of cognitive and intent debt (Storey, 2026).

That single sentence reframes most of the productivity discourse around AI-assisted development. The gains are real, but they arrive at a layer of the stack that has well-developed instruments and decades of measurement practice.

The accumulation arrives at layers where most organisations have no instruments at all.

Russo’s (2026) phase transition argument adds a structural prediction to Storey’s analysis. Agent-intensive ecosystems undergo a discontinuity at an agent-to-engineer ratio of roughly three to one. Beyond that point, ecosystem-level behaviour becomes more informative than individual-agent behaviour for understanding the system.

Below the threshold, cognitive oversight remains tractable: engineers can still reconstruct the decision logic of agent-generated changes at reasonable cost. Above it, the system’s properties of interest emerge from interactions rather than residing in any single agent or commit, and the team’s transactive memory cannot keep pace.

Last week’s issue, The Composition Trap (Russo, 2026), introduced the comprehension debt construct. It names the operational consequence at the artifact level: code that passes automated verification even though the team cannot reproduce the decision logic behind it.
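For teams that want to watch this threshold, the ratio is easy to compute. The sketch below is a minimal illustration that assumes you can count the active agents and engineers on each team. `assess_regime`, the two regime labels, and the hard cutoff at 3.0 are hypothetical simplifications of what Russo (2026) describes only as an approximate discontinuity.

```python
from dataclasses import dataclass

# Approximate threshold from Russo (2026): roughly three agents per engineer.
# Treated here as a hard cutoff only for simplicity (illustrative assumption).
PHASE_TRANSITION_RATIO = 3.0


@dataclass(frozen=True)
class RegimeAssessment:
    ratio: float
    regime: str  # "individual" or "ecosystem" (illustrative labels)
    oversight_note: str


def assess_regime(active_agents: int, engineers: int) -> RegimeAssessment:
    """Classify a team by its agent-to-engineer ratio (hypothetical helper)."""
    if engineers <= 0:
        raise ValueError("engineers must be a positive count")
    if active_agents < 0:
        raise ValueError("active_agents cannot be negative")
    ratio = active_agents / engineers
    if ratio > PHASE_TRANSITION_RATIO:
        # Properties of interest emerge from agent interactions.
        return RegimeAssessment(
            ratio,
            "ecosystem",
            "Watch ecosystem-level signals; run the debt audit more often than monthly.",
        )
    # Engineers can still reconstruct agent-generated changes at reasonable cost.
    return RegimeAssessment(
        ratio,
        "individual",
        "Individual-agent review remains a tractable oversight mechanism.",
    )


print(assess_regime(active_agents=12, engineers=5).regime)  # 12 / 5 = 2.4 -> individual
print(assess_regime(active_agents=16, engineers=5).regime)  # 16 / 5 = 3.2 -> ecosystem
```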

The two frames are best seen as complementary. Storey’s cognitive debt names the accumulated, team-level erosion of shared understanding, observed from inside the cognitive system. Russo’s comprehension debt names the failure mode that erosion produces at the artifact level: code the team cannot mentally simulate. One frame is grounded in distributed cognition, the other in complexity-science emergence. Together, they describe both the cause and the consequence of the same upstream redistribution.

The intent gap that artifacts cannot close

Intent debt is the most newly named layer in Storey’s framework, and it is the one that most directly reframes how teams should think about AI agents. Storey describes intent debt as the absence or erosion of the explicit rationale, goals, and constraints that guide how a system evolves. Cognitive debt resides in heads. Intent debt resides in the gap between what the team meant to build and what is captured in any artifact a human or an agent can consult.

The construct gains particular force in agent-intensive workflows because AI agents are now first-class consumers of the intent layer. An engineer who holds the rationale in their head can apply it implicitly. For example, a code review against an unwritten performance budget still catches the regression, because the reviewer remembers the budget exists. When an agent writes the same code, the budget is invisible unless it appears in an artifact the agent reads.

The result is a class of failures that look like model limitations but are actually intent-debt incidents masquerading as capability gaps. The agent produces technically correct but unnecessary features, requests extensive rounds of clarification, or burns tokens reasoning from scratch about questions that a single decision record could have answered. Each of these is a measurable signal of intent debt at the agent boundary.

Storey identifies several diagnostic signatures. Behaviour that drifts from stakeholder expectations, even when the code is technically correct, points to lost or never-captured constraints. The absence of articulated performance budgets, privacy commitments, and accessibility requirements is a classic pattern. These constraints exist in someone’s memory and are honoured when that person reviews changes, but they disappear from the system the moment that person is unavailable.

Onboarding costs also rise, because new engineers and new agents have no documented theory of what the system is for, only of what it currently does. The two are not the same.

The mitigations Storey proposes are varied:

- Behaviour-driven specifications and tests that capture purpose rather than only verifying mechanics.
- Architecture decision records that externalise the why alongside the what.
- Domain-driven design vocabulary that gives the team a shared language for talking about constraints.

What these mitigations share is that they all produce artifacts that are agent-readable in the same way they are human-readable. That is the operational definition of context engineering. It is the practice of producing instructions and rationale dense enough that an agent with access to them produces outputs aligned with team intent. The rationale then does not have to be re-derived in every interaction.
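As an illustration of an artifact that serves both kinds of reader, the sketch below keeps a decision record as structured data and renders it to Markdown. The same text can then sit in the repository for reviewers and be passed to an agent as context. It loosely follows the common Nygard-style ADR sections (title, status, context, decision, consequences) and adds an explicit constraints section. The `DecisionRecord` class, its fields, and the example decision are hypothetical, not a format prescribed by Storey (2026).

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class DecisionRecord:
    """Hypothetical ADR that humans and agents can read from the same text."""
    number: int
    title: str
    status: str          # e.g. "proposed", "accepted", "superseded"
    decided_on: date
    context: str         # why a decision was needed
    decision: str        # what was chosen
    constraints: list[str] = field(default_factory=list)  # budgets, commitments, invariants
    consequences: str = ""

    def to_markdown(self) -> str:
        """Render the record as Markdown for a repository or an agent's context window."""
        lines = [
            f"# ADR {self.number:04d}: {self.title}",
            f"Status: {self.status} ({self.decided_on.isoformat()})",
            "",
            "## Context",
            self.context,
            "",
            "## Decision",
            self.decision,
        ]
        if self.constraints:
            # Constraints are listed explicitly so an agent cannot miss them.
            lines += ["", "## Constraints"]
            lines += [f"- {c}" for c in self.constraints]
        if self.consequences:
            lines += ["", "## Consequences", self.consequences]
        return "\n".join(lines)


# Hypothetical example record, for illustration only.
adr = DecisionRecord(
    number=7,
    title="Cache product listings at the edge",
    status="accepted",
    decided_on=date(2026, 3, 2),
    context="Listing pages exceeded the agreed latency budget during peak traffic.",
    decision="Serve listings from an edge cache with a 60-second TTL.",
    constraints=["p95 page latency under 300 ms", "No personal data in cached responses"],
    consequences="Prices may be up to 60 seconds stale; checkout re-validates them.",
)
print(adr.to_markdown())
```

Keeping the record as data rather than free text makes it straightforward to check, for instance, that every accepted decision lists at least one constraint before it is handed to an agent.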

The dual relationship between comprehension debt and intent debt makes the pair useful for diagnosis. Comprehension debt is the team’s failure to reconstruct the rationale an agent produced. Intent debt is the team’s failure to externalise the rationale that the agent then has to invent. These are inverse failure modes, and they tend to produce inverse organisational pathologies. Teams with high comprehension debt accumulate change-resistant code that nobody wants to touch. Teams with high intent debt accumulate agent failures that they blame on the model rather than on their own missing specifications. Diagnosing which form dominates is the first step toward choosing the right intervention.

The 30-minute audit

The following diagnostic surfaces all three debt types in a single team session. Each item is designed to take a few minutes; the full list runs in roughly thirty.

1. Pull a sample of ten AI-generated commits merged in the last sprint. For each, ask the on-call engineer to reconstruct the decision logic in under five minutes. Count how many they cannot. The fraction is your near-term comprehension debt rate.

2. Open your most recent architecture decision record (ADR) and note the date. If it is more than six weeks old in a team that ships daily, you are accumulating intent debt at the documentation layer faster than you are paying it down.

3. List the constraints currently active in your service: performance budgets, privacy commitments, accessibility requirements, security invariants, and regulatory thresholds. Check which are captured in artifacts that agents can consult and which are held only in someone’s memory. Anything held only in heads is a cognitive debt risk; anything missing from artifacts is an intent debt risk.

4. Run a transactive memory check. Ask each engineer who else on the team understands the area they own most deeply. If the answer is nobody, that is cognitive debt at the bus-factor level, and it is the most expensive form to remediate after the fact.

5. Review the last three incidents and identify which were caused by code behaving as written but not as intended. That ratio is your intent debt fingerprint. It is typically under-reported because incident reviews focus on the proximate cause rather than the missing specification.

6. Audit your five most recent AI agent failures. Count how many resulted from missing rationale rather than missing capability. Reclassifying those apparent capability failures as intent debt incidents changes which intervention reduces them.

7. Calculate the mean time to explain for the most recent critical AI-generated module: how long does a senior engineer need to reconstruct its reasoning? Anything beyond twenty minutes signals comprehension debt at the boundary; anything beyond an hour is structural.

8. Examine your onboarding metrics for the last two new hires. If time to first meaningful contribution exceeds the expected range, the team’s shared theory of the system may no longer be transmissible at an acceptable cost. The same metric measures whether new agents can be onboarded cheaply.

9. Survey your senior engineers anonymously: do you feel less able to predict the system’s behaviour than you did six months ago? A consistent yes signals cognitive debt accumulation that no static analysis tool will detect.

10. Map intent capture in your current sprint planning. Decisions may be made in meetings and not externalised into ADRs, behaviour-driven specifications, or agent-readable instructions before code is generated. If so, intent debt is being created in real time, and you have not yet built the practice that pays it down.

11. Track your agent-to-engineer ratio. Above roughly three to one (Russo, 2026), individual-agent metrics become unreliable guides to system behaviour, and the diagnostic items above should be run more often than monthly.
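Several of these items reduce to simple ratios and thresholds, so it is easy to record them the same way in each session. The sketch below encodes items 1, 2, 5, 6, and 7 using the cutoffs stated above. The function names and input shapes are hypothetical, the sample inputs are invented for illustration, and the thresholds are this article's heuristics rather than validated benchmarks.

```python
from datetime import date, timedelta
from typing import Optional

# Cutoffs taken from the audit items above; heuristics, not benchmarks.
RECONSTRUCTION_LIMIT_MIN = 5.0      # item 1: minutes allowed per sampled commit
ADR_STALENESS = timedelta(weeks=6)  # item 2: for a team shipping daily
EXPLAIN_BOUNDARY_MIN = 20.0         # item 7: comprehension debt at the boundary
EXPLAIN_STRUCTURAL_MIN = 60.0       # item 7: structural comprehension debt


def comprehension_debt_rate(minutes: list[Optional[float]]) -> float:
    """Item 1: share of sampled commits whose decision logic was not reconstructed in time.

    Each entry is the time the on-call engineer needed, or None if they could not do it.
    """
    if not minutes:
        raise ValueError("sample at least one commit")
    missed = sum(1 for m in minutes if m is None or m >= RECONSTRUCTION_LIMIT_MIN)
    return missed / len(minutes)


def adr_is_stale(latest_adr: date, today: date) -> bool:
    """Item 2: True if the newest ADR is more than six weeks old."""
    return today - latest_adr > ADR_STALENESS


def share(flags: list[bool]) -> float:
    """Items 5 and 6: fraction of reviewed incidents or failures flagged True."""
    return sum(flags) / len(flags) if flags else 0.0


def time_to_explain_signal(minutes: float) -> str:
    """Item 7: classify mean time to explain for a critical AI-generated module."""
    if minutes > EXPLAIN_STRUCTURAL_MIN:
        return "structural"
    if minutes > EXPLAIN_BOUNDARY_MIN:
        return "boundary"
    return "within range"


# Hypothetical audit inputs, for illustration only.
sample = [3.0, 4.5, None, 12.0, 2.0, 6.0, 1.5, None, 4.0, 3.5]
print(comprehension_debt_rate(sample))                   # 4 of 10 missed -> 0.4
print(adr_is_stale(date(2026, 1, 5), date(2026, 3, 1)))  # 55 days old -> True
print(share([True, False, True]))                        # item 5: 2 of 3 incidents
print(share([True, True, False, True, False]))           # item 6: 3 of 5 failures
print(time_to_explain_signal(35.0))                      # -> boundary
```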

Next steps

For the builder

When reviewing AI-generated code, separate two judgments explicitly: did the output satisfy the tests, and can I reconstruct the decision logic? Record both. Approving on the first without the second is the operational definition of contributing to comprehension debt. The cognitive surrender pattern documented by Shaw and Nave (2026) also means you will tend to feel more confident than the evidence warrants.

Build the discipline of writing decision rationale into commit messages and architecture decision records before merging critical AI-generated changes; capture the why alongside the what. When using AI agents directly, rewrite the agent instructions whenever clarification rounds exceed two: the failure point is the missing intent, not the model. Treat your personal review-without-reproduction ratio as a leading indicator of the comprehension debt you are contributing to the codebase.
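One lightweight way to record both judgments is a per-review entry with two separate fields. The review-without-reproduction ratio then follows directly. The sketch assumes a hypothetical `ReviewEntry` record that the reviewer fills in. The names are illustrative, and the limit of two clarification rounds is the rule of thumb given above.

```python
from dataclasses import dataclass

MAX_CLARIFICATION_ROUNDS = 2  # beyond this, rewrite the agent's instructions


@dataclass(frozen=True)
class ReviewEntry:
    change_id: str
    passed_tests: bool      # judgment 1: did the output satisfy the tests?
    reproduced_logic: bool  # judgment 2: can I reconstruct the decision logic?


def review_without_reproduction_ratio(entries: list[ReviewEntry]) -> float:
    """Share of test-passing changes whose decision logic the reviewer could not reconstruct."""
    approved = [e for e in entries if e.passed_tests]
    if not approved:
        return 0.0
    return sum(1 for e in approved if not e.reproduced_logic) / len(approved)


def should_rewrite_instructions(clarification_rounds: int) -> bool:
    """True when an agent session needed more clarification rounds than the limit."""
    return clarification_rounds > MAX_CLARIFICATION_ROUNDS


# Hypothetical review log, for illustration only.
reviews = [
    ReviewEntry("change-101", passed_tests=True, reproduced_logic=True),
    ReviewEntry("change-102", passed_tests=True, reproduced_logic=False),
    ReviewEntry("change-103", passed_tests=False, reproduced_logic=False),
    ReviewEntry("change-104", passed_tests=True, reproduced_logic=True),
]
print(review_without_reproduction_ratio(reviews))  # one of three test-passing changes
print(should_rewrite_instructions(3))              # -> True
```

Only test-passing changes are counted in the denominator, because the risk described above lies in approving on the first judgment alone.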

For the manager

Introduce a regular practice that explicitly targets cognitive debt. Storey calls these system walkthroughs: sessions in which one engineer narrates part of the system to others, including parts they did not write. The practice rebuilds the team’s transactive memory without waiting for an incident to force the rebuilding. Naur (1985) suggests it is the only mechanism that genuinely transfers the theory of the system rather than its surface artifacts.

Reframe code review as theory transmission, not just defect detection. A review that catches a bug but leaves the reviewer unable to reconstruct the change’s reasoning has solved one problem and worsened another. Track the ratio of decisions captured in ADRs to decisions made in meetings. That ratio is your intent capture rate, and it is the leading indicator of whether your team is building agent-readable artifacts or only agent-consumable code. Watch your on-call escalation rate as a primary signal of emergent system behaviour rather than as a measure of individual engineer competence.
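The intent capture rate can be tracked from a simple decision log. The sketch below assumes each logged decision records where it was made and, once captured, the identifier of its ADR. It reads the ratio as the share of meeting decisions that were later captured, which is one reasonable interpretation of the ratio described above. The `Decision` record, its fields, and the sample entries are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Decision:
    summary: str
    venue: str                    # where it was made, e.g. "meeting" or "pull-request"
    adr_id: Optional[str] = None  # set once the decision is captured in an ADR


def intent_capture_rate(decisions: list[Decision], venue: str = "meeting") -> Optional[float]:
    """Share of decisions made in `venue` that were later captured in an ADR.

    Returns None when no decisions were made in that venue, since the rate is undefined.
    """
    made_there = [d for d in decisions if d.venue == venue]
    if not made_there:
        return None
    return sum(1 for d in made_there if d.adr_id is not None) / len(made_there)


def uncaptured(decisions: list[Decision]) -> list[str]:
    """Summaries of decisions with no ADR: open intent debt."""
    return [d.summary for d in decisions if d.adr_id is None]


# Hypothetical sprint decisions, for illustration only.
decision_log = [
    Decision("Drop the legacy export endpoint", "meeting", adr_id="ADR-0012"),
    Decision("Cap batch size at 500 rows", "meeting"),
    Decision("Retry payments at most twice", "meeting"),
    Decision("Rename the billing module", "pull-request", adr_id="ADR-0013"),
]
print(intent_capture_rate(decision_log))  # 1 of 3 meeting decisions captured
print(uncaptured(decision_log))
```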

For the roadmap owner

The Triple Debt Model implies that the metrics most organisations report on AI adoption are partial by construction. Productivity gains in code generation should be reported alongside three corresponding sets of indicators:

- Cognitive debt: mean time to explain, on-call escalation trends, and senior-engineer confidence.
- Intent debt: rationale capture rate, ADR recency, and agent clarification round counts.
- The agent-to-engineer ratio that conditions the other two.

Commission an audit that maps your AI governance framework against these layers, not against generic risk taxonomies. Existing frameworks designed for individual model evaluation do not surface the team-level erosion that produces operational risk. Budget for measurement infrastructure at the cognitive and intent layers before scaling agent deployment further.

Together, the Storey (2026) and Russo (2026) frameworks suggest what happens to organisations that scale agent counts without instrumenting these layers. They will see initial productivity gains followed by a delayed but predictable rise in incident severity. The lag obscures the causal relationship long enough that the original adoption decision is rarely revisited.
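A minimal sketch of such a paired report follows. It groups the indicators named above by layer and carries the agent-to-engineer ratio as context. It flags the pattern this article warns about: code output rising between two periods while a cognitive or intent indicator worsens. The `AdoptionReport` fields, the direction assigned to each indicator, and the sample quarters are illustrative assumptions, not a reporting standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AdoptionReport:
    """One reporting period. Field names are illustrative, not a standard schema."""
    # Code layer: the productivity figure most dashboards already show.
    changes_merged: int
    # Cognitive layer.
    mean_time_to_explain_min: float
    oncall_escalations: int
    seniors_less_predictive_pct: float  # share answering yes to audit item 9
    # Intent layer.
    rationale_capture_rate: float       # 0.0 to 1.0
    newest_adr_age_days: int
    clarification_rounds_per_task: float
    # Conditions how the other indicators should be read; not scored here.
    agent_to_engineer_ratio: float


# +1: a rise is worse; -1: a fall is worse (an assumption about each indicator).
WORSE_DIRECTION = {
    "mean_time_to_explain_min": +1,
    "oncall_escalations": +1,
    "seniors_less_predictive_pct": +1,
    "rationale_capture_rate": -1,
    "newest_adr_age_days": +1,
    "clarification_rounds_per_task": +1,
}


def divergence_flags(prev: AdoptionReport, curr: AdoptionReport) -> list[str]:
    """Indicators that worsened while code output grew: the redistribution pattern."""
    if curr.changes_merged <= prev.changes_merged:
        return []
    return [
        name
        for name, direction in WORSE_DIRECTION.items()
        if (getattr(curr, name) - getattr(prev, name)) * direction > 0
    ]


# Hypothetical quarters, for illustration only.
q1 = AdoptionReport(changes_merged=410, mean_time_to_explain_min=18.0,
                    oncall_escalations=7, seniors_less_predictive_pct=20.0,
                    rationale_capture_rate=0.6, newest_adr_age_days=20,
                    clarification_rounds_per_task=1.5, agent_to_engineer_ratio=1.5)
q2 = AdoptionReport(changes_merged=640, mean_time_to_explain_min=34.0,
                    oncall_escalations=11, seniors_less_predictive_pct=45.0,
                    rationale_capture_rate=0.4, newest_adr_age_days=20,
                    clarification_rounds_per_task=2.5, agent_to_engineer_ratio=3.5)
print(divergence_flags(q1, q2))
```

Comparing only two consecutive periods keeps the sketch small. Because the article predicts a delayed effect, a real report would probably need to look at trends over several periods.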

Closing thought

The story most boards are being told about AI-assisted software development is one of cleaner code shipped faster. The story Storey and Russo describe together is more relevant but harder to manage. Software risk is migrating up the abstraction stack. It is moving out of the code layer, where decades of tooling have accumulated, and into the team’s shared understanding and the rationale captured in artifacts, where most organisations have nothing comparable in place. The teams that recognise this redistribution early will build the instruments before they need them. The teams that do not will discover the accumulation through incidents whose causes are easy to misattribute to the agents themselves.

Daniel Russo, Ph.D., is a Professor of Software Engineering whose research examines the intersection of human cognition and artificial intelligence. Through “Software Insights,” he translates empirical research into actionable guidance for software practitioners and organizations.

If this issue surfaces a problem your organisation has been trying to name, I work with engineering leaders to diagnose exactly that kind of challenge, using the same methods behind the research you just read. No frameworks. No opinion without evidence.

danielrusso.org/advisory

References

Cunningham, W. (1993). The WyCash portfolio management system. ACM SIGPLAN OOPS Messenger, 4(2), 29-30.

Curtis, B., Krasner, H., & Iscoe, N. (1988). A field study of the software design process for large systems. Communications of the ACM, 31(11), 1268-1287.

Hou, X., Zhao, Y., Liu, Y., Yang, Z., Wang, K., Li, L., Luo, X., Lo, D., Grundy, J., & Wang, H. (2024). Large language models for software engineering: A systematic literature review. ACM Transactions on Software Engineering and Methodology, 33(8), 1-79.

Hutchins, E. (1995). Cognition in the wild. MIT Press.

Kosmyna, N., Wong, B. R. P., Hauptmann, E. C., Yuan, Y. T. A., Kosmyna, V., & Maes, P. (2026). Your brain on ChatGPT: Accumulation of cognitive debt when using an AI assistant for essay writing. arXiv:2601.00856.

Kruchten, P., Nord, R. L., & Ozkaya, I. (2012). Technical debt: From metaphor to theory and practice. IEEE Software, 29(6), 18-21.

Naur, P. (1985). Programming as theory building. Microprocessing and Microprogramming, 15(5), 253-261.

Peng, S., Kalliamvakou, E., Cihon, P., & Demirer, M. (2023). The impact of AI on developer productivity: Evidence from GitHub Copilot. arXiv:2302.06590.

Russo, D. (2026). More is different: Towards a theory of emergence in AI-native software ecosystems. arXiv:2604.19827.

Shaw, J., & Nave, R. (2026). How AI is reshaping human reasoning and the rise of cognitive surrender. SSRN 6097646.

Storey, M.-A. (2026). From technical debt to cognitive and intent debt: Rethinking software health in the age of AI. arXiv:2603.22106.

Wegner, D. M. (1987). Transactive memory: A contemporary analysis of the group mind. In B. Mullen & G. R. Goethals (Eds.), Theories of group behavior (pp. 185-208). Springer.