Machine identity is not what it was

One way to see this clearly is through identity.

Every interaction an AI system has (repository access, pipeline execution, API calls) requires credentials. In practice, these systems function as machine identities.

But they aren’t conventional machine identities.

A service account executes predefined logic. Its behavior is known upfront. Its risk is bounded by what it was configured to do.

An AI-driven system is different. It generates the logic it then executes.

It can propose new code paths, interact with new systems and trigger actions that weren’t explicitly predefined at the time access was granted.

That is a category change.

Not just a new identity type, but a new attack surface: identities that can generate the behavior they’re authorized to execute.
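
A minimal sketch of that contrast, under stated assumptions: the class names, action strings and the `toy_planner` function below are all hypothetical, invented only for illustration. The service account can only ever perform actions from a fixed list, so its risk can be reviewed when access is granted. The AI-driven identity gets its actions from a planner at runtime, so some of them may never have been reviewed.

```python
from dataclasses import dataclass
from typing import Callable, FrozenSet, List


@dataclass(frozen=True)
class ServiceAccount:
    """Hypothetical identity whose behavior is fixed at configuration time."""
    name: str
    configured_actions: FrozenSet[str]

    def possible_actions(self) -> FrozenSet[str]:
        # Everything this identity can ever do is known when access is granted.
        return self.configured_actions


@dataclass(frozen=True)
class AIAgentIdentity:
    """Hypothetical identity whose actions are produced by a planner at runtime."""
    name: str
    reviewed_at_grant: FrozenSet[str]
    planner: Callable[[str], List[str]]

    def unreviewed_actions(self, task: str) -> List[str]:
        # Actions the planner proposes that nobody reviewed at grant time.
        return [a for a in self.planner(task) if a not in self.reviewed_at_grant]


def toy_planner(task: str) -> List[str]:
    # Stand-in for a model; a real planner's output is not known in advance.
    plan = ["repo:read", "repo:open_pull_request"]
    if "deploy" in task:
        plan.append("pipeline:trigger")
    return plan


backup_job = ServiceAccount("nightly-backup", frozenset({"db:read", "storage:write"}))
agent = AIAgentIdentity(
    "code-assistant",
    reviewed_at_grant=frozenset({"repo:read", "repo:open_pull_request"}),
    planner=toy_planner,
)

print(sorted(backup_job.possible_actions()))
print(agent.unreviewed_actions("fix bug and deploy"))
```

The point of the sketch is the asymmetry: `possible_actions` can be answered without running anything, while `unreviewed_actions` can only be answered per task, after the planner has produced a plan.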

The World Economic Forum has identified this class of non-human identity as one of the fastest-growing and least-governed security risks in enterprise AI adoption.

Measuring exposure before fixing it

Most organizations already track access-related metrics. These metrics were designed for human-driven systems.

They’re not sufficient.

If AI systems are participating in the software supply chain, organizations need to measure where and how that participation introduces risk.

A few indicators matter immediately:

- AI-generated artifact footprint: What portion of code, dependencies or infrastructure definitions in production originates from AI-assisted processes?

- Authority scope of AI systems: What systems can these identities access, and what actions can they take across repositories and pipelines?

- Autonomous change rate: How often are changes introduced and propagated without explicit human review?

- Cross-system interaction surface: How many systems does a single AI workflow touch as part of normal operation?

- Auditability of AI-driven actions: Can changes be traced cleanly to a system, workflow and triggering context?

These are not abstract concerns. They’re measurable.

And until they’re measured, they aren’t governed.
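
As a rough illustration of how four of these indicators could be computed, the sketch below assumes a change log already exists in which each production change is tagged with its origin, review status, systems touched and trace fields. The `ChangeRecord` schema, the field names and the sample values are assumptions made for this example, not an established format. Authority scope is left out because it would come from permission grants rather than from a change log.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, List, Optional


@dataclass(frozen=True)
class ChangeRecord:
    """One production change; every field is an assumed, illustrative schema."""
    change_id: str
    ai_generated: bool
    human_reviewed: bool
    systems_touched: FrozenSet[str]
    workflow_id: Optional[str]       # None means the change cannot be traced
    trigger_context: Optional[str]


def exposure_metrics(changes: List[ChangeRecord]) -> Dict[str, float]:
    ai = [c for c in changes if c.ai_generated]
    if not ai:
        # No AI-originated changes: every indicator is zero by definition here.
        return {"ai_footprint": 0.0, "autonomous_change_rate": 0.0,
                "max_systems_touched": 0.0, "traceable_share": 0.0}
    autonomous = [c for c in ai if not c.human_reviewed]
    traceable = [c for c in ai if c.workflow_id and c.trigger_context]
    return {
        "ai_footprint": len(ai) / len(changes),
        "autonomous_change_rate": len(autonomous) / len(ai),
        "max_systems_touched": float(max(len(c.systems_touched) for c in ai)),
        "traceable_share": len(traceable) / len(ai),
    }


# Made-up sample data, for illustration only.
changes = [
    ChangeRecord("c1", False, True, frozenset({"repo"}), None, None),
    ChangeRecord("c2", True, True, frozenset({"repo", "ci"}), "wf-7", "ticket-123"),
    ChangeRecord("c3", True, False, frozenset({"repo", "ci", "registry"}), None, None),
    ChangeRecord("c4", False, True, frozenset({"ci"}), None, None),
]
metrics = exposure_metrics(changes)
print(metrics)
```

In this made-up sample, half of the changes are AI-generated, half of those shipped without human review, and only half can be traced to a workflow and trigger. The hard part in practice is likely to be collecting the tags, not the arithmetic.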

The regulatory imperative

This isn’t just a technical shift. It’s a governance and liability shift.

As regulatory expectations evolve, from AI accountability frameworks to cybersecurity disclosure requirements, organizations are increasingly responsible for explaining and controlling automated decisions within their environments.

If an AI-driven change introduces a vulnerability or leads to a material incident, “the system generated it” will not be an acceptable answer.

Accountability will still sit with the enterprise.

That raises the bar: Governance must extend to how autonomous systems act, not just how they’re accessed.

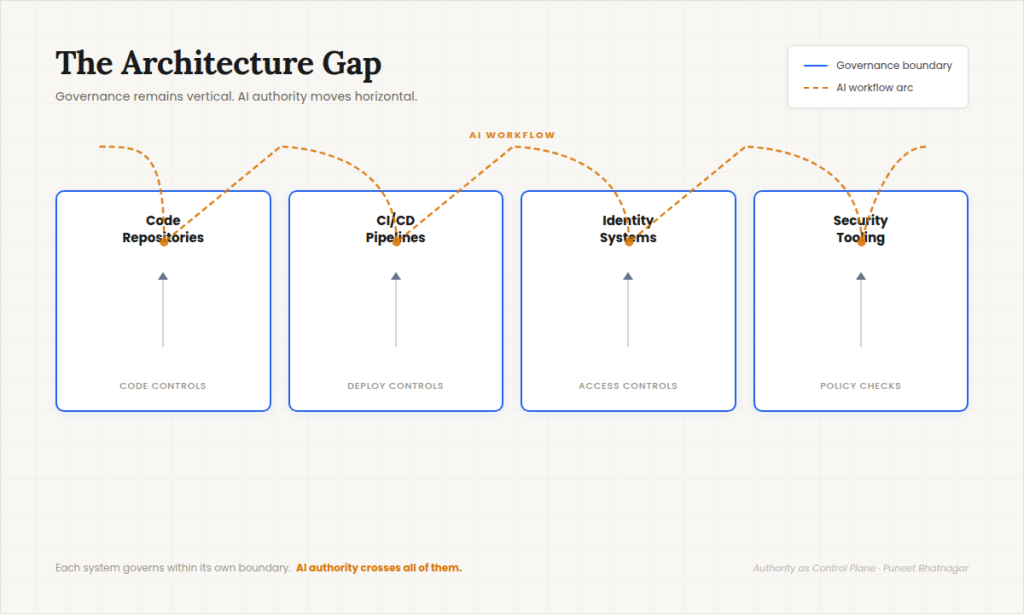

The architecture gap

Puneet Bhatnagar

The issue is not that any one control is missing.

It’s that AI systems operate across the seams of systems designed to govern within their own boundaries.

Repositories enforce code controls.

Pipelines enforce deployment controls.

Identity systems enforce access controls.

Security tools enforce policy checks.

Each works as designed.

But AI systems move across all of them.

They read from one system, generate changes, trigger another and influence a third. Authority is exercised across systems, while governance remains within them.

That’s the architectural gap.
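
The gap can be made concrete with a small sketch. It assumes, hypothetically, that every event carries a shared `workflow_id` across systems; the system names, actions and `LOCAL_POLICY` table are illustrative only. Each per-system check approves its own event, yet only the grouped view shows that a single workflow spanned three systems.

```python
from collections import defaultdict
from typing import Dict, List, NamedTuple, Set


class Event(NamedTuple):
    workflow_id: str   # assumed correlation ID carried across systems
    system: str
    action: str


# Each system's own control: the actions it permits (illustrative values).
LOCAL_POLICY: Dict[str, Set[str]] = {
    "repository": {"read", "commit"},
    "pipeline": {"trigger"},
    "identity": {"issue_token"},
}


def passes_local_control(event: Event) -> bool:
    # A per-system check sees one event at a time, never the whole chain.
    return event.action in LOCAL_POLICY.get(event.system, set())


def systems_by_workflow(events: List[Event]) -> Dict[str, Set[str]]:
    # The cross-system view: which systems did each workflow touch?
    spans: Dict[str, Set[str]] = defaultdict(set)
    for e in events:
        spans[e.workflow_id].add(e.system)
    return dict(spans)


events = [
    Event("wf-1", "repository", "read"),
    Event("wf-1", "repository", "commit"),
    Event("wf-1", "pipeline", "trigger"),
    Event("wf-1", "identity", "issue_token"),
]

print(all(passes_local_control(e) for e in events))   # every local check passes
print(sorted(systems_by_workflow(events)["wf-1"]))    # one workflow, three systems
```

Nothing in `LOCAL_POLICY` is wrong. The chain is simply invisible unless something correlates events across systems.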

A different governance model

Most organizations will respond to this shift by trying to extend existing access controls. That instinct is understandable, and insufficient.

The problem is no longer just who or what can access a system. It’s how control is maintained when authority can generate new actions dynamically.

This requires a different model of governance.

One that treats software systems as actors whose behavior must be bounded, observed and continuously evaluated across workflows, not just permitted or denied at a point of access. Governance becomes less about static permissions and more about controlling the shape and impact of actions across systems.
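
One way to picture "bounded, observed and continuously evaluated" is a guard that tracks a workflow's cumulative footprint and re-evaluates it on every action. The sketch below is an assumption-heavy illustration, not a reference design: `WorkflowGuard`, its two limits and the integer impact scores are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import List, Set, Tuple


@dataclass
class WorkflowGuard:
    """Hypothetical runtime guard for one AI workflow; limits are illustrative."""
    max_systems: int
    max_impact: int
    systems_seen: Set[str] = field(default_factory=set)
    impact_used: int = 0
    log: List[Tuple[str, str, int, bool]] = field(default_factory=list)

    def evaluate(self, system: str, action: str, impact: int) -> bool:
        # Bounded: judge the workflow's cumulative shape, not just this action.
        systems_after = self.systems_seen | {system}
        impact_after = self.impact_used + impact
        allowed = (len(systems_after) <= self.max_systems
                   and impact_after <= self.max_impact)
        if allowed:
            self.systems_seen = systems_after
            self.impact_used = impact_after
        # Observed: every decision is recorded, including denials.
        self.log.append((system, action, impact, allowed))
        return allowed


guard = WorkflowGuard(max_systems=2, max_impact=5)
decisions = [
    guard.evaluate("repository", "commit", 2),
    guard.evaluate("pipeline", "trigger", 2),
    guard.evaluate("pipeline", "deploy_prod", 3),  # would push impact to 7
    guard.evaluate("identity", "issue_token", 1),  # would be a third system
]
print(decisions)
```

A static permission check could plausibly have allowed all four actions individually. The guard denies the last two because of what the workflow has already done, which is the difference between governing access and governing authority.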

That’s the shift.

Conclusion

The conversation around AI in software development often focuses on productivity.

But as AI systems begin to participate in generating and modifying enterprise software, the more important question becomes governance.

AI is not just accelerating the software development lifecycle. It’s becoming part of the software supply chain itself.

And that changes the problem.

The challenge for CIOs is no longer just managing developers, tools or pipelines. It’s understanding and governing the authority that software systems exercise across them.

Because in a world where software can act on behalf of the enterprise, governance is no longer just about access.

It’s about authority: what systems are allowed to do, and how that authority is managed and measured over time.

This article is published as part of the Foundry Expert Contributor Network.
