Why speculative decoding?

The technical reality is that standard LLM inference is memory-bandwidth bound, which creates a significant latency bottleneck. To generate a single token, the processor spends most of its time moving billions of parameters from VRAM to the compute units. The result is under-utilized compute and high latency, especially on consumer-grade hardware.
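A rough estimate shows why decoding is bandwidth bound. Every decode step has to stream the weights from VRAM at least once, so dividing the weight bytes by the memory bandwidth gives a floor on per-token latency. This sketch makes several assumptions. It uses a 31B-parameter dense model stored in 16-bit precision (2 bytes per parameter) and a hypothetical GPU with about 1,000 GB/s of bandwidth. It also ignores KV-cache traffic and activations, so real latency will be higher. The helper name `min_ms_per_token` is illustrative only.

```python
def min_ms_per_token(num_params: float, bytes_per_param: float,
                     bandwidth_gb_s: float) -> float:
    """Lower bound on decode latency (ms per token) for a memory-bandwidth-bound
    model: the time needed to read every weight from VRAM once."""
    weight_bytes = num_params * bytes_per_param
    return weight_bytes / (bandwidth_gb_s * 1e9) * 1e3


# Hypothetical: 31B dense parameters in 16-bit precision (2 bytes each),
# on a GPU with roughly 1,000 GB/s of memory bandwidth.
latency = min_ms_per_token(31e9, 2, 1000)
print(f"at least {latency:.0f} ms per token, at most {1000 / latency:.1f} tokens/s")
```

With these assumed numbers the floor is about 62 ms per token, or roughly 16 tokens/s. That limit holds however fast the compute units are, and the slack is the idle compute that speculative decoding puts to work.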

Speculative decoding decouples token generation from verification. It pairs a heavy target model (e.g., Gemma 4 31B) with a lightweight drafter (the multi-token prediction, or MTP, model). The drafter uses otherwise idle compute to “predict” several future tokens, and it does so in less time than the target model needs to process a single token. The target model then verifies all of these suggested tokens in parallel.
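The drafter's benefit depends on two things: how often its guesses are accepted and how cheap it is to run. Fast Inference from Transformers via Speculative Decoding (Leviathan et al., 2023) derives a closed form for this. It assumes each drafted token is accepted independently with probability α. With γ drafted tokens, one target pass then yields (1 - α^(γ+1)) / (1 - α) tokens on average. If one drafter step costs a fraction c of a target step, the expected wall-clock speedup is that quantity divided by (γc + 1). The helper names and the example values of α, γ and c below are hypothetical; they are not measured Gemma 4 numbers.

```python
def expected_tokens_per_pass(alpha: float, gamma: int) -> float:
    """Mean tokens emitted per target forward pass, assuming each of the gamma
    drafted tokens is accepted independently with probability alpha."""
    if alpha >= 1.0:
        return gamma + 1.0  # every draft accepted, plus the target's bonus token
    return (1 - alpha ** (gamma + 1)) / (1 - alpha)


def expected_speedup(alpha: float, gamma: int, c: float) -> float:
    """Expected wall-clock speedup over plain decoding, where c is the cost of
    one drafter step relative to one target step. Assumes verifying gamma + 1
    tokens in parallel costs about the same as a single target step."""
    return expected_tokens_per_pass(alpha, gamma) / (gamma * c + 1)


# Hypothetical values, not measured Gemma 4 numbers.
alpha, gamma, c = 0.8, 4, 0.05
print(f"{expected_tokens_per_pass(alpha, gamma):.2f} tokens per target pass")
print(f"{expected_speedup(alpha, gamma, c):.2f}x expected speedup")
```

With α = 0.8, γ = 4 and c = 0.05, each target pass yields about 3.36 tokens and the predicted speedup is about 2.8x. At α = 0 every draft is rejected, and the drafter adds only overhead.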

How speculative decoding works

Standard large language models generate text autoregressively, producing exactly one token at a time. The process works, but it spends as much computation on an obvious continuation (like predicting “words” after “Actions speak louder than…”) as on solving a complex logic puzzle.
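A minimal sketch of greedy autoregressive decoding makes the cost structure explicit: every new token costs one full forward pass of the model, however predictable that token is. `toy_model` is a hypothetical stand-in for a real LLM. It applies a fixed rule that maps the context to the next token, so the example can run without a real model.

```python
from typing import Callable, List, Tuple

Token = int
Model = Callable[[List[Token]], Token]  # hypothetical: context -> greedy next token


def autoregressive_decode(model: Model, prompt: List[Token],
                          n_new: int) -> Tuple[List[Token], int]:
    """Plain greedy decoding; returns (tokens, number of forward passes)."""
    tokens = list(prompt)
    forward_passes = 0
    for _ in range(n_new):
        # Each token needs its own full forward pass, even an "obvious" one.
        tokens.append(model(tokens))
        forward_passes += 1
    return tokens, forward_passes


def toy_model(ctx: List[Token]) -> Token:
    # Deterministic stand-in for an LLM's argmax prediction.
    return (ctx[-1] * 3 + 1) % 10


out, passes = autoregressive_decode(toy_model, [1], n_new=8)
print(out, passes)
```

Eight new tokens cost eight forward passes. The pass count grows linearly with output length, regardless of content.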

MTP mitigates this inefficiency through speculative decoding, a technique introduced by Google researchers in Fast Inference from Transformers via Speculative Decoding. The drafter proposes several tokens, and the target model checks all of them in a single forward pass. If the target model agrees with the whole draft, it accepts the entire sequence and also generates one additional token of its own. If it disagrees partway through, it keeps the drafted tokens up to the first mismatch and substitutes its own token there. In the best case, your application can output the full drafted sequence plus one token in the time it usually takes to generate a single one.
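The draft-then-verify loop can be sketched with greedy (argmax) decoding. This is a simplification. The method in the paper uses a rejection-sampling rule so that sampled outputs exactly follow the target model's distribution. The version below covers only greedy decoding, where “agrees” simply means the two tokens are equal. A real model runs the target's k + 1 checks as one batched forward pass; here they are simulated with separate calls. `toy_target` and `toy_drafter` are hypothetical stand-ins, and the drafter is deliberately wrong whenever the last token is 0.

```python
from typing import Callable, List, Tuple

Token = int
Model = Callable[[List[Token]], Token]  # hypothetical: context -> greedy next token


def speculative_decode(target: Model, drafter: Model, prompt: List[Token],
                       n_new: int, k: int = 4) -> Tuple[List[Token], int]:
    """Greedy speculative decoding; returns (tokens, number of target passes)."""
    tokens = list(prompt)
    target_passes = 0
    while len(tokens) - len(prompt) < n_new:
        # 1. The cheap drafter proposes k tokens autoregressively.
        draft: List[Token] = []
        for _ in range(k):
            draft.append(drafter(tokens + draft))
        # 2. The target scores all k + 1 positions. A real model does this in
        #    one batched forward pass; here it is simulated call by call.
        verified = [target(tokens + draft[:i]) for i in range(k + 1)]
        target_passes += 1
        # 3. Accept the longest prefix on which drafter and target agree.
        n_ok = 0
        while n_ok < k and draft[n_ok] == verified[n_ok]:
            n_ok += 1
        # 4. Append the accepted drafts plus the target's own token: a
        #    correction at the first mismatch, or a bonus token if all k matched.
        tokens += draft[:n_ok] + [verified[n_ok]]
    # The last round may overshoot n_new, so trim to the requested length.
    return tokens[: len(prompt) + n_new], target_passes


def toy_target(ctx: List[Token]) -> Token:
    return (ctx[-1] * 3 + 1) % 10


def toy_drafter(ctx: List[Token]) -> Token:
    # Matches the target except after a 0, where it guesses wrong.
    return 5 if ctx[-1] == 0 else toy_target(ctx)


out, passes = speculative_decode(toy_target, toy_drafter, [1], n_new=8, k=4)
print(out, passes)
```

In this run, three of the drafter's four guesses are accepted each round. Eight new tokens therefore cost two target passes instead of eight. The output is identical to what the target alone would produce with greedy decoding.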

Unlocking faster AI from the edge to the workstation

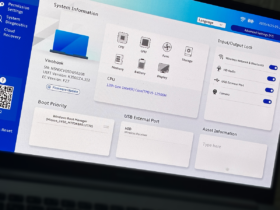

For developers, inference speed is often the primary bottleneck for production deployment. Every millisecond matters, whether you’re building coding assistants, autonomous agents that require rapid multi-step planning, or responsive mobile applications running entirely on-device.

By pairing a Gemma 4 model with its corresponding drafter, developers can achieve:

- Improved responsiveness: Drastically reduce latency for near real-time chat, immersive voice applications and agentic workflows.

- Supercharged local development: Run our 26B MoE and 31B Dense models on personal computers and consumer GPUs with unprecedented speed, powering seamless, complex offline coding and agentic workflows.

- Enhanced on-device performance: Maximize the utility of our E2B and E4B models on edge devices by generating outputs faster, which in turn preserves valuable battery life.

- Zero quality degradation: Because the primary Gemma 4 model retains final verification, you get identical frontier-class reasoning and accuracy, just delivered significantly faster.